Extract Competitor Pricing Data with Web Scraping | 2026

Feb 17, 2026

Web Scraping

AI Automation

E-commerce

Market Intelligence

In the competitive landscape of 2026, the ability to extract competitor pricing data with web scraping has become the baseline for operational survival. Recent industry reports indicate that companies implementing dynamic pricing strategies based on real-time competitor data see sales growth of 2% to 5%. This is not merely about matching a lower price; it is about understanding the elasticity of your market and knowing exactly when you can command a premium, using automated daily market intelligence. As we move through this year, the global competitive intelligence market is on track to reach a $20.2 billion valuation, with pricing analysis standing as the single largest investment area for enterprise-level firms.

Manual research has become a liability for growth-oriented teams. Leading retailers who have transitioned to automated pricing tools have cut their manual research hours by over 80%. More importantly, they have eliminated the human error inherent in copying data from thousands of browser tabs into aging spreadsheets. When you extract competitor pricing data with web scraping, you are moving from a reactive "guess-and-check" model to a proactive, data-driven engine. This shift allows your sales team to stop acting as data entry clerks and start acting as strategic closers.

At Botomation, we observe this transition daily. Our team of experts doesn't just provide a dashboard login; we build custom intelligence systems that integrate directly into your existing workflow. The goal is to provide a "morning report" that dictates strategy, rather than a list of numbers that requires further interpretation. In the following sections, we will break down the mechanics of these systems, the legal framework surrounding them, and the massive ROI realized by firms that have abandoned traditional manual monitoring.

Understanding How to Extract Competitor Pricing Data with Web Scraping

Pricing is the most powerful lever a business can pull to influence its bottom line. Yet, most companies treat it as a static element, updated once a quarter or only when a salesperson reports a lost deal. To extract competitor pricing data with web scraping effectively, one must first recognize that pricing is a living organism. Competitors change their rates based on inventory levels, time of day, and even the browsing history of the user. If you are checking prices manually once a week, you are missing 95% of the market story.

The challenge of manual monitoring is its inability to scale. A human can perhaps track 50 products across three competitors with some degree of accuracy. But what happens when you have 5,000 SKUs and twenty competitors? The system inevitably breaks. Data becomes stale before it is even formatted. Furthermore, manual research is prone to "blind spots" where regional pricing or flash sales go unnoticed. Automated extraction solves this by providing a high-frequency, comprehensive view of the entire market landscape simultaneously.

The Business Case for Pricing Intelligence

Suboptimal pricing is a silent profit killer. If your price is 2% higher than the market equilibrium without a clear value proposition, your conversion rate drops. If it is 2% lower than it needs to be, you are leaving pure margin on the table. When we look at the revenue implications, the numbers are stark. For a company with $10 million in annual revenue, a 1% improvement in price realization, made possible by better market visibility, translates to an additional $100,000 that flows straight to profit if volume and costs stay the same.

Beyond the immediate margin gains, there is a significant cost-saving element in the transition to automation. Consider the overhead of a dedicated research team.

The True Cost of Manual Research

* Base Salary (Junior Analyst): $45,000

* Benefits & Overhead (25%): $11,250

* Total Annual Cost: $56,250

* Output: ~40 hours of manual data entry per week with a 3-5% error rate.

* The Botomation Alternative: A custom-built automated system provides 24/7 monitoring with 99.9% accuracy for a fraction of the long-term headcount cost.

Challenges in Manual Pricing Research

The most overlooked risk of manual research is the "detection trap." When a human sits at a desk and refreshes a competitor's page repeatedly, they often trigger basic security protocols that serve them different prices or block them entirely. This leads to inaccurate data collection, which is arguably worse than no data at all. Decisions made on false data can lead to disastrous pricing wars that erode the brand's long-term value.

Scalability is the final impediment for manual methods. As your business grows, your competitor list expands. Manual research is a linear cost; to track more data, you must hire more people. Automation is non-linear. Once our experts at Botomation build the scraping architecture, adding a new competitor or another 10,000 SKUs requires only a minor adjustment to the script, not a new hire. This allows your intelligence to grow at the same pace as your ambitions.

Extract Competitor Pricing Data with Web Scraping

To extract competitor pricing data with web scraping in 2026, you need to go beyond simple HTML parsing. Many modern e-commerce sites are built on JavaScript frameworks like React or Next.js, and the price is often not in the initial HTML sent to the browser. Instead, the price is fetched via an API call after the page loads. If your scraping tool only looks at the static HTML of such a page, it will see a blank space or a "Loading..." message where the price should be.

Reliable extraction requires a multi-layered approach, as detailed in our guide on web scraping for competitor intelligence. Our team focuses on "headless browser" technology, which mimics a real human user. Tools like Playwright or Puppeteer allow the scraper to wait for JavaScript to execute, ensuring the final price—including any discounts or loyalty rewards—is captured accurately. This is the difference between getting a "list price" and getting the "real-world price" that your customers actually see.

Technical Approaches to Price Extraction

The first step is DOM (Document Object Model) parsing for static elements. While this is a traditional method, it remains useful for simpler sites. However, for 2026-standard platforms, we utilize API interception. By monitoring the network traffic of a site, we can often find the direct data feed the website uses to populate its prices. This is significantly faster and more reliable than trying to "scrape" the visual layout of the page.
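The two approaches above can be contrasted in a minimal sketch using only the Python standard library. The HTML snippet, the `price` class name, and the JSON payload shape are all hypothetical; a real site will use its own markup and its own API format.

```python
import json
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collects the first text node inside each element with class 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "price" in classes:
            self._in_price = True

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())
            self._in_price = False


def price_from_html(html):
    """DOM-style parsing: works only if the price is in the static markup."""
    parser = PriceParser()
    parser.feed(html)
    return parser.prices[0] if parser.prices else None


def price_from_payload(body):
    """API-style parsing: read the price from an assumed JSON response."""
    data = json.loads(body)
    return data["product"]["price"]["amount"]


# Hypothetical inputs for illustration only.
STATIC_HTML = '<div class="product"><span class="price">$19.99</span></div>'
API_BODY = '{"product": {"sku": "A-100", "price": {"amount": "19.99", "currency": "USD"}}}'

print(price_from_html(STATIC_HTML))
print(price_from_payload(API_BODY))
```

The JSON route returns a clean numeric string plus a currency code, while the DOM route returns display text that still needs cleaning, which is one reason a data feed tends to be easier to work with when one is available.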

Handling currency and regional variations is another technical hurdle. A competitor might show $100 in the US but £85 in the UK. A sophisticated scraping system must use localized proxies to ensure it captures the price relevant to your specific market. We also build in normalization logic to convert all scraped data into a single base currency, allowing for apples-to-apples comparisons across a global competitor set.
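As a rough illustration of the normalization step, the sketch below converts scraped amounts into a single base currency. The exchange rates are placeholder values chosen for the example, not real market rates; a production system would load current rates from a rates provider.

```python
from decimal import Decimal, ROUND_HALF_UP

# Placeholder rates to USD, for illustration only.
RATES_TO_USD = {
    "USD": Decimal("1"),
    "GBP": Decimal("1.25"),
    "EUR": Decimal("1.10"),
}


def to_base(amount, currency, rates=RATES_TO_USD):
    """Convert a scraped amount (string or Decimal) to USD, rounded to cents."""
    if currency not in rates:
        raise ValueError(f"no exchange rate configured for {currency}")
    converted = Decimal(amount) * rates[currency]
    return converted.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(to_base("100", "USD"))
print(to_base("85", "GBP"))
```

`Decimal` is used instead of `float` so that currency arithmetic does not pick up binary rounding errors.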

Choosing the Right Scraping Tools

While open-source libraries like Scrapy or BeautifulSoup are excellent for developers, they lack the surrounding infrastructure needed for enterprise-scale pricing intelligence. They do not handle proxy rotation, CAPTCHA solving, or browser fingerprint management out of the box. Commercial "off-the-shelf" tools often provide a generic solution that breaks the moment a competitor changes their website layout, which happens on average every 4.2 months for major retailers.

This is why the agency model is superior. When you partner with Botomation, you are not buying a tool that you have to manage yourself. You are hiring a team that builds and maintains a custom solution. If a competitor updates their site at 2:00 AM on a Tuesday, our monitoring systems catch the failure, and our experts fix the script before your team even starts their workday. You get the data; we handle the code.

| Feature | Manual Research | Off-the-Shelf SaaS | Botomation Custom Build |
|---|---|---|---|
| **Update Frequency** | Weekly/Monthly | Daily (Limited) | Real-time / On-demand |
| **Data Accuracy** | Low (Human Error) | Medium (Generic) | High (Custom Tailored) |
| **Maintenance** | None | User-managed | Agency-managed |
| **Anti-Bot Evasion** | None | Basic | Advanced (Residential Proxies) |
| **Scalability** | Expensive (Hires) | Tier-based (Costs add up) | Seamless & Cost-efficient |

Building Automated Pricing Monitoring Systems

Creating a system to extract competitor pricing data with web scraping is only the first half of the battle. The second half is building the architecture that stores, cleans, and alerts you to that data. A pile of raw numbers is useless. You need a system that understands that a $1.00 drop on a $10.00 item is a massive 10% shift, while a $1.00 drop on a $500.00 item is statistical noise.
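The point about relative versus absolute change can be sketched in a few lines. The 2% alert threshold is an assumption for the example; the right value depends on your margins and category.

```python
def percent_change(old_price, new_price):
    """Relative change from old_price to new_price, in percent."""
    return (new_price - old_price) / old_price * 100


def is_significant(old_price, new_price, threshold_pct=2.0):
    """True when the move is large enough (in relative terms) to alert on."""
    return abs(percent_change(old_price, new_price)) >= threshold_pct


# The same $1.00 drop, judged relative to the item's price.
print(is_significant(10.00, 9.00))     # 10% move on a $10 item
print(is_significant(500.00, 499.00))  # 0.2% move on a $500 item
```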

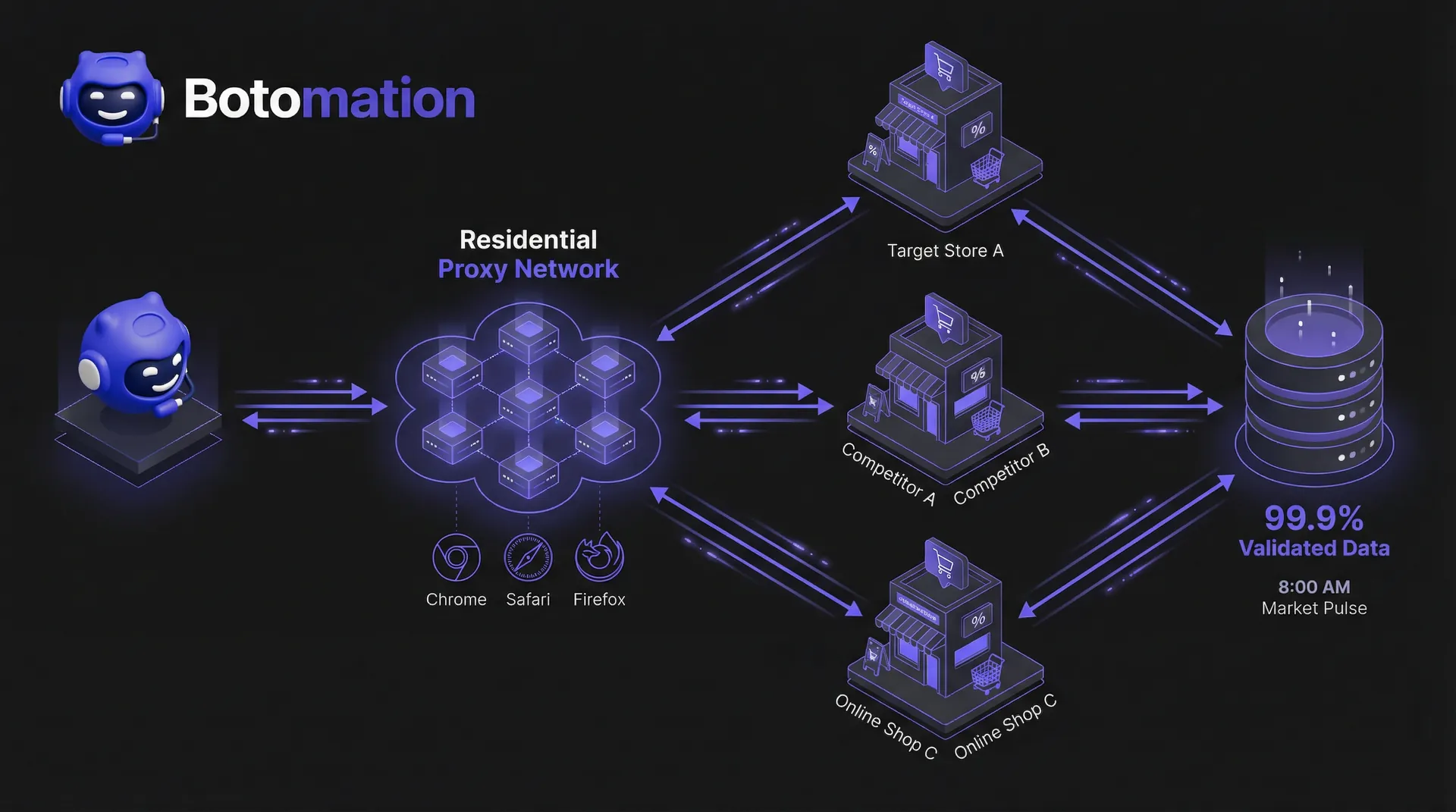

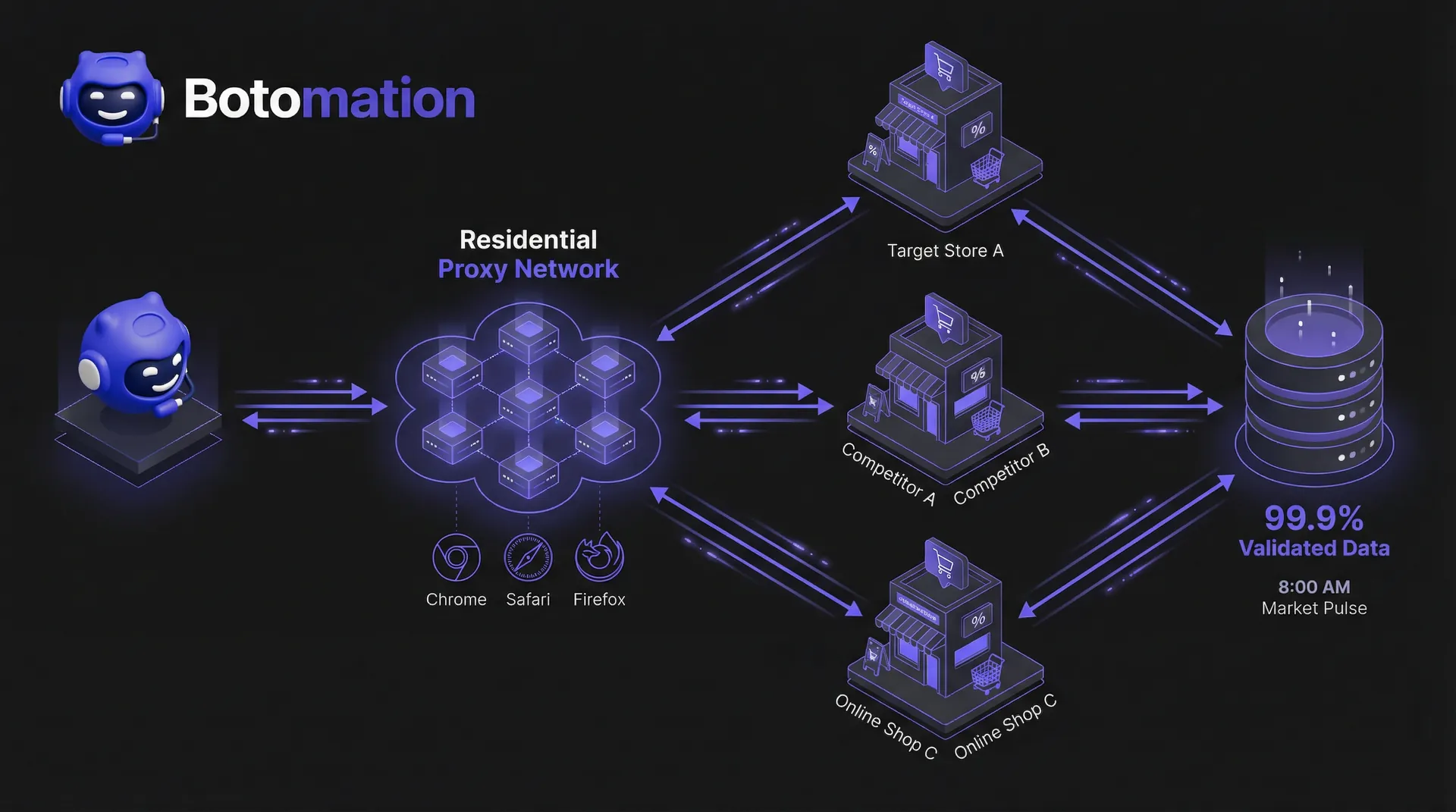

The architecture must be resilient. In 2026, anti-scraping technologies like Cloudflare's latest iterations can detect patterns in milliseconds. A professional system uses a "distributed" approach, spreading requests across thousands of different IP addresses and varying the timing of the scrapes so they do not look like a machine is behind them. This "low and slow" approach ensures long-term access to data without getting your company's IP range blacklisted.
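One simple way to vary request timing is to add random jitter around a base delay. The sketch below is an assumption about how such a schedule might look, not a description of any specific production system; the 30-second base and 50% jitter are illustrative values.

```python
import random


def jittered_delays(count, base_seconds=30.0, jitter=0.5, rng=None):
    """Return `count` delays spread uniformly around base_seconds.

    With jitter=0.5, each delay falls between 50% and 150% of the base,
    so requests do not arrive at a fixed, machine-like interval.
    """
    rng = rng or random.Random()
    low = base_seconds * (1 - jitter)
    high = base_seconds * (1 + jitter)
    return [rng.uniform(low, high) for _ in range(count)]


for delay in jittered_delays(3):
    print(f"wait {delay:.1f}s before the next request")
```

In a real scraper, each delay would be passed to `time.sleep` (or an async equivalent) between requests to the same site.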

System Architecture for Price Scraping

The core of a robust system is proxy rotation. We utilize residential proxies—IP addresses tied to actual home internet connections—to make our scrapers indistinguishable from genuine shoppers. This is combined with "user-agent" rotation, where the scraper pretends to be using different versions of Chrome, Safari, or Firefox on various operating systems. This level of technical detail is what prevents the "Access Denied" screens that plague amateur scraping attempts.
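The rotation idea can be illustrated with a small generator that pairs each outgoing request with a proxy and a user agent. The proxy addresses and user-agent strings below are placeholders invented for the example; real deployments would pull them from a proxy provider and a maintained list of current browser strings.

```python
import itertools
import random

# Hypothetical proxy endpoints and placeholder user-agent strings.
PROXIES = [
    "http://proxy-1.example:8080",
    "http://proxy-2.example:8080",
    "http://proxy-3.example:8080",
]
USER_AGENTS = [
    "ExampleBrowser/1.0 (Windows)",
    "ExampleBrowser/1.0 (macOS)",
    "ExampleBrowser/2.0 (Linux)",
]


def request_profiles(proxies, user_agents, rng=None):
    """Yield an endless stream of (proxy, headers) pairs.

    Proxies are used round-robin; the user agent is picked at random.
    """
    rng = rng or random.Random()
    for proxy in itertools.cycle(proxies):
        yield proxy, {"User-Agent": rng.choice(user_agents)}


profiles = request_profiles(PROXIES, USER_AGENTS)
for proxy, headers in itertools.islice(profiles, 4):
    print(proxy, headers["User-Agent"])
```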

Error handling is another critical component. Websites go down, products go out of stock, and layouts change. A well-designed system includes "retry logic" that can distinguish between a temporary server hiccup and a permanent change to the site. If a price is missed, the system should automatically attempt a re-scrape using a different configuration. Our goal is 100% data coverage, ensuring no gaps in your historical pricing charts.
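A minimal sketch of such retry logic follows. It assumes a caller-supplied `fetch(url)` function that returns a `(status, body)` tuple, and it treats a fixed set of HTTP status codes as temporary; both are assumptions for the example. Anything else, such as a 404, is treated as a permanent problem that needs a human or a different scraper configuration.

```python
import time

# Status codes assumed to indicate a temporary problem worth retrying.
TRANSIENT_STATUSES = {429, 500, 502, 503, 504}


class PermanentFailure(Exception):
    """The page is gone or changed; retrying the same request will not help."""


class RetriesExhausted(Exception):
    """The server kept returning temporary errors."""


def fetch_with_retry(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) until it succeeds, backing off exponentially."""
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status == 200:
            return body
        if status not in TRANSIENT_STATUSES:
            raise PermanentFailure(f"{url} returned {status}")
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    raise RetriesExhausted(f"{url} still failing after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the function easy to test, and a caller can catch `PermanentFailure` to trigger the "different configuration" path described above.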

Data Management for Pricing History

Storing the data is where the real insights happen. You don't just want today's price; you want the price from every day for the last six months. This allows you to identify "pricing patterns." Does your competitor always drop prices on Friday afternoon? Do they raise them during high-traffic holiday weekends? By maintaining a historical database, you can predict their next move rather than just reacting to it.
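As an illustration of the "Friday afternoon" question, the sketch below groups a price history by weekday and averages each group. The history rows are made-up sample data; in practice they would come from your pricing database.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def average_by_weekday(history):
    """history: iterable of (date, price). Returns {weekday name: mean price}."""
    buckets = defaultdict(list)
    for day, price in history:
        buckets[WEEKDAYS[day.weekday()]].append(price)
    return {name: mean(prices) for name, prices in buckets.items()}


# Made-up sample observations for one competitor SKU.
HISTORY = [
    (date(2026, 2, 2), 20.0),   # Monday
    (date(2026, 2, 6), 18.0),   # Friday
    (date(2026, 2, 9), 20.0),   # Monday
    (date(2026, 2, 13), 17.0),  # Friday
]

print(average_by_weekday(HISTORY))
```

A consistently lower Friday average over several months would be the kind of pattern worth planning around.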

Data deduplication is also vital. In e-commerce, the same product might appear under multiple URLs or categories. A sophisticated system uses SKU matching or AI-driven image recognition to ensure that "Product A" on Site 1 is correctly mapped to "Product A" on Site 2, even if the titles are slightly different. This ensures your comparisons are always accurate and your sales team is not misled by mismatched data points.
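Where SKUs are not shared between sites, a simple fallback is fuzzy title matching. The sketch below uses `difflib.SequenceMatcher` from the Python standard library as a stand-in; it is not the SKU matching or image recognition described above, and the 0.8 similarity cutoff and the product titles are assumptions for the example.

```python
from difflib import SequenceMatcher


def normalize(title):
    """Lowercase, treat hyphens as spaces, and collapse repeated whitespace."""
    return " ".join(title.lower().replace("-", " ").split())


def best_match(title, candidates, min_ratio=0.8):
    """Return (candidate, ratio) for the closest title, or None if too weak."""
    scored = [
        (candidate, SequenceMatcher(None, normalize(title), normalize(candidate)).ratio())
        for candidate in candidates
    ]
    best = max(scored, key=lambda pair: pair[1], default=None)
    return best if best and best[1] >= min_ratio else None


# Hypothetical titles for the same product on two sites.
OURS = "Acme Trail Runner 2 - Blue"
THEIRS = ["ACME Trail-Runner 2 (Blue)", "Acme Road Racer 5 Red"]

print(best_match(OURS, THEIRS))
```

Returning `None` for weak matches matters as much as finding strong ones: an unmatched product can be queued for review, while a wrong match silently corrupts the comparison.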

Advanced Pricing Analysis Techniques

Once you have a clean stream of data, you can move into the world of dynamic pricing algorithms. This is where the modern automated approach truly shines. Instead of a human looking at a report and deciding to change a price, a machine-learning model can suggest the optimal price point based on competitor moves, your current inventory, and even automated industry trend analysis derived from web scraping. In 2026, we are even seeing GPT-5 integrated into these systems to analyze the sentiment of competitor reviews alongside their prices.

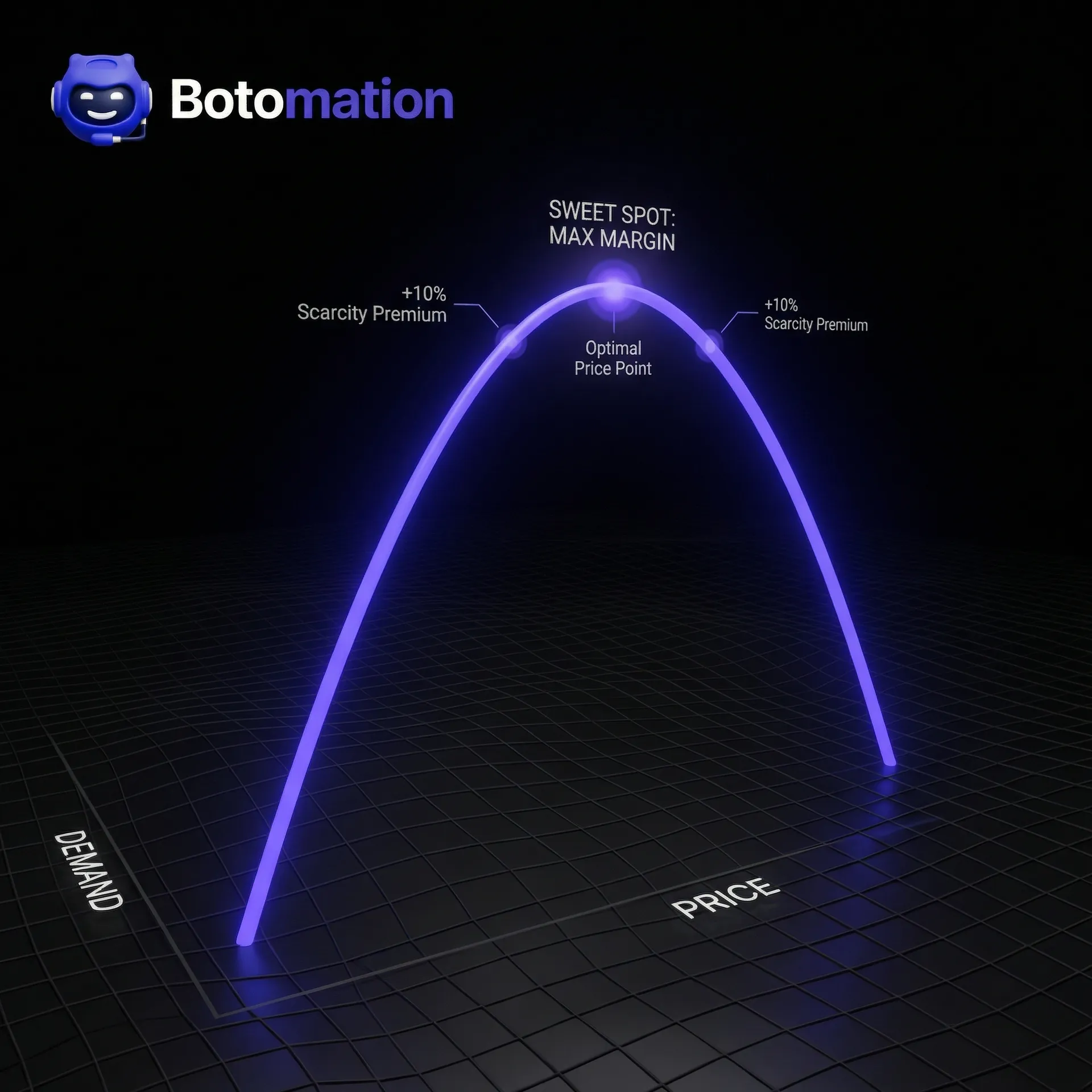

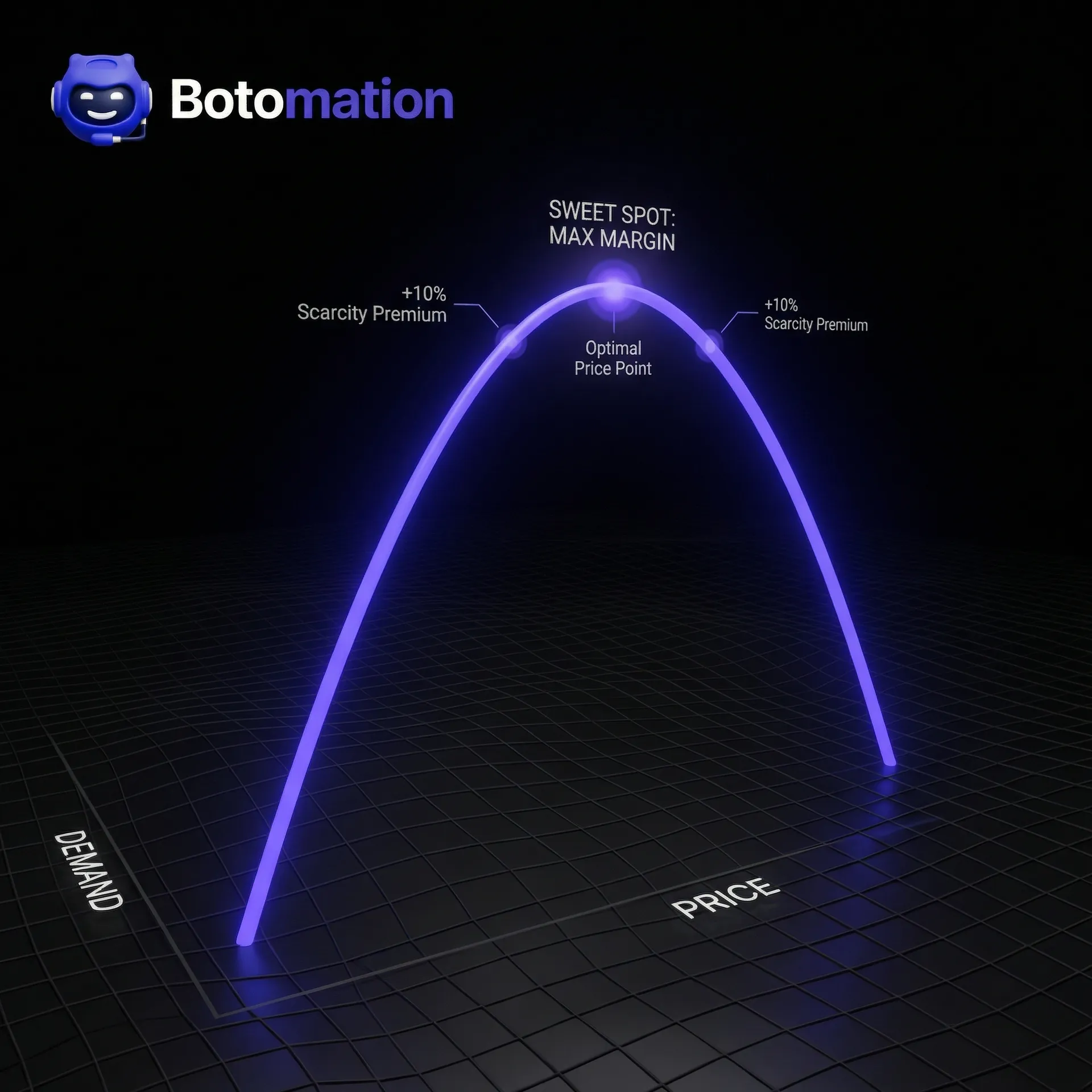

Price elasticity modeling is another advanced technique. By scraping both the price and the "stock status" of a competitor, you can begin to see how their price changes affect their sales volume. If they raise their price by 5% and stay in stock for three weeks, you know the market is willing to pay more. If they drop the price and sell out instantly, you've identified a price floor. This intelligence allows you to find the "sweet spot" where volume and margin are both maximized.

Dynamic Pricing Algorithms

The most successful firms use real-time pricing adjustment systems. These are not just "race to the bottom" bots. A sophisticated algorithm might see that a competitor has run out of stock on a popular item and raise your price by 10% because you are now the only provider in the market. This is how you capture "scarcity margin" that manual teams always miss.
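The scarcity example can be written as a single pricing rule. This is a deliberately simplified sketch: the function name and the flat 10% uplift are illustrative, and a real algorithm would also weigh inventory, demand, and price ceilings.

```python
from decimal import Decimal


def suggest_price(our_price, competitors_in_stock, uplift=Decimal("0.10")):
    """Raise our price by `uplift` only when every tracked competitor is out of stock.

    competitors_in_stock: list of booleans, one per competitor.
    An empty list means we have no data, so the price is left unchanged.
    """
    if competitors_in_stock and not any(competitors_in_stock):
        return (our_price * (1 + uplift)).quantize(Decimal("0.01"))
    return our_price


print(suggest_price(Decimal("50.00"), [False, False]))  # all competitors sold out
print(suggest_price(Decimal("50.00"), [True, False]))   # one still has stock
```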

We also implement A/B testing for pricing strategies. You can test a higher price point for 10% of your traffic and compare the conversion rate against the standard price. When combined with competitor data, this tells you exactly how much your brand premium is worth. If your competitor is $10 cheaper but you still win the sale at the higher price, you know your marketing and trust-building efforts are working.
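To judge whether a conversion difference between two price points is real or just noise, one common choice is a two-proportion z-test. The sketch below is one way to run it with the standard library; the visitor and conversion counts are invented, with the test group at roughly 10% of traffic as in the example above.

```python
from math import erf, sqrt


def two_proportion_z(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rate (B minus A).

    Returns (z, p_value). Uses the pooled-proportion standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - normal_cdf)


# Invented numbers: control price on 9,000 visitors, higher price on 1,000.
z, p = two_proportion_z(conv_a=450, n_a=9000, conv_b=30, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value here would suggest the higher price genuinely reduced conversion; whether the extra margin per sale outweighs the lost volume is a separate calculation.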

Competitor Behavior Analysis

Predicting a competitor's move is the ultimate competitive advantage. By analyzing months of scraped data, our systems can identify "trigger points." For example, you might find that whenever Competitor X has more than 500 units of a specific SKU, they trigger a 15% discount. Armed with this knowledge, you can plan your own promotions to start two days before theirs, capturing the market demand before they even announce their sale.

This level of analysis turns pricing from a defensive necessity into an offensive weapon. You are no longer wondering why your sales dropped; you are looking at a dashboard that told you three days ago that a drop was coming. This allows your leadership team to make calm, strategic decisions rather than panicked, reactive ones.

Real-World Pricing Extraction Case Studies

The theory is sound, but the results in the field are even more compelling. We have seen industries transformed by the ability to extract competitor pricing data with web scraping. It is not just for the "big players" anymore; mid-market companies are using these custom builds to automate competitor intelligence, punch far above their weight class, and steal market share from complacent incumbents.

Fashion Retail Pricing Success

A mid-sized European fashion retailer was struggling with stagnant margins in a highly competitive "fast fashion" market. They were manually checking five major competitors once a week, but by the time they adjusted their prices, the competitors had often changed theirs again. They were always one step behind, leading to a "clearance" cycle where they had to sell off inventory at a loss because they missed the peak demand window.

Our team at Botomation built a custom scraping engine that monitored 12 competitors across four different regions, updating every four hours. We integrated this data into a dynamic pricing model that factored in their current stock levels. Within six months, the retailer saw a 23% increase in net profit. They weren't just selling more; they were selling at a higher average price because they knew exactly when they could afford to stay high while competitors were out of stock.

Travel Industry Pricing Transformation

The travel industry is perhaps the most price-sensitive market on earth. A large regional travel agency was spending over 100 man-hours per week manually checking hotel and flight prices across various booking engines. The data was often wrong by the time it reached the sales team, leading to lost trust with customers when quoted prices could not be honored.

Step-by-Step: How Botomation Automates Your Pricing Research

1. Discovery: Our experts identify your top 10-20 competitors and the specific data points needed (Price, SKU, Stock, Shipping).

2. Custom Build: We write custom scrapers tailored to the specific architecture of each competitor's site.

3. Proxy Deployment: We set up a rotating residential proxy network to ensure 24/7 access without blocking.

4. Data Cleaning: Our system automatically normalizes currencies, matches SKUs, and removes "junk" data.

5. Integration: The clean data is pushed directly into your CRM, Slack, or a custom dashboard every morning.

6. Maintenance: We monitor the scrapers daily and update the code whenever a competitor changes their site.

By automating this entire flow, the travel agency reduced manual research effort by 85%. The sales team now receives a "Market Pulse" report every morning at 8:00 AM, showing exactly where they are the cheapest and where they need to add value to justify a higher price. Customer satisfaction scores soared because the quotes were always accurate and competitive.

Legal and Ethical Considerations

A common concern for companies looking to extract competitor pricing data with web scraping is the legality of the practice. The short answer is: in 2026, web scraping is a standard business practice, but how you do it matters. US courts have generally held that scraping publicly available data (like prices) does not violate the Computer Fraud and Abuse Act (CFAA), provided the scraper does not bypass a login or other access control. In the EU, collecting public, non-personal data is not prohibited outright, though database rights and contract terms can still apply. In any jurisdiction, overloading or crashing the target server creates separate legal exposure.

However, ethical scraping is about more than just staying out of court. It is about being a responsible "web citizen." This means respecting the target website's resources. If you hit a small competitor's site with 10,000 requests per second, you might crash their server. This is not only unethical; it's a direct way to get your scraping operation shut down.

Legal Framework for Pricing Scraping

The primary legal hurdle is usually a website's "Terms of Service" (ToS). Some sites explicitly forbid scraping in their ToS, and how enforceable those terms are depends on the facts. The hiQ v. LinkedIn litigation is the usual reference point: the Ninth Circuit held that scraping publicly accessible data likely does not violate the CFAA, but the district court later found that hiQ had breached LinkedIn's user agreement, so ToS claims cannot be dismissed outright. Our team also stays abreast of the latest developments in GDPR and CCPA to ensure that no "Personally Identifiable Information" (PII) is ever collected during a price scrape.

We also navigate the complexities of international law. Retailers operating in multiple jurisdictions need to be aware that scraping laws in Germany might differ from those in the United States. By partnering with an agency like Botomation, you are leveraging our experience in navigating these legal nuances, so that your intelligence gathering stays compliant and your legal risk stays low.

Ethical Scraping Practices

Ethical scraping involves three main pillars: rate limiting, transparency, and respect. Rate limiting means we stagger our requests to mimic human browsing speeds, ensuring we do not put an undue load on the competitor's infrastructure. Transparency involves identifying our scrapers (where appropriate) via user-agent strings that point back to a contact page, although in competitive intelligence, "stealth" is often a requirement for accuracy.

Most importantly, we respect the robots.txt file whenever possible. This is the "instruction manual" for bots on a website. Some sites list their pricing pages as off-limits in this file to discourage competitors, and we use it as a guide to avoid sensitive areas of a site. By being a "polite" scraper, we ensure the longevity of the data stream. A crashed competitor site provides zero data; a healthy one provides a lifetime of intelligence.
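Checking robots.txt can be automated with Python's standard `urllib.robotparser` module. The sketch below parses an inline, made-up robots.txt so it runs offline; the `price-monitor` bot name and the `shop.example` URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /account/
Crawl-delay: 5
"""


def build_parser(robots_text):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


robots = build_parser(ROBOTS_TXT)
bot = "price-monitor"  # hypothetical user-agent name

print(robots.can_fetch(bot, "https://shop.example/products/a-100"))
print(robots.can_fetch(bot, "https://shop.example/checkout/cart"))
print(robots.crawl_delay(bot))
```

The crawl delay, when a site declares one, is a natural input for the rate limiter: it states the minimum pause the site owner is asking bots to honor.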

The transition from manual to automated pricing intelligence is no longer a luxury for businesses that want to lead their industry. The data is clear: those who extract competitor pricing data with web scraping are more agile, more profitable, and better positioned to handle the volatility of the 2026 market. The traditional methodology of spreadsheets and manual browsing is a recipe for stagnation and missed opportunities.

Success in this arena requires a combination of technical depth, strategic foresight, and constant maintenance. You don't need another software subscription that your team doesn't have time to learn. You need a partner who can build the engine and keep it running while you focus on the steering. Our team of experts at Botomation specializes in creating these custom, high-performance intelligence tools that turn the vast noise of the internet into a clear, actionable signal for your sales and marketing teams.

Stop letting your competitors dictate the market. By the time you notice their price change manually, the opportunity to react has already passed. It is time to move to a proactive model where you are the one setting the pace.

Frequently Asked Questions

Is web scraping competitor prices legal in 2026?

Yes, scraping publicly available information such as prices, product titles, and stock status is generally legal in most jurisdictions, including the US and EU. US appellate rulings such as hiQ v. LinkedIn have held that accessing publicly available pages is unlikely to violate anti-hacking law, although a site's terms of service can still matter. It is vital to avoid scraping behind login screens or collecting any personal information.

How often should I scrape my competitors?

The frequency depends on your industry. In high-volatility sectors like electronics or travel, real-time or hourly scraping is necessary. For B2B services or luxury goods, a daily or even weekly update may be sufficient. Our team helps you determine the "goldilocks" frequency that provides maximum insight without unnecessary technical overhead.

Can websites block me from scraping their prices?

Yes, many websites use anti-bot measures like CAPTCHAs and IP blocking, which is why generic tools often fail. Botomation uses techniques like residential proxy rotation and browser fingerprint management to keep your data flow uninterrupted even when competitors try to hide their prices.

Do I need a developer to manage the scraping tools?

If you use a generic SaaS platform, you will likely need internal technical resources to maintain the scrapers. However, when you partner with Botomation, we act as your extended technical team. We build, host, and maintain the tools, providing you with the final data in a format you can use immediately.

How do I know if the scraped data is accurate?

We implement multi-stage validation. Our systems compare scraped data against historical averages and flag any anomalies (like a 90% price drop) for human review. This prevents "bad data" from entering your decision-making process. We also perform regular manual spot-checks to ensure our scrapers are interpreting the website layout correctly.
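The historical-average check can be sketched as follows. The 50% deviation cutoff is an assumed value for the example, and the function name is illustrative.

```python
from statistics import mean


def needs_review(new_price, history, max_deviation=0.5):
    """Flag a scraped price that strays too far from its historical average.

    history: recent prices for the same product. With no history there is
    nothing to compare against, so the price is flagged for a human to check.
    """
    if not history:
        return True
    baseline = mean(history)
    return abs(new_price - baseline) / baseline > max_deviation


RECENT = [20.0, 19.5, 20.5]  # made-up recent prices

print(needs_review(2.0, RECENT))   # a 90% drop, likely a parsing error
print(needs_review(19.0, RECENT))  # an ordinary 5% move
```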

Ready to automate your growth? Book a call below.

In the competitive landscape of late 2026, the ability to extract competitor pricing data with web scraping has become the baseline for operational survival. Recent industry reports indicate that companies implementing dynamic pricing strategies based on real-time competitor data see a measurable sales growth improvement between 2% and 5%. This is not merely about matching a lower price; it is about comprehending the elasticity of your market and knowing exactly when you can command a premium using automated daily market intelligence. As we move through this year, the global competitive intelligence market is on track to reach a significant $20.2 billion valuation, with pricing analysis standing as the single largest investment area for enterprise-level firms.

The reality of manual research has become a liability for growth-oriented teams. Leading retailers who have transitioned to automated pricing tools have successfully slashed their manual research hours by over 80%. More importantly, they have eliminated the human error inherent in copying data from thousands of browser tabs into aging spreadsheets. When you extract competitor pricing data with web scraping, you are moving from a reactive "guess-and-check" model to a proactive, data-driven engine. This shift allows your sales team to stop acting as data entry clerks and start acting as strategic closers by eliminating manual data entry via automation.

At Botomation, we observe this transition daily. Our team of experts doesn't just provide a dashboard login; we build custom intelligence systems that integrate directly into your existing workflow. The goal is to provide a "morning report" that dictates strategy, rather than a list of numbers that requires further interpretation. In the following sections, we will break down the mechanics of these systems, the legal framework surrounding them, and the massive ROI realized by firms that have abandoned traditional manual monitoring.

Understanding How to Extract Competitor Pricing Data with Web Scraping

Pricing is the most powerful lever a business can pull to influence its bottom line. Yet, most companies treat it as a static element, updated once a quarter or only when a salesperson reports a lost deal. To extract competitor pricing data with web scraping effectively, one must first recognize that pricing is a living organism. Competitors change their rates based on inventory levels, time of day, and even the browsing history of the user. If you are checking prices manually once a week, you are missing 95% of the market story.

The challenge of manual monitoring is its inability to scale. A human can perhaps track 50 products across three competitors with some degree of accuracy. But what happens when you have 5,000 SKUs and twenty competitors? The system inevitably breaks. Data becomes stale before it is even formatted. Furthermore, manual research is prone to "blind spots" where regional pricing or flash sales go unnoticed. Automated extraction solves this by providing a high-frequency, comprehensive view of the entire market landscape simultaneously.

The Business Case for Pricing Intelligence

Suboptimal pricing is a silent profit killer, though custom web development for marketing can provide the infrastructure needed to reclaim these lost margins. If your price is 2% higher than the market equilibrium without a clear value proposition, your conversion rate drops. If it is 2% lower than it needs to be, you are leaving pure margin on the table. When we look at the revenue implications, the numbers are stark. For a company with $10 million in annual revenue, a 1% improvement in price realization—made possible by better market visibility—translates directly to an additional $100,000 in pure profit.

Beyond the immediate margin gains, there is a significant cost-saving element in the transition to automation. Consider the overhead of a dedicated research team.

The True Cost of Manual Research

* Base Salary (Junior Analyst): $45,000

* Benefits & Overhead (25%): $11,250

* Total Annual Cost: $56,250

* Output: ~40 hours of manual data entry per week with a 3-5% error rate.

* The Botomation Alternative: A custom-built automated system provides 24/7 monitoring with 99.9% accuracy for a fraction of the long-term headcount cost.

Challenges in Manual Pricing Research

The most overlooked risk of manual research is the "detection trap." When a human sits at a desk and refreshes a competitor's page repeatedly, they often trigger basic security protocols that serve them different prices or block them entirely. This leads to inaccurate data collection, which is arguably worse than no data at all. Decisions made on false data can lead to disastrous pricing wars that erode the brand's long-term value.

Scalability is the final impediment for manual methods. As your business grows, your competitor list expands. Manual research is a linear cost; to track more data, you must hire more people. Automation is non-linear. Once our experts at Botomation build the scraping architecture, adding a new competitor or another 10,000 SKUs requires only a minor adjustment to the script, not a new hire. This allows your intelligence to grow at the same pace as your ambitions.

Extract Competitor Pricing Data with Web Scraping

To extract competitor pricing data with web scraping in 2026, you need to go beyond simple HTML parsing. Modern e-commerce sites are built on complex frameworks like React or Next.js, where the price is not actually in the initial code sent to the browser. Instead, the price is fetched via an API call after the page loads. If your scraping tool only looks at the static HTML, it will see a blank space or a "Loading..." message where the price should be.

Reliable extraction requires a multi-layered approach, as detailed in our guide on web scraping for competitor intelligence. Our team focuses on "headless browser" technology, which mimics a real human user. Tools like Playwright or Puppeteer allow the scraper to wait for JavaScript to execute, ensuring the final price—including any discounts or loyalty rewards—is captured accurately. This is the difference between getting a "list price" and getting the "real-world price" that your customers actually see.

Technical Approaches to Price Extraction

The first step is DOM (Document Object Model) parsing for static elements. While this is a traditional method, it remains useful for simpler sites. However, for 2026-standard platforms, we utilize API interception. By monitoring the network traffic of a site, we can often find the direct data feed the website uses to populate its prices. This is significantly faster and more reliable than trying to "scrape" the visual layout of the page.

Handling currency and regional variations is another technical hurdle. A competitor might show $100 in the US but £85 in the UK. A sophisticated scraping system must use localized proxies to ensure it captures the price relevant to your specific market. We also build in normalization logic to convert all scraped data into a single base currency, allowing for apples-to-apples comparisons across a global competitor set.

Choosing the Right Scraping Tools

While open-source libraries like Scrapy or BeautifulSoup are excellent for developers, they often lack the infrastructure needed for enterprise-scale pricing intelligence found in the best competitor analysis tools of 2026. They do not handle proxy rotation, CAPTCHA solving, or browser fingerprinting out of the box. Commercial "off-the-shelf" tools often provide a generic solution that breaks the moment a competitor changes their website layout—which happens on average every 4.2 months for major retailers.

This is why the agency model is superior. When you partner with Botomation, you are not buying a tool that you have to manage yourself. You are hiring a team that builds and maintains a custom solution. If a competitor updates their site at 2:00 AM on a Tuesday, our monitoring systems catch the failure, and our experts fix the script before your team even starts their workday. You get the data; we handle the code.

| Feature | Manual Research | Off-the-Shelf SaaS | Botomation Custom Build |

|---|---|---|---|

| **Update Frequency** | Weekly/Monthly | Daily (Limited) | Real-time / On-demand |

| **Data Accuracy** | Low (Human Error) | Medium (Generic) | High (Custom Tailored) |

| **Maintenance** | None | User-managed | Agency-managed |

| **Anti-Bot Evasion** | None | Basic | Advanced (Residential Proxies) |

| **Scalability** | Expensive (Hires) | Tier-based (Costs add up) | Seamless & Cost-efficient |

Building Automated Pricing Monitoring Systems

Creating a system to extract competitor pricing data with web scraping is only the first half of the battle. The second half is building the architecture that stores, cleans, and alerts you to that data. A pile of raw numbers is useless. You need a system that understands that a $1.00 drop on a $10.00 item is a massive 10% shift, while a $1.00 drop on a $500.00 item is statistical noise.
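The difference between a meaningful shift and noise comes down to relative change. A minimal sketch, assuming a 2% alert threshold (an illustrative value, not a recommendation) and hypothetical helper names:

```python
def price_change_pct(old_price: float, new_price: float) -> float:
    """Relative change in percent; negative values are price drops."""
    if old_price <= 0:
        raise ValueError("old_price must be positive")
    return (new_price - old_price) / old_price * 100

def is_significant(old_price: float, new_price: float, threshold_pct: float = 2.0) -> bool:
    """Alert only when the move is large relative to the item's price."""
    return abs(price_change_pct(old_price, new_price)) >= threshold_pct

cheap_item_alert = is_significant(10.00, 9.00)       # a 10% drop
pricey_item_alert = is_significant(500.00, 499.00)   # a 0.2% drop
```

The same $1.00 drop triggers an alert on the $10.00 item and is ignored on the $500.00 item.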

The architecture must be resilient. In 2026, anti-scraping technologies like Cloudflare's latest iterations can detect patterns in milliseconds. A professional system uses a "distributed" approach, spreading requests across thousands of different IP addresses and varying the timing of the scrapes so they do not look like a machine is behind them. This "low and slow" approach ensures long-term access to data without getting your company's IP range blacklisted.

System Architecture for Price Scraping

The core of a robust system is proxy rotation. We utilize residential proxies—IP addresses tied to actual home internet connections—to make our scrapers indistinguishable from genuine shoppers. This is combined with "user-agent" rotation, where the scraper pretends to be using different versions of Chrome, Safari, or Firefox on various operating systems. This level of technical detail is what prevents the "Access Denied" screens that plague amateur scraping attempts.

Error handling is another critical component. Websites go down, products go out of stock, and layouts change. A well-designed system includes "retry logic" that can distinguish between a temporary server hiccup and a permanent change to the site. If a price is missed, the system should automatically attempt a re-scrape using a different configuration. Our goal is 100% data coverage, ensuring no gaps in your historical pricing charts.
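One way to sketch that retry logic is shown below. The `fetch` argument is a hypothetical callable returning a `(status_code, body)` pair, and the split of status codes into "transient" and "permanent" is an assumption; switching to a different scraper configuration on retry is left out for brevity.

```python
import time

# Assumed split: these status codes usually mean "try again later".
TRANSIENT_STATUSES = {429, 500, 502, 503, 504}

class PermanentFailure(Exception):
    """The page is gone or has changed; retrying will not help."""

class RetriesExhausted(Exception):
    """Every attempt hit a transient error."""

def fetch_with_retry(fetch, url, max_attempts=3, backoff_seconds=1.0, sleep=time.sleep):
    """Retry transient errors with exponential backoff; fail fast otherwise."""
    for attempt in range(1, max_attempts + 1):
        status, body = fetch(url)
        if status == 200:
            return body
        if status not in TRANSIENT_STATUSES:
            raise PermanentFailure(f"{url} returned HTTP {status}")
        if attempt < max_attempts:
            # Wait 1x, 2x, 4x ... the base delay between attempts.
            sleep(backoff_seconds * 2 ** (attempt - 1))
    raise RetriesExhausted(f"{url} still failing after {max_attempts} attempts")
```

A `PermanentFailure` is the signal that a human (or a layout-change detector) needs to look at the scraper, while `RetriesExhausted` usually just means the site is having a bad hour.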

Data Management for Pricing History

Storing the data is where the real insights happen. You don't just want today's price; you want the price from every day for the last six months. This allows you to identify "pricing patterns." Does your competitor always drop prices on Friday afternoon? Do they raise them during high-traffic holiday weekends? By maintaining a historical database, you can predict their next move rather than just reacting to it.
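As a rough illustration of pattern-spotting, the sketch below averages a competitor's price by day of the week. The `(date, price)` history format and the sample figures are invented for the example; a real system would query its historical database.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]

def average_price_by_weekday(history):
    """Group (date, price) observations by weekday and average each group."""
    buckets = defaultdict(list)
    for day, price in history:
        buckets[WEEKDAYS[day.weekday()]].append(price)
    return {weekday: mean(prices) for weekday, prices in buckets.items()}

# Invented sample data for one competitor SKU.
history = [
    (date(2026, 1, 2), 90.0),   # Friday
    (date(2026, 1, 5), 100.0),  # Monday
    (date(2026, 1, 9), 92.0),   # Friday
]
pattern = average_price_by_weekday(history)
```

If Friday's average sits consistently below the other days across six months of data, that is the "Friday afternoon drop" showing up in the numbers.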

Data deduplication is also vital. In e-commerce, the same product might appear under multiple URLs or categories. A sophisticated system uses SKU matching or AI-driven image recognition to ensure that "Product A" on Site 1 is correctly mapped to "Product A" on Site 2, even if the titles are slightly different. This ensures your comparisons are always accurate and your sales team is not misled by mismatched data points.
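A simplified version of that matching step might look like this: exact SKU match first, then a fuzzy comparison of normalized titles. The 0.8 similarity threshold and the record layout are assumptions, and image recognition is out of scope for the sketch.

```python
import re
from difflib import SequenceMatcher

def normalize_title(title: str) -> str:
    """Lowercase, strip punctuation, and sort words so word order is ignored."""
    return " ".join(sorted(re.findall(r"[a-z0-9]+", title.lower())))

def find_match(item: dict, candidates: list, min_score: float = 0.8):
    """Return the candidate that is the same product as `item`, or None."""
    # 1. An exact SKU match is the strongest signal.
    for candidate in candidates:
        if item.get("sku") and item["sku"] == candidate.get("sku"):
            return candidate
    # 2. Otherwise fall back to title similarity.
    target = normalize_title(item["title"])
    best, best_score = None, 0.0
    for candidate in candidates:
        score = SequenceMatcher(None, target, normalize_title(candidate["title"])).ratio()
        if score > best_score:
            best, best_score = candidate, score
    return best if best_score >= min_score else None
```

Returning `None` below the threshold matters as much as finding matches: an unmatched product can be queued for review, whereas a wrong match silently corrupts the comparison.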

Advanced Pricing Analysis Techniques

Once you have a clean stream of data, you can move into the world of dynamic pricing algorithms. This is where the modern automated approach truly shines. Instead of a human looking at a report and deciding to change a price, a machine-learning model can suggest the optimal price point based on competitor moves, your current inventory, and even automated industry trend analysis derived from web scraping. In 2026, we are even seeing GPT-5 integrated into these systems to analyze the sentiment of competitor reviews alongside their prices.

Price elasticity modeling is another advanced technique. By scraping both the price and the "stock status" of a competitor, you can begin to see how their price changes affect their sales volume. If they raise their price by 5% and stay in stock for three weeks, you know the market is willing to pay more. If they drop the price and sell out instantly, you've identified a price floor. This intelligence allows you to find the "sweet spot" where volume and margin are both maximized.

Dynamic Pricing Algorithms

The most successful firms use real-time pricing adjustment systems. These are not just "race to the bottom" bots. A sophisticated algorithm might see that a competitor has run out of stock on a popular item and raise your price by 10% because you are now the only provider in the market. This is how you capture "scarcity margin" that manual teams always miss.
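The scarcity rule described above can be reduced to a few lines. This is a deliberately simple sketch: the `suggest_price` name, the flat 10% uplift, and the list-of-booleans input are illustrative assumptions, and a real model would also weigh inventory and demand.

```python
def suggest_price(our_price: float, competitors_in_stock: list, uplift_pct: float = 10.0) -> float:
    """Raise our price only when every tracked competitor is out of stock."""
    # An empty list means we have no data, so leave the price alone.
    if competitors_in_stock and not any(competitors_in_stock):
        return round(our_price * (1 + uplift_pct / 100), 2)
    return our_price

sole_supplier_price = suggest_price(100.00, [False, False])
contested_price = suggest_price(100.00, [True, False])
```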

We also implement A/B testing for pricing strategies. You can test a higher price point for 10% of your traffic and compare the conversion rate against the standard price. When combined with competitor data, this tells you exactly how much your brand premium is worth. If your competitor is $10 cheaper but you still win the sale at the higher price, you know your marketing and trust-building efforts are working.
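To judge whether a difference in conversion rate between two price points is real or just noise, one common choice is a two-proportion z-test. The sketch below is a generic statistical illustration, not a description of any specific production system; the function name and inputs are assumptions.

```python
from math import erf, sqrt

def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) comparing conversion rates of groups A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both groups converted at exactly 0% or 100%: no measurable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)
```

For example, `two_proportion_z(100, 1000, 50, 1000)` compares a standard price that converted 100 of 1,000 visitors with a higher price that converted 50 of 1,000. A small p-value suggests the higher price genuinely cost conversions; a large one suggests the brand premium is holding.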

Competitor Behavior Analysis

Predicting a competitor's move is the ultimate competitive advantage. By analyzing months of scraped data, our systems can identify "trigger points." For example, you might find that whenever Competitor X has more than 500 units of a specific SKU, they trigger a 15% discount. Armed with this knowledge, you can plan your own promotions to start two days before theirs, capturing the market demand before they even announce their sale.

This level of analysis turns pricing from a defensive necessity into an offensive weapon. You are no longer wondering why your sales dropped; you are looking at a dashboard that told you three days ago that a drop was coming. This allows your leadership team to make calm, strategic decisions rather than panicked, reactive ones.

Real-World Pricing Extraction Case Studies

The theory is sound, but the results in the field are even more compelling. We have seen industries transformed by the ability to extract competitor pricing data with web scraping. It is not just for the "big players" anymore: mid-market companies are following proven steps to automate competitor intelligence for B2B sales, using these custom builds to punch far above their weight class and steal market share from complacent incumbents.

Fashion Retail Pricing Success

A mid-sized European fashion retailer was struggling with stagnant margins in a highly competitive "fast fashion" market. They were manually checking five major competitors once a week, but by the time they adjusted their prices, the competitors had often changed theirs again. They were always one step behind, leading to a "clearance" cycle where they had to sell off inventory at a loss because they missed the peak demand window.

Our team at Botomation built a custom scraping engine that monitored 12 competitors across four different regions, updating every four hours. We integrated this data into a dynamic pricing model that factored in their current stock levels. Within six months, the retailer saw a 23% increase in net profit. They weren't just selling more; they were selling at a higher average price because they knew exactly when they could afford to stay high while competitors were out of stock.

Travel Industry Pricing Transformation

The travel industry is perhaps the most price-sensitive market on earth. A large regional travel agency was spending over 100 man-hours per week manually checking hotel and flight prices across various booking engines. The data was often wrong by the time it reached the sales team, leading to lost trust with customers when quoted prices could not be honored.

By automating this entire flow, the agency reduced manual research effort by 85%. The sales team now receives a "Market Pulse" report every morning at 8:00 AM, showing exactly where they are the cheapest and where they need to add value to justify a higher price. Customer satisfaction scores soared because the quotes were always accurate and competitive.

Step-by-Step: How Botomation Automates Your Pricing Research

1. Discovery: Our experts identify your top 10-20 competitors and the specific data points needed (Price, SKU, Stock, Shipping).

2. Custom Build: We write custom scrapers tailored to the specific architecture of each competitor's site.

3. Proxy Deployment: We set up a rotating residential proxy network to ensure 24/7 access without blocking.

4. Data Cleaning: Our system automatically normalizes currencies, matches SKUs, and removes "junk" data.

5. Integration: The clean data is pushed directly into your CRM, Slack, or a custom dashboard every morning.

6. Maintenance: We monitor the scrapers daily and update the code whenever a competitor changes their site.

Legal and Ethical Considerations

A common concern for companies looking to extract competitor pricing data with web scraping is the legality of the practice. The short answer is: in 2026, web scraping is a standard business practice, but how you do it matters. US courts have generally held that scraping publicly available data (like prices) does not by itself violate the Computer Fraud and Abuse Act (CFAA), provided the scraper does not bypass a login or paywall. Copyright and contract claims are assessed separately, the rules in the EU are not identical to those in the US, and overloading or crashing the target server creates legal exposure of its own.

However, ethical scraping is about more than just staying out of court. It is about being a responsible "web citizen." This means respecting the target website's resources. If you hit a small competitor's site with 10,000 requests per second, you might crash their server. This is not only unethical; it's a direct way to get your scraping operation shut down.

Legal Framework for Pricing Scraping

The primary legal hurdle is usually a website's "Terms of Service" (ToS). Some sites explicitly forbid scraping in their ToS, and how enforceable those terms are against third parties scraping public data depends on the jurisdiction and on whether the scraper ever agreed to them. In the landmark hiQ Labs v. LinkedIn case, the Ninth Circuit held that scraping publicly accessible data likely does not violate the CFAA, although the district court later found that hiQ had breached LinkedIn's user agreement. Our team also stays abreast of the latest developments in GDPR and CCPA to ensure that no "Personally Identifiable Information" (PII) is ever collected during a price scrape.

We also navigate the complexities of international law. Retailers operating in multiple jurisdictions need to be aware that scraping laws in Germany might differ from those in the United States. By partnering with an agency like Botomation, you are leveraging our experience in navigating these legal nuances, helping your intelligence gathering remain compliant and low-risk.

Ethical Scraping Practices

Ethical scraping involves three main pillars: rate limiting, transparency, and respect. Rate limiting means we stagger our requests to mimic human browsing speeds, ensuring we do not put an undue load on the competitor's infrastructure. Transparency involves identifying our scrapers (where appropriate) via user-agent strings that point back to a contact page, although in competitive intelligence, "stealth" is often a requirement for accuracy.
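Staggering requests can be as simple as adding random jitter around a base delay. In this sketch the 5-second base and the ±50% jitter are arbitrary illustrative values, and `jittered_delays` is a hypothetical helper.

```python
import random

def jittered_delays(n: int, base_seconds: float = 5.0, jitter: float = 0.5, rng=None):
    """Return n wait times spread randomly around base_seconds.

    jitter=0.5 means each delay falls between 50% and 150% of the base.
    """
    rng = rng or random.Random()
    low = base_seconds * (1 - jitter)
    high = base_seconds * (1 + jitter)
    return [rng.uniform(low, high) for _ in range(n)]

# A seeded generator makes the schedule reproducible for testing.
schedule = jittered_delays(100, rng=random.Random(0))
```

A scraper would sleep for each value in `schedule` between consecutive requests, which keeps the average load predictable while avoiding a fixed, machine-like rhythm.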

Most importantly, we respect the robots.txt file whenever possible. This is the "instruction manual" for bots on a website. Although some sites list their pricing pages as disallowed to discourage competitors' bots, we use the file as a guide to avoid sensitive areas of a site. By being a "polite" scraper, we ensure the longevity of the data stream. A crashed competitor site provides zero data; a healthy one provides a lifetime of intelligence.
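Python's standard library can evaluate robots.txt rules directly. The sketch below parses an invented robots.txt body offline; the bot name and the `shop.example.com` URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /account/
"""

def build_parser(robots_txt: str) -> RobotFileParser:
    """Load robots.txt rules from a string instead of fetching them."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

parser = build_parser(ROBOTS_TXT)
product_allowed = parser.can_fetch("example-price-bot", "https://shop.example.com/products/123")
checkout_allowed = parser.can_fetch("example-price-bot", "https://shop.example.com/checkout/cart")
```

Running the check before each crawl keeps the scraper out of paths such as checkout and account pages that the site owner has marked as off-limits.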

The transition from manual to automated pricing intelligence is no longer a luxury for businesses that want to lead their industry. The data is clear: those who extract competitor pricing data with web scraping are more agile, more profitable, and better positioned to handle the volatility of the 2026 market. The traditional methodology of spreadsheets and manual browsing is a recipe for stagnation and missed opportunities.

Success in this arena requires a combination of technical depth, strategic foresight, and constant maintenance. You don't need another software subscription that your team doesn't have time to learn. You need a partner who can build the engine and keep it running while you focus on the steering. Our team of experts at Botomation specializes in creating these custom, high-performance intelligence tools that turn the vast noise of the internet into a clear, actionable signal for your sales and marketing teams.

Stop letting your competitors dictate the market. By the time you notice their price change manually, the opportunity to react has already passed. It is time to move to a proactive model where you are the one setting the pace.

Frequently Asked Questions

Is web scraping competitor prices legal in 2026?

Scraping publicly available information such as prices, product titles, and stock status is generally lawful in the US and in many other jurisdictions, although the details vary by country and by the target site's terms. US appellate courts have held that accessing publicly available pages is unlikely to violate anti-hacking statutes such as the CFAA. It remains vital to avoid scraping behind login screens or collecting any personal information.

How often should I scrape my competitors?

The frequency depends on your industry. In high-volatility sectors like electronics or travel, real-time or hourly scraping is necessary. For B2B services or luxury goods, a daily or even weekly update may be sufficient. Our team helps you determine the "goldilocks" frequency that provides maximum insight without unnecessary technical overhead.

Can websites block me from scraping their prices?

Yes, many websites use anti-bot measures like CAPTCHAs and IP blocking. This is why generic tools often fail, and why it is essential to track competitor pricing automatically with AI tools designed to handle these barriers. Botomation uses advanced techniques like residential proxy rotation and browser fingerprint management to get past these blocks, ensuring your data flow remains uninterrupted even when competitors try to hide their prices.

Do I need a developer to manage the scraping tools?

If you use a generic SaaS platform, you will likely need internal technical resources to maintain the scrapers. However, when you partner with Botomation, we act as your extended technical team. We build, host, and maintain the tools, providing you with the final data in a format you can use immediately.

How do I know if the scraped data is accurate?

We implement multi-stage validation. Our systems compare scraped data against historical averages and flag any anomalies (like a 90% price drop) for human review. This prevents "bad data" from entering your decision-making process. We also perform regular manual spot-checks to ensure our scrapers are interpreting the website layout correctly.
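The anomaly check can be illustrated with a comparison against the historical mean. The 50% deviation limit and the `flag_anomaly` name are assumptions for the sketch; a production validator would likely use a more robust baseline.

```python
from statistics import mean

def flag_anomaly(history: list, new_price: float, max_deviation_pct: float = 50.0) -> bool:
    """Return True when new_price strays too far from the historical average."""
    if not history:
        # Nothing to compare against yet, so let the first observation through.
        return False
    baseline = mean(history)
    deviation_pct = abs(new_price - baseline) / baseline * 100
    return deviation_pct > max_deviation_pct

needs_review = flag_anomaly([100.0, 102.0, 98.0], 10.0)   # a 90% drop
looks_normal = flag_anomaly([100.0, 102.0, 98.0], 97.0)   # a 3% drop
```

Flagged prices are held back for human review instead of flowing straight into a pricing decision.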

Ready to automate your growth? Book a call below.

