AI Voice Cloning for Video Localization - Save 90% in 2026

Feb 17, 2026

YouTube

AI Automation

Video Localization


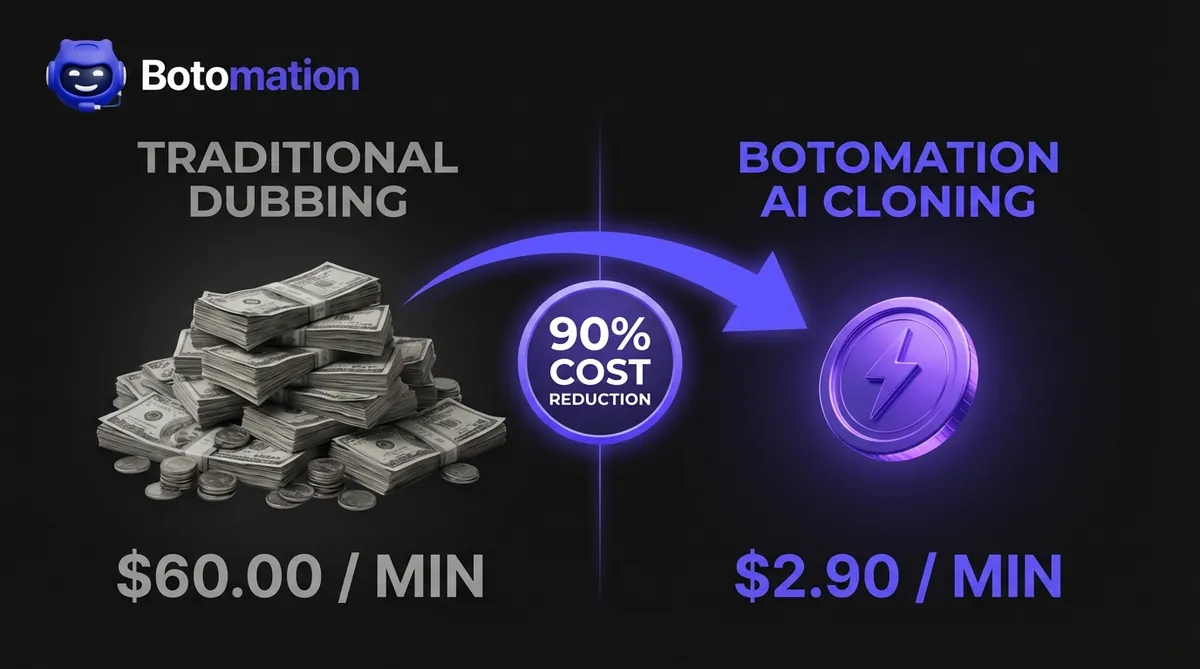

Expanding a YouTube channel beyond English-speaking borders was once a luxury reserved for the top 0.1% of creators. Historically, reaching audiences in Brazil, Germany, or Japan meant facing a significant financial challenge: professional dubbing costs between $30 and $60 per minute of finished video. For a standard twenty-minute educational video, that meant an investment of $600 to $1,200 per language, often before a creator knew if the content would resonate. These costs acted as a massive barrier to entry, keeping high-quality information locked behind language walls and preventing talented creators from achieving true global scale. As of January 2026, AI voice cloning for video localization has removed much of that barrier for multilingual content creation.

The landscape has shifted dramatically. AI voice cloning technology has matured to a point where it no longer sounds like a robotic approximation of human speech, but rather a close digital twin of the original creator. Using neural voice models, creators can now reduce their localization expenses by up to 90% while maintaining their unique vocal identity. Instead of hiring a roster of voice actors who sound nothing like the original speaker, our team at Botomation uses advanced systems to clone your specific tone, cadence, and emotional inflection. This ensures that your brand remains consistent whether your viewer is in New York or New Delhi.

With modern solutions like HeyGen now offering support for over 175 languages at a price point of roughly $2.90 to $4.90 per minute, the economics of content creation have changed. A creator can now localize an entire month of content for the cost of a single traditionally dubbed video. This guide explores the technical mechanics, financial benefits, and strategic implementation of AI voice cloning for video localization, for creators ready to stop leaving views on the table. The era of the "English-only" creator is over, and the era of the global media house has begun: creators can now expand on YouTube globally with voice cloning more efficiently than ever before.

What is AI Voice Cloning for Video Localization?

AI voice cloning for video localization is not merely a refined version of text-to-speech; it represents a fundamental shift in how we process and replicate human communication. At its core, this technology involves deep learning models that analyze the unique "fingerprint" of a human voice. This includes the fundamental frequency, specific vowel pronunciations, and the rhythmic prosody that makes a voice recognizable. In 2026, these models have reached a level of sophistication where they can voice a translated script in 175+ languages (via HeyGen) or 140+ languages (via Synthesia) while retaining the original speaker's essence.

The primary benefit of this approach is preserving brand equity and keeping the brand voice consistent across multilingual video content. When a viewer subscribes to a channel, they are often connecting with the personality of the creator. If that creator is replaced by a generic voice actor in the translated version, that emotional connection is frequently severed. Our experts at Botomation focus on maintaining this connection by ensuring the localized voice keeps your personality, carrying the same excitement, gravity, or humor as the original recording. This allows for a seamless transition across cultures, where the only thing that changes is the language, not the character.

How Does AI Dubbing Technology Work?

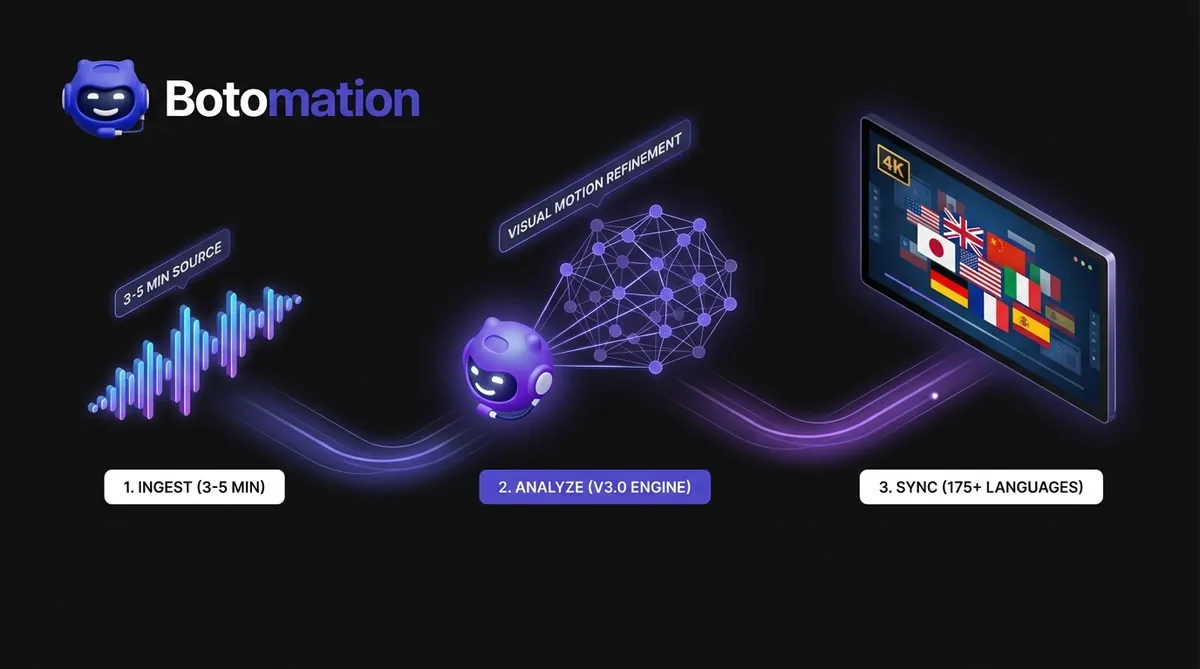

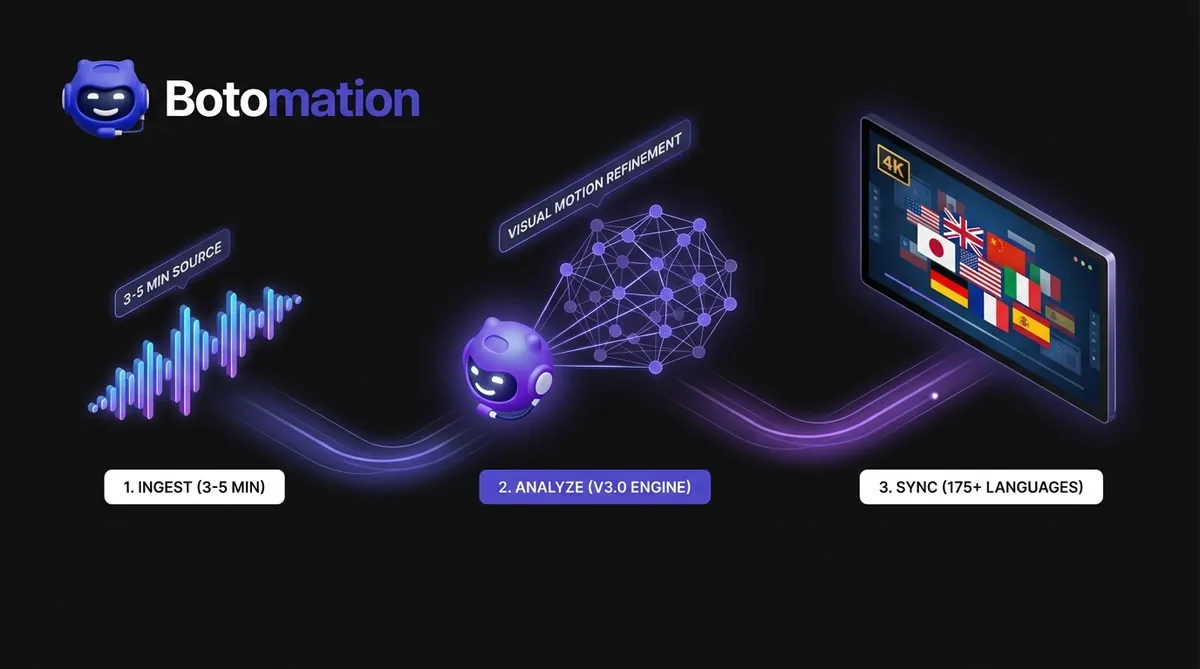

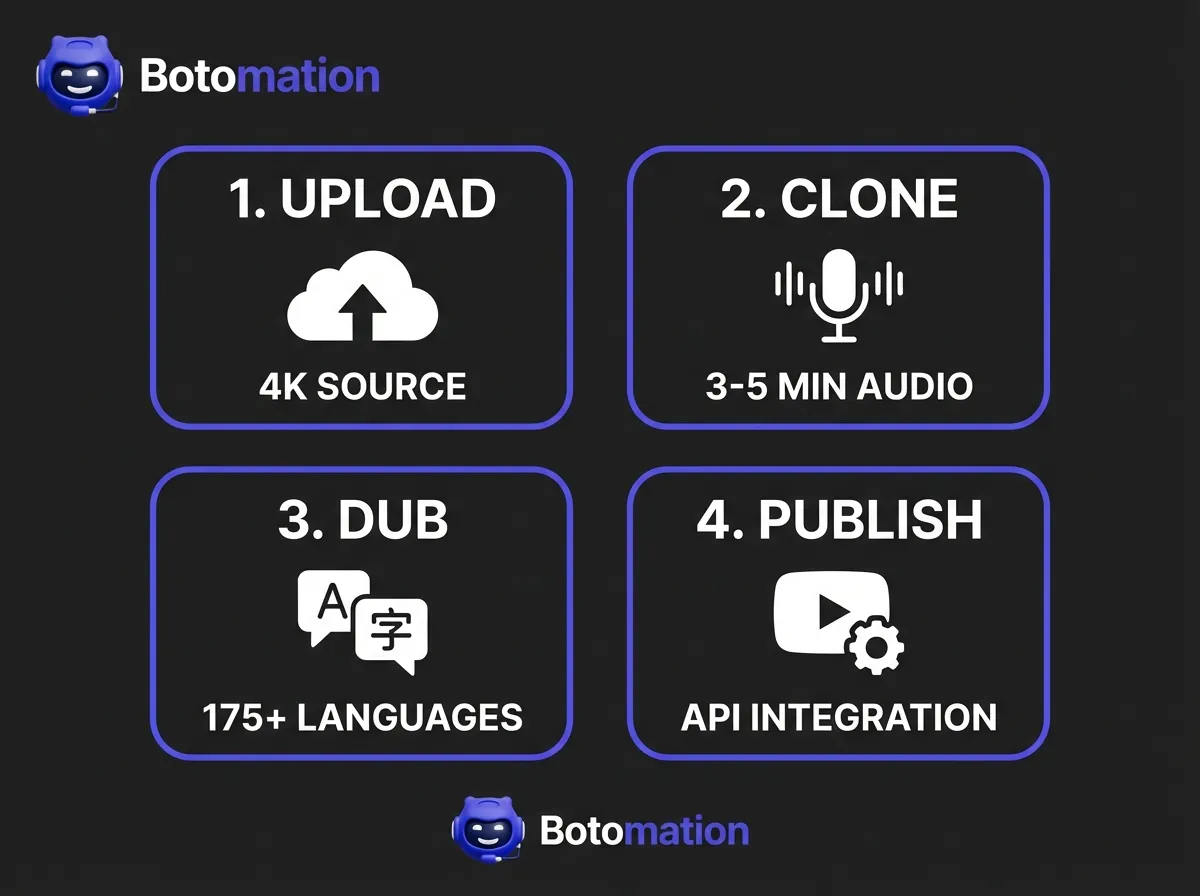

The process of creating a high-fidelity voice clone begins with data ingestion. We typically require three to five minutes of clean, high-quality source audio from the creator to serve as the training set for the neural network. During this phase, the AI identifies thousands of distinct vocal markers, ranging from subtle breathiness to the specific way a creator might trail off at the end of a sentence. Unlike the rigid algorithms of the past, 2026-era machine learning uses "zero-shot" and "few-shot" learning to adapt to new languages without requiring the creator to speak them.

Once the voice profile is established, the system integrates with advanced translation models, often powered by the latest iterations of GPT-5 or specialized linguistic engines. These engines don't just translate word-for-word; they adapt idioms and cultural nuances to ensure the message remains relevant. The final and most critical step involves lip-syncing. Advanced generative models adjust the visual movements of the speaker's mouth in the original video to match the phonemes of the new language. This eliminates the "uncanny valley" effect, making the localized version look as if it were originally filmed in the target language.

What are the Technical Updates in AI Voice Cloning for 2026?

The technical progress of recent releases is substantial. HeyGen v3.0, released in late 2024, introduced a proprietary "Visual Motion Refinement" engine that reduced visual artifacts (the minor glitches around the mouth) by over 60%. This means that even in 4K video, the lip-syncing remains crisp and believable. Processing speed has also improved sharply. While traditional dubbing could take weeks of back-and-forth with a studio, modern AI workflows process a video in roughly one to five times its runtime. A ten-minute video can often be fully dubbed and lip-synced in under an hour.
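As a rough illustration of the processing-time figure above, the sketch below turns a video's runtime into an estimated processing window. The function name and the treatment of the two multipliers as fixed bounds are assumptions made for illustration; real turnaround depends on the platform and its current load.

```python
def estimate_processing_minutes(video_minutes: float,
                                low_factor: float = 1.0,
                                high_factor: float = 5.0) -> tuple[float, float]:
    """Return a (fastest, slowest) processing estimate in minutes.

    The default factors mirror the one-to-five-times-runtime range
    quoted in the article; they are not platform guarantees.
    """
    if video_minutes <= 0:
        raise ValueError("video_minutes must be positive")
    return video_minutes * low_factor, video_minutes * high_factor


fastest, slowest = estimate_processing_minutes(10)
print(f"10-minute video: about {fastest:.0f} to {slowest:.0f} minutes")
```

For a ten-minute video this gives a window of 10 to 50 minutes, which is where the "under an hour" figure comes from.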

Privacy and security have also become central themes in the 2026 tech stack. Leading platforms have implemented end-to-end encryption for voice samples to prevent unauthorized usage. For creators working in the European Union, GDPR compliance is now a standard feature, ensuring that biometric data is handled with the highest level of care. Integration has also improved, with direct APIs now connecting these AI engines to YouTube Studio. This allows our team at Botomation to manage a creator's global presence with surgical precision, pushing localized versions to regional channels with the click of a button.

How Do Costs Compare: Traditional Dubbing vs. AI Solutions?

To understand why AI voice cloning for video localization is the superior choice for growth-minded creators, one must analyze the data. Traditional dubbing is a labor-intensive process involving multiple stakeholders: translators, voice actors, studio engineers, and project managers. Each layer adds a significant markup. When factoring in time spent on scheduling and revisions, the traditional method of localizing content becomes a massive anchor on a creator's budget.

In contrast, the automated solutions offered by Botomation leverage technology to strip away these overhead costs. By replacing a manual studio setup with a highly efficient AI pipeline, we can deliver localized content at a fraction of the price. The Polilingua 2024 report highlighted a case where a small business reduced its localization costs from $15,000 to just $1,500 by switching to AI-driven tools. This 90% reduction is now a standard reality for creators embracing modern technology.

Why are Traditional Dubbing Expenses So High?

When hiring a professional voice actor for YouTube video dubbing, you are paying for their expertise, agent fees, and usage rights. Most professional talent will charge between $300 and $400 for every 1,000 words, which equates to roughly six to eight minutes of video content. A twenty-minute video therefore runs to roughly 2,500 to 3,300 words, so you are looking at about $750 to $1,300 just for the voice talent in a single language.

The costs continue to accumulate when considering technical requirements. Studio rental fees for high-quality environments can range from $200 to $500 per day. Once recorded, an editor must manually sync the audio track to the video, which often adds another 50% to 100% to the total project cost. Expanding into five languages multiplies these expenses by five. For most independent creators, this financial burden makes international expansion nearly impossible through traditional means.
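To make the multiplication concrete, here is a minimal sketch that applies the per-minute rates quoted in this guide ($30 to $60 for traditional dubbing, $2.90 to $4.90 for AI dubbing) to a twenty-minute video in five languages. It assumes the AI rate is charged per finished minute per language, and it leaves out studio rental and manual sync fees, so the traditional totals are conservative. The function names are illustrative only.

```python
# Per-minute rates quoted in this article, stored in cents to avoid float rounding.
TRADITIONAL_CENTS_PER_MIN = (3_000, 6_000)  # $30 to $60
AI_CENTS_PER_MIN = (290, 490)               # $2.90 to $4.90


def total_cost_dollars(cents_per_min: int, minutes: int, languages: int) -> float:
    """Cost of dubbing one video into several languages, in dollars."""
    return cents_per_min * minutes * languages / 100


def cost_range(rates: tuple[int, int], minutes: int, languages: int) -> tuple[float, float]:
    """Apply the low and high per-minute rate to the same job."""
    low, high = rates
    return (total_cost_dollars(low, minutes, languages),
            total_cost_dollars(high, minutes, languages))


minutes, languages = 20, 5
trad_low, trad_high = cost_range(TRADITIONAL_CENTS_PER_MIN, minutes, languages)
ai_low, ai_high = cost_range(AI_CENTS_PER_MIN, minutes, languages)

print(f"Traditional: ${trad_low:,.0f} to ${trad_high:,.0f}")
print(f"AI dubbing:  ${ai_low:,.0f} to ${ai_high:,.0f}")
```

On these figures the traditional route costs $3,000 to $6,000 and the AI route $290 to $490, which is consistent with the roughly 90% saving cited throughout this guide.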

What is the ROI of AI Video Localization?

The pricing model for AI voice cloning for video localization is built for scale. Instead of per-project fees that escalate with every language, platforms like HeyGen offer subscription-based models starting as low as $29 per month. Even at the enterprise level, the cost per minute remains low, often between $2.90 and $4.90. This allows creators to experiment with new markets like Brazil or Indonesia with very little financial risk. If a specific language doesn't perform well, the loss is negligible compared to traditional methods, which makes it practical to test international demand with a few pilot videos before a full rollout.

The return on investment (ROI) is clear when examining the break-even point. Based on current YouTube ad rates and average international CPMs, most creators can break even on their localization costs after localizing just five to ten videos. A YouTube channel with 100,000 subscribers recently reported a 340% increase in international views after implementing an AI dubbing strategy. By reaching previously inaccessible audiences, the channel unlocked new revenue streams from AdSense and sponsorships.
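The break-even idea can be sketched as a simple calculation: how many views a localized video needs before ad revenue covers its dubbing cost. The revenue figure used here ($2.00 per 1,000 views) is a hypothetical placeholder, not a number from this guide or from YouTube; substitute your own channel's revenue per thousand views for each target market.

```python
def break_even_views(cost_cents: int, rpm_cents: int) -> int:
    """Views needed for ad revenue to cover a localization cost.

    cost_cents: dubbing cost for one localized video, in cents.
    rpm_cents:  revenue per 1,000 views, in cents (an assumption you supply).
    """
    if rpm_cents <= 0:
        raise ValueError("rpm_cents must be positive")
    # Ceiling division on integers: round up to the next whole view.
    return -(-cost_cents * 1000 // rpm_cents)


# A 20-minute video at the upper AI rate quoted above ($4.90 per minute).
cost_cents = 490 * 20
hypothetical_rpm_cents = 200  # $2.00 per 1,000 views, placeholder only

views = break_even_views(cost_cents, hypothetical_rpm_cents)
print(f"Break-even at about {views:,} views")
```

With these placeholder inputs the video pays for itself at 49,000 views in the new language; a lower revenue rate raises that threshold proportionally.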

| Feature | Traditional Dubbing | AI Voice Cloning (Botomation) |
|---|---|---|
| **Cost per Minute** | $30 - $60 | $2.90 - $4.90 |
| **Turnaround Time** | 2 - 4 Weeks | 1 - 2 Hours |
| **Voice Consistency** | Different actor per language | Your actual voice in every language |
| **Scalability** | Linear cost increase | Exponential reach for flat fee |
| **Lip-Sync Quality** | Manual (Expensive) | AI-Automated (Included) |

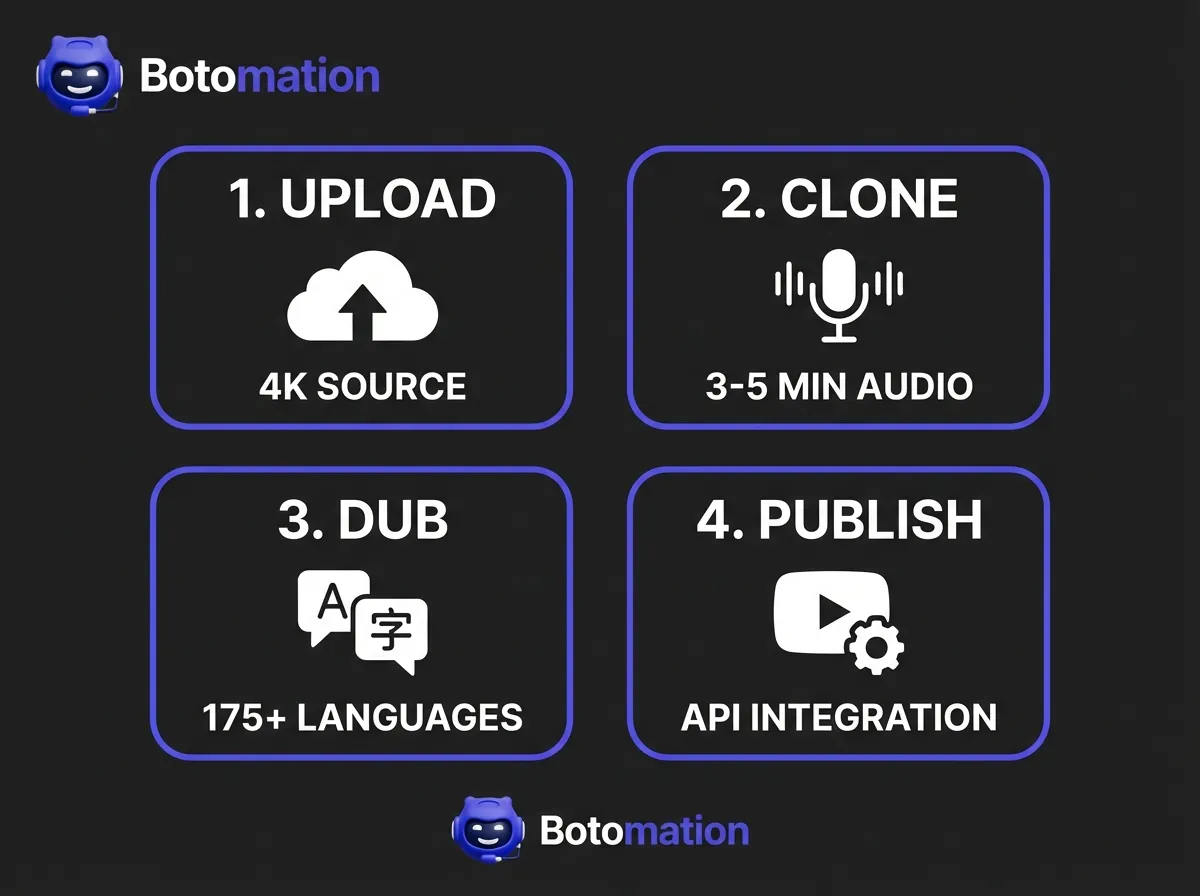

How to Start Multilingual Content Creation: A Step-by-Step Guide

AI localization is straightforward when broken down into manageable phases. The goal is to move from a single-language video to a multi-language global asset without sacrificing quality. While the technology handles the heavy lifting, the human element remains vital. Partnering with an agency like Botomation provides the greatest value here, as we ensure every step is optimized for the best possible output.

The journey begins with your source material. High-quality clones cannot be built on a foundation of poor audio. Once the technical foundation is set, the focus shifts to linguistic accuracy and visual harmony. By following a structured workflow, you can transform your channel into a global powerhouse in a matter of days.

How Should You Prepare Source Material for AI?

The first step is ensuring your source audio is pristine. AI models are highly sensitive; if your original recording has background hiss or echo, those artifacts may be amplified in the cloned version. We recommend using a high-quality condenser microphone with a cardioid pickup pattern in a treated room. You should also provide a clear, timestamped transcript. While AI can auto-generate transcripts, having a verified text base ensures the translation engine doesn't misinterpret technical jargon or brand names.

Selecting the right training data is equally important. You don't need to upload your entire library; instead, choose three to five minutes of your best speaking segments where your energy is high and articulation is clear. This "golden sample" allows the AI to capture the essence of your personality. Once the voice is trained, the next phase is translation. AI tools keep video translation cost-effective, but our team at Botomation often performs a "cultural sanity check" to ensure that metaphors or references remain appropriate in the target culture.
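Before uploading a sample, it can help to confirm that the file actually falls inside the three-to-five-minute window described above. The sketch below reads a WAV header with Python's standard `wave` module and reports the clip's length and sample rate. The 44.1 kHz minimum is an assumption chosen for illustration, not a requirement stated by any platform in this guide, and the check says nothing about hiss or echo, which still need a listening pass.

```python
import wave

MIN_SECONDS = 180          # three minutes, per the guidance above
MAX_SECONDS = 300          # five minutes
MIN_SAMPLE_RATE = 44_100   # assumption: not a figure from this article


def inspect_sample(path: str) -> dict:
    """Read a WAV header and report whether the clip fits the sample guidance."""
    with wave.open(path, "rb") as wav:
        frames = wav.getnframes()
        rate = wav.getframerate()
        channels = wav.getnchannels()
    duration = frames / rate
    return {
        "duration_seconds": duration,
        "sample_rate": rate,
        "channels": channels,
        "length_ok": MIN_SECONDS <= duration <= MAX_SECONDS,
        "rate_ok": rate >= MIN_SAMPLE_RATE,
    }
```

Calling `inspect_sample("golden_sample.wav")` (a hypothetical file name) returns a dictionary you can check before sending the clip for training. The `wave` module only reads uncompressed WAV files, so MP3 or AAC recordings would need converting first.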

How Do You Use HeyGen for YouTube Video Dubbing?

Once preparation is complete, the dubbing itself happens inside a specialized AI dubbing tool. HeyGen has become the industry standard for creators in 2026 due to its massive language library and intuitive interface. After uploading your video and the translated script, you simply select your cloned voice profile. The system then generates the new audio track and begins the task of re-animating the speaker's mouth movements to match the new phonemes.

"The magic of AI localization isn't just in the translation; it's in the preservation of the creator's identity across borders." — Senior Consultant, Botomation.

After the AI has finished, a thorough review is necessary. You should look for lip-sync accuracy and "emotional matching." Does the voice sound excited when the creator is gesturing? At Botomation, our experts fine-tune these parameters to ensure the final product feels natural. Once finalized, the video can be uploaded to regional YouTube channels or added as an additional audio track on the primary video using YouTube’s multi-language audio feature.

What Real-World Results are Creators Seeing?

The theoretical benefits of AI voice cloning for video localization are impressive, but the real-world results are even more compelling. Across various sectors—from entertainment to education—the adoption of AI localization is fundamentally changing content consumption. Major players like Netflix have already integrated these technologies into workflows for non-primary markets, reporting cost reductions of up to 80% while maintaining high quality.

For the independent YouTube creator, these tools are a "force multiplier." They allow a single person or small team to compete with global media conglomerates. We have seen creators move from being local celebrities to becoming household names internationally, simply by making their content accessible to those who don't speak their native tongue.

Which YouTube Creators Have Successfully Scaled Globally?

One striking example comes from a gaming YouTuber with two million subscribers who focused primarily on the English-speaking market. By partnering with Botomation to localize their content into fifteen languages—including Spanish, Portuguese, and Japanese—they saw an influx of 400,000 new international subscribers in six months. The cost of this expansion was less than $1,000 per month, a figure quickly eclipsed by the surge in international ad revenue.

Educational channels have seen even more dramatic results, often utilizing voice cloning for educational YouTube videos to scale their reach without expensive re-recording sessions. A channel focused on STEM subjects increased its total watch time by 250% after dubbing its library into Spanish. Because educational content is often search-driven, having localized titles, descriptions, and audio tracks allowed them to dominate search results in Latin America. Even smaller creators are seeing success; one creator invested just $50 a month in AI dubbing for five languages and saw their international audience grow from 5% of their total views to over 30% in a single quarter.

How Does This Benefit Small Businesses and Educators?

Beyond entertainment, small businesses and e-learning companies are reaping massive rewards. A European e-learning firm specializing in technical certifications struggled to break into the Asian market due to the high cost of manual dubbing. After adopting AI voice localization for their online courses, they localized their entire course catalog into eight languages. The result was a 180% increase in course completion rates, as students were finally able to learn in their native language.

Corporate training has also been revolutionized. Large companies with global workforces often struggle to maintain consistent training standards across different regions. By using AI voice cloning for video localization, they ensure that a message from the CEO or a safety briefing sounds exactly the same in every office worldwide. This has led to a 90% increase in employee engagement in non-English speaking regions. Delivering a message in the "original voice" of the leader, but in the language of the employee, creates a sense of unity that text-based translations cannot match.

Which AI Video Localization Tool is Right for You?

With many options available in 2026, selecting the right tool is a critical task. The "best" tool depends on your specific goals, budget, and content volume. While some platforms excel at high-end cinematic lip-syncing, others are better suited for quick marketing clips. It is important to look beyond marketing claims and focus on technical benchmarks that impact the viewer experience.

At Botomation, we constantly test these platforms to ensure our clients use the most efficient tech stack. We evaluate factors like "naturalness" scores, processing latency, and the breadth of language support. While a tool might offer 100 languages, if the quality of the Polish or Thai dub is subpar, it can damage your brand's reputation in those regions.

How Do HeyGen, Synthesia, and GeckoDub Compare?

HeyGen remains the frontrunner for most YouTube creators because of its specialized focus on video-to-video translation and lip-syncing. With support for over 175 languages and a 90% cost reduction compared to traditional methods, it is the most versatile option for large-scale content libraries. Its v3.0 update has set a new bar for visual fidelity that competitors are still working to meet.

Synthesia, by contrast, is heavily focused on the enterprise market. While it offers 140+ languages and strong API integrations, it is often used for creating videos from scratch using digital avatars rather than localizing existing footage. GeckoDub has carved out a niche in marketing, offering incredible processing speeds—often finishing a video in the time it takes to watch it. For creators on a tight budget, RecCloud offers a basic service for around 60 languages, though the lip-syncing is noticeably less sophisticated than premium options.

Which Quality Metrics Matter Most for Your Channel?

When evaluating these platforms, we use several key performance indicators (KPIs). The first is the "Naturalness Score." In 2024 surveys, HeyGen scored a 4.8 out of 5 for vocal naturalness, while budget competitors often hovered around 4.2. This small difference can be the gap between a viewer staying for the whole video or clicking away because the voice sounds artificial.

Processing speed is another critical factor. Advanced platforms can now handle a ten-minute video in roughly 30 to 60 minutes. Compare this to the two to three days required for even the fastest traditional studios, and the efficiency gain is clear. Finally, we look at lip-sync accuracy. Top-tier platforms maintain a 95% accuracy rate, meaning mouth movements are virtually indistinguishable from a native speaker. This high level of quality is why HeyGen reports a 92% retention rate among professional content creators.

Frequently Asked Questions

Does AI voice cloning sound robotic?

Not in 2026. Modern neural networks capture the subtle nuances of human speech, including breathing, emotional tone, and specific accents. When properly trained with high-quality source audio, the resulting clone is virtually indistinguishable from the original speaker to the average listener.

Is it difficult to set up the lip-syncing?

If you are doing it yourself, there is a learning curve to ensure visual artifacts are minimized. However, when you partner with Botomation, our experts handle the technical alignment. The AI does the heavy lifting, and we provide the quality control to ensure the mouth movements look natural in every frame.

How much can I actually save compared to a studio?

The savings are typically around 90%. While a professional studio might charge $1,200 for a twenty-minute video in one language, an AI-driven approach through our agency costs a fraction of that, allowing you to localize into ten languages for the price of one traditional dub.

Can I use my own voice for the translations?

Yes, that is the primary advantage of voice cloning. The technology takes your unique vocal characteristics and applies them to the translated text. Your audience in France or Germany will hear your voice speaking their language, which is essential for maintaining brand consistency.

Is this technology legal and ethical?

Yes, provided you have the rights to the voice being cloned. At Botomation, we only work with creators who provide their own voice samples. We also prioritize platforms that use high-level encryption and follow strict data privacy regulations like GDPR to protect your biometric data.

The decision to go global is no longer a question of "if" but "when." The barriers that once kept creators confined to their native languages have been dismantled by the power of AI voice cloning for video localization. You can now reach billions of potential viewers with a voice that is authentically yours, at a price point that makes sense for a growing business. The traditional method of manual dubbing is a relic of the past—slow, expensive, and inflexible. The modern approach is instant, affordable, and infinitely scalable.

By partnering with Botomation, you aren't just buying a tool; you are hiring a team of experts who understand how to navigate the complexities of global content distribution. We handle the technical heavy lifting, the linguistic nuance, and the quality assurance, leaving you free to focus on what you do best: creating incredible content. The world is waiting to hear what you have to say. Don't let a language barrier stand in your way any longer.

Ready to automate your growth? Book a free consultation call below.

Expanding a YouTube channel beyond English-speaking borders was once a luxury reserved for the top 0.1% of creators. Historically, reaching audiences in Brazil, Germany, or Japan meant facing a significant financial challenge: professional dubbing costs between $30 and $60 per minute of finished video. However, the emergence of AI voice cloning for video localization has fundamentally changed the landscape for multilingual content creation as of January 2026. For a standard twenty-minute educational video, traditional costs required an investment of $1,200 per language—often before a creator knew if the content would resonate. These prohibitive costs acted as a massive barrier to entry, keeping high-quality information locked behind language walls and preventing talented creators from achieving true global scale.

The landscape has shifted dramatically. AI voice cloning technology has matured to a point where it no longer sounds like a robotic approximation of human speech, but rather a perfect digital twin of the original creator. By utilizing sophisticated neural networks, creators can now reduce their localization expenses by up to 90% while maintaining their unique vocal identity. Instead of hiring a roster of voice actors who sound nothing like the original speaker, our team at Botomation uses advanced systems to clone your specific tone, cadence, and emotional inflection. This ensures that your brand remains consistent whether your viewer is in New York or New Delhi.

With modern solutions like HeyGen now offering support for over 175 languages at a price point of roughly $2.90 to $4.90 per minute, the economics of content creation have changed forever. A creator can now localize an entire month of content for the cost of a single traditionally dubbed video. This guide explores the technical mechanics, financial benefits, and strategic implementation of AI voice cloning for video localization for creators ready to stop leaving millions of views on the table. The era of the "English-only" creator is over, and the era of the global media house has begun, allowing creators to expand YouTube globally with voice cloning more efficiently than ever before.

What is AI Voice Cloning for Video Localization?

AI voice cloning for video localization is not merely a refined version of text-to-speech; it represents a fundamental shift in how we process and replicate human communication. At its core, this technology involves deep learning models that analyze the unique "fingerprint" of a human voice. This includes the fundamental frequency, specific vowel pronunciations, and the rhythmic prosody that makes a voice recognizable. In 2026, these models have reached a level of sophistication where they can translate a script into 175+ languages (via HeyGen) or 140+ languages (via Synthesia) while retaining the original speaker's essence.

The primary benefit of this approach is the preservation of brand equity and maintaining brand voice consistency in multilingual video content. When a viewer subscribes to a channel, they are often connecting with the personality of the creator. If that creator is replaced by a generic voice actor in the translated version, that emotional connection is frequently severed. Our experts at Botomation focus on maintaining this connection by ensuring you maintain voice personality in video localization, carrying the same excitement, gravity, or humor as the original recording. This allows for a seamless transition across cultures, where the only thing that changes is the language, not the character.

How Does AI Dubbing Technology Work?

The process of creating a high-fidelity voice clone begins with data ingestion. We typically require three to five minutes of clean, high-quality source audio from the creator to serve as the training set for the neural network. During this phase, the AI identifies thousands of distinct vocal markers, ranging from subtle breathiness to the specific way a creator might trail off at the end of a sentence. Unlike the rigid algorithms of the past, 2026-era machine learning uses "zero-shot" and "few-shot" learning to adapt to new languages without requiring the creator to speak them.

Once the voice profile is established, the system integrates with advanced translation models, often powered by the latest iterations of GPT-5 or specialized linguistic engines. These engines don't just translate word-for-word; they adapt idioms and cultural nuances to ensure the message remains relevant. The final and most critical step involves lip-syncing. Advanced generative models adjust the visual movements of the speaker's mouth in the original video to match the phonemes of the new language. This eliminates the "uncanny valley" effect, making the localized version look as if it were originally filmed in the target language.

What are the Technical Updates in AI Voice Cloning for 2026?

The technological leaps seen in the last twelve months are staggering. HeyGen v3.0, released in late 2024, introduced a proprietary "Visual Motion Refinement" engine that reduced visual artifacts—those minor glitches around the mouth—by over 60%. This means that even in high-definition 4K videos, the lip-syncing remains crisp and believable. Furthermore, processing speed has increased exponentially. While traditional dubbing could take weeks of back-and-forth with a studio, modern AI workflows allow for processing at 1-5x the video length. A ten-minute video can often be fully dubbed and lip-synced in under an hour.

Privacy and security have also become central themes in the 2026 tech stack. Leading platforms have implemented end-to-end encryption for voice samples to prevent unauthorized usage. For creators working in the European Union, GDPR compliance is now a standard feature, ensuring that biometric data is handled with the highest level of care. Integration has also improved, with direct APIs now connecting these AI engines to YouTube Studio. This allows our team at Botomation to manage a creator's global presence with surgical precision, pushing localized versions to regional channels with the click of a button.

How Do Costs Compare: Traditional Dubbing vs. AI Solutions?

To understand why AI voice cloning for video localization is the superior choice for growth-minded creators, one must analyze the data. Traditional dubbing is a labor-intensive process involving multiple stakeholders: translators, voice actors, studio engineers, and project managers. Each layer adds a significant markup. When factoring in time spent on scheduling and revisions, the traditional method of localizing content becomes a massive anchor on a creator's budget.

In contrast, the automated solutions offered by Botomation leverage technology to strip away these overhead costs. By replacing a manual studio setup with a highly efficient AI pipeline, we can deliver localized content at a fraction of the price. The Polilingua 2024 report highlighted a case where a small business reduced its localization costs from $15,000 to just $1,500 by switching to AI-driven tools. This 90% reduction is now a standard reality for creators embracing modern technology.

Why are Traditional Dubbing Expenses So High?

When hiring a professional voice actor for YouTube video dubbing, you are paying for their expertise, agent fees, and usage rights. Most professional talent will charge between $300 and $400 for every 1,000 words, which equates to roughly six to eight minutes of video content. If your video is twenty minutes long, you are looking at $800 to $1,000 just for the voice talent in a single language.

The costs continue to accumulate when considering technical requirements. Studio rental fees for high-quality environments can range from $200 to $500 per day. Once recorded, an editor must manually sync the audio track to the video, which often adds another 50% to 100% to the total project cost. Expanding into five languages multiplies these expenses by five. For most independent creators, this financial burden makes international expansion nearly impossible through traditional means.

What is the ROI of AI Video Localization?

The pricing model for AI voice cloning for video localization is built for scale. Instead of per-project fees that escalate with every language, platforms like HeyGen offer subscription-based models starting as low as $29 per month. Even at the enterprise level, the cost per minute remains incredibly low—often between $2.90 and $4.90. This allows creators to experiment with new markets like Brazil or Indonesia with virtually zero financial risk. If a specific language doesn't perform well, the loss is negligible compared to traditional methods, especially when testing international market demand with video pilots before a full rollout.

The return on investment (ROI) is clear when examining the break-even point. Based on current YouTube ad rates and average international CPMs, most creators can break even on their localization costs after localizing just five to ten videos. A YouTube channel with 100,000 subscribers recently reported a 340% increase in international views after implementing an AI dubbing strategy. By reaching previously inaccessible audiences, they increased YouTube revenue via international expansion with AI dubbing, unlocking new revenue streams from AdSense and sponsorships.

| Feature | Traditional Dubbing | AI Voice Cloning (Botomation) |

|---|---|---|

| **Cost per Minute** | $30 - $60 | $2.90 - $4.90 |

| **Turnaround Time** | 2 - 4 Weeks | 1 - 2 Hours |

| **Voice Consistency** | Different actor per language | Your actual voice in every language |

| **Scalability** | Linear cost increase | Exponential reach for flat fee |

| **Lip-Sync Quality** | Manual (Expensive) | AI-Automated (Included) |

How to Start Multilingual Content Creation: A Step-by-Step Guide

Navigating AI localization is remarkably straightforward when broken down into manageable phases. The goal is to move from a single-language video to a multi-language global asset without sacrificing quality by following 7 steps to automate YouTube international expansion with voice cloning. While the technology handles the heavy lifting, the human element remains vital. Partnering with an agency like Botomation provides the greatest value here, as we ensure every step is optimized for the best possible output.

The journey begins with your source material. High-quality clones cannot be built on a foundation of poor audio. Once the technical foundation is set, the focus shifts to linguistic accuracy and visual harmony. By following a structured workflow, you can transform your channel into a global powerhouse in a matter of days.

How Should You Prepare Source Material for AI?

The first step is ensuring your source audio is pristine. AI models are highly sensitive; if your original recording has background hiss or echo, those artifacts may be amplified in the cloned version. We recommend using a high-quality cardioid or condenser microphone in a treated room. You should also provide a clear, timestamped transcript. While AI can auto-generate transcripts, having a verified text base ensures the translation engine doesn't misinterpret technical jargon or brand names.
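
As one way to produce a timestamped transcript, the sketch below writes verified segments in the SubRip (SRT) layout. SRT is an assumption made for this example, since this guide does not name a required format, and the function names and sample sentences are illustrative. Check which transcript format your dubbing platform accepts.

```python
def srt_timestamp(seconds):
    """Convert seconds to an SRT timestamp such as 00:01:05,250."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments):
    """Build SRT text from (start_seconds, end_seconds, text) tuples."""
    blocks = []
    for index, (start, end, text) in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n"
        )
    return "\n".join(blocks)


# Illustrative segments; replace with your own verified transcript.
segments = [
    (0.0, 3.5, "Welcome back to the channel."),
    (3.5, 8.0, "Today we are looking at video localization."),
]
print(to_srt(segments))
```

Keeping brand names and technical terms spelled correctly in this file is what protects them during translation.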

Selecting the right training data is equally important. You don't need to upload your entire library; instead, choose three to five minutes of your best speaking segments where your energy is high and articulation is clear. This "golden sample" allows the AI to capture the essence of your personality. Once the voice is trained, the next phase is translation. Achieving cost-effective video translation often involves using AI tools, but our team at Botomation often performs a "cultural sanity check" to ensure that metaphors or references remain appropriate in the target culture.
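
Before uploading a sample, it can help to confirm that it falls inside the three-to-five-minute window described above. The following sketch uses Python's standard `wave` module and assumes the sample is an uncompressed WAV file. The function names are illustrative, and the length check says nothing about audio quality, which still needs a listen.

```python
import wave

MIN_SECONDS = 3 * 60  # three minutes
MAX_SECONDS = 5 * 60  # five minutes


def wav_duration_seconds(path):
    """Return the duration of an uncompressed WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def is_golden_sample_length(seconds):
    """True when the duration falls inside the three-to-five-minute window."""
    return MIN_SECONDS <= seconds <= MAX_SECONDS
```

For example, `is_golden_sample_length(wav_duration_seconds("sample.wav"))` returns `True` for a four-minute recording, where `sample.wav` is a placeholder for your own file.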

How Do You Use HeyGen for YouTube Video Dubbing?

Once preparation is complete, the dubbing itself happens inside a dedicated AI voice dubbing tool. HeyGen has become the industry standard for creators in 2026 due to its massive language library and intuitive interface. After uploading your video and the translated script, you select your cloned voice profile. The system then generates the new audio track and re-animates the speaker's mouth movements to match the new phonemes.

"The magic of AI localization isn't just in the translation; it's in the preservation of the creator's identity across borders." — Senior Consultant, Botomation.

After the AI has finished, a thorough review is necessary. You should look for lip-sync accuracy and "emotional matching." Does the voice sound excited when the creator is gesturing? At Botomation, our experts fine-tune these parameters to ensure the final product feels natural. Once finalized, the video can be uploaded to regional YouTube channels or added as an additional audio track on the primary video using YouTube’s multi-language audio feature.

What Real-World Results are Creators Seeing?

The theoretical benefits of AI voice cloning for video localization are impressive, but the real-world results are even more compelling. Across various sectors—from entertainment to education—the adoption of AI localization is fundamentally changing content consumption. Major players like Netflix have already integrated these technologies into workflows for non-primary markets, reporting cost reductions of up to 80% while maintaining high quality.

For the independent YouTube creator, these tools are a "force multiplier." They allow a single person or small team to compete with global media conglomerates. We have seen creators move from being local celebrities to becoming household names internationally, simply by making their content accessible to those who don't speak their native tongue.

Which YouTube Creators Have Successfully Scaled Globally?

One striking example comes from a gaming YouTuber with two million subscribers who focused primarily on the English-speaking market. By partnering with Botomation to localize their content into fifteen languages—including Spanish, Portuguese, and Japanese—they saw an influx of 400,000 new international subscribers in six months. The cost of this expansion was less than $1,000 per month, a figure quickly eclipsed by the surge in international ad revenue.

Educational channels have seen even more dramatic results, often using voice cloning to scale their reach without expensive re-recording sessions. A channel focused on STEM subjects increased its total watch time by 250% after dubbing its library into Spanish. Because educational content is often search-driven, having localized titles, descriptions, and audio tracks allowed the channel to dominate search results in Latin America. Even smaller creators are seeing success; one creator invested just $50 a month in AI dubbing for five languages and saw their international audience grow from 5% of total views to over 30% in a single quarter.

How Does This Benefit Small Businesses and Educators?

Beyond entertainment, small businesses and e-learning companies are reaping massive rewards. A European e-learning firm specializing in technical certifications struggled to break into the Asian market due to the high cost of manual dubbing. After adopting AI voice localization, the firm localized its entire course catalog into eight languages. The result was a 180% increase in course completion rates, as students were finally able to learn in their native language.

Corporate training has also been revolutionized. Large companies with global workforces often struggle to maintain consistent training standards across different regions. By using AI voice cloning for video localization, they ensure that a message from the CEO or a safety briefing sounds exactly the same in every office worldwide. This has led to a 90% increase in employee engagement in non-English speaking regions. Delivering a message in the "original voice" of the leader, but in the language of the employee, creates a sense of unity that text-based translations cannot match.

Which AI Video Localization Tool is Right for You?

With many options available in 2026, selecting the right tool is a critical task. The "best" tool depends on your specific goals, budget, and content volume. While some platforms excel at high-end cinematic lip-syncing, others are better suited for quick marketing clips. It is important to look beyond marketing claims and focus on technical benchmarks that impact the viewer experience.

At Botomation, we constantly test these platforms to ensure our clients use the most efficient tech stack. We evaluate factors like "naturalness" scores, processing latency, and the breadth of language support. While a tool might offer 100 languages, if the quality of the Polish or Thai dub is subpar, it can damage your brand's reputation in those regions.

How Do HeyGen, Synthesia, and GeckoDub Compare?

HeyGen remains the frontrunner for most YouTube creators because of its specialized focus on video-to-video translation and lip-syncing. With support for over 175 languages and a 90% cost reduction compared to traditional methods, it is the most versatile option for large-scale content libraries. Its v3.0 update has set a new bar for visual fidelity that competitors are still working to meet.

Synthesia, by contrast, is heavily focused on the enterprise market. While it offers 140+ languages and strong API integrations, it is often used for creating videos from scratch using digital avatars rather than localizing existing footage. GeckoDub has carved out a niche in marketing, offering incredible processing speeds—often finishing a video in the time it takes to watch it. For creators on a tight budget, RecCloud offers a basic service for around 60 languages, though the lip-syncing is noticeably less sophisticated than premium options.

Which Quality Metrics Matter Most for Your Channel?

When evaluating these platforms, we use several key performance indicators (KPIs). The first is the "Naturalness Score." In 2024 surveys, HeyGen scored a 4.8 out of 5 for vocal naturalness, while budget competitors often hovered around 4.2. This seemingly small gap can be the difference between a viewer staying for the whole video and clicking away because the voice sounds artificial.

Processing speed is another critical factor. Advanced platforms can now handle a ten-minute video in roughly 30 to 60 minutes. Compare this to the two to three days required for even the fastest traditional studios, and the efficiency gain is clear. Finally, we look at lip-sync accuracy. Top-tier platforms maintain a 95% accuracy rate, meaning mouth movements are virtually indistinguishable from a native speaker. This high level of quality is why HeyGen reports a 92% retention rate among professional content creators.

Frequently Asked Questions

Does AI voice cloning sound robotic?

Not in 2026. Modern neural networks capture the subtle nuances of human speech, including breathing, emotional tone, and specific accents. When properly trained with high-quality source audio, the resulting clone is virtually indistinguishable from the original speaker to the average listener.

Is it difficult to set up the lip-syncing?

If you are doing it yourself, there is a learning curve to ensure visual artifacts are minimized. However, when you partner with Botomation, our experts handle the technical alignment. The AI does the heavy lifting, and we provide the quality control to ensure the mouth movements look natural in every frame.

How much can I actually save compared to a studio?

The savings are typically around 90%. While a professional studio might charge $1,200 for a twenty-minute video in one language, an AI-driven approach through our agency costs a fraction of that, allowing you to localize into ten languages for the price of one traditional dub.

Can I use my own voice for the translations?

Yes, that is the primary advantage of voice cloning. The technology takes your unique vocal characteristics and applies them to the translated text. Your audience in France or Germany will hear your voice speaking their language, which is essential for maintaining brand consistency.

Is this technology legal and ethical?

Yes, provided you have the rights to the voice being cloned. At Botomation, we only work with creators who provide their own voice samples. We also prioritize platforms that use high-level encryption and follow strict data privacy regulations like GDPR to protect your biometric data.

The decision to go global is no longer a question of "if" but "when." The barriers that once kept creators confined to their native languages have been dismantled by the power of AI voice cloning for video localization. You can now reach billions of potential viewers with a voice that is authentically yours, at a price point that makes sense for a growing business. The traditional method of manual dubbing is a relic of the past—slow, expensive, and inflexible. The modern approach is instant, affordable, and infinitely scalable.

By partnering with Botomation, you aren't just buying a tool; you are hiring a team of experts who understand how to navigate the complexities of global content distribution. We handle the technical heavy lifting, the linguistic nuance, and the quality assurance, leaving you free to focus on what you do best: creating incredible content. The world is waiting to hear what you have to say. Don't let a language barrier stand in your way any longer.
