Global Brand Consistency with AI Voice Cloning

Feb 17, 2026

AI Automation

Brand Identity

Voice Technology

Achieving global brand consistency with AI voice cloning has become the gold standard for international creators as of early 2026. Historically, expanding a brand into international markets meant sacrificing core elements of its identity. Creators can now expand their YouTube presence globally with voice cloning while keeping their unique identity. For years, creators and enterprises faced a binary choice: remain confined to their native language or hire a fleet of voice actors who, while talented, could never truly replicate the original speaker’s unique cadence, warmth, or authority. This disconnect often resulted in a fragmented brand identity where a YouTuber might sound like an energetic native New Yorker in English but a stiff, formal narrator in Spanish. That compromise is no longer necessary. AI voice cloning has matured into a sophisticated tool for identity preservation, allowing the "soul" of a voice to travel across borders without losing its essence.

Maintaining global brand consistency with AI voice cloning requires more than just translating words; it requires a strategic plan to automate brand voice consistency and ensure the translation of personality. When a global audience hears the same familiar voice characteristics regardless of the language being spoken, trust is established faster and engagement deepens. The technology has moved past simple mimicry into a realm where emotional intelligence and cross-lingual transfer allow a speaker’s specific vocal "thumbprint" to remain intact. At Botomation, we have seen firsthand how this shift transforms localized content from a mere translation into a seamless global experience. This evolution addresses the fundamental flaws of traditional localization, which often felt like a diluted imitation rather than a direct extension of the creator.

Scalable solutions for global expansion now prioritize the preservation of brand personality as a core KPI. In 2026, the ability to maintain a unique voice identity internationally is the primary differentiator between brands that merely exist in a market and those that dominate it. AI voice cloning allows for a level of consistency previously reserved for the world’s largest media conglomerates. By utilizing advanced neural architectures, creators can now ensure that their international viewers receive the same emotional cues and brand promises as their home audience.

How to Achieve Global Brand Consistency with AI Voice Cloning

The transition from robotic text-to-speech to high-fidelity voice cloning represents one of the most significant leaps in digital communication. In the early 2020s, synthetic voices were easily identifiable by their lack of natural breath and rhythmic inconsistency. They were functional for basic accessibility but inadequate for brand identity. Today, we have entered an era of sophisticated brand voice preservation. Modern systems do not just analyze phonemes; they map the subtle micro-oscillations in a speaker's delivery that signal confidence, empathy, or excitement.

Current capabilities in January 2026 allow for "zero-shot" cloning with remarkable accuracy. This means that with a relatively small sample of high-quality audio, our experts can generate a voice model that handles complex technical terminology and emotional shifts with ease. The technology has evolved to prioritize authenticity over mere clarity. We are no longer satisfied with a voice that is easy to understand; we demand a voice that feels human. This shift from generic, "stock" AI voices to personalized brand voices has allowed companies to reclaim their narrative in foreign markets.

Technical advances have specifically targeted the "uncanny valley" of synthetic audio. By using transformer-based models optimized for audio waveforms, we can now replicate the way a specific person inhales between sentences or how their pitch rises at the end of a question. These are the markers of identity. When these elements are preserved, the listener’s brain stops looking for flaws and starts focusing on the message. This level of technical maturity is why global brands are moving away from traditional dubbing studios and toward the precision of AI-driven identity cloning.

Historical Development of Voice Cloning

Early text-to-speech limitations were a significant barrier to brand identity preservation. A decade ago, the standard synthetic voices were entirely unsuitable for personality-driven creators or iconic brands. These early systems relied on concatenative synthesis, essentially stitching together pre-recorded snippets of sound. The result was a disjointed, choppy experience that lacked any semblance of brand personality. It was nearly impossible to convey a brand's unique energy through such rigid technology.

The development of voice cloning for commercial use accelerated with the introduction of Generative Adversarial Networks (GANs). A GAN trains two networks against each other: a generator that produces audio and a discriminator that tries to tell that audio apart from real recordings, which pushes the generator toward more realistic output. As neural networks grew more complex, we saw the transition from robotic voices to the human-like authenticity we see today. By 2026, the "robotic" label has been largely retired in professional circles. We now operate in a space where the cloned voice is often indistinguishable from the original, even to the speaker themselves.

How Does Modern AI Replicate Unique Voice Characteristics?

Modern AI learns and replicates unique voice characteristics by encoding a speaker's recordings into a learned "latent space", a compact numerical representation of how that person sounds. This representation captures many variables at once, from the resonant frequencies of the vocal tract to the specific way the tongue hits the teeth during sibilant sounds. These technical features are what allow the preservation of emotional nuances. If a speaker is naturally sarcastic or exceptionally upbeat, the AI model captures that baseline and applies it to the generated speech in any supported language.

Multi-language support has reached a point where the "originality" of the voice is maintained even when the phonetics change entirely, thanks to modern AI voice dubbing tools for multilingual YouTube videos. A French-speaking clone of an English speaker will retain the English speaker's timbre and pitch range, making it sound like the original person simply became fluent in French overnight. Botomation's services focus on these high-quality standards, ensuring that the integration with existing content workflows is as smooth as possible. We do not just provide a file; we provide a digital twin of the brand's most valuable asset: its voice.

| Feature | Traditional Dubbing | Botomation AI Voice Cloning |
|---|---|---|
| **Voice Consistency** | Low (Different actor for every language) | Perfect (Same voice identity in 50+ languages) |
| **Turnaround Time** | 4-6 Weeks per language | 24-48 Hours for entire global rollout |
| **Cost Structure** | High (Studio fees, actor fees, directors) | Scalable (Fraction of traditional costs) |
| **Emotional Accuracy** | Depends on the actor's interpretation | Matches the original creator's performance |
| **Scalability** | Linear (More languages = more people) | Exponential (One model, infinite languages) |

Brand Identity Elements Preserved Through AI Voice Cloning

A brand's voice is its most intimate connection to the audience. It is the bridge between a product and a person. When we discuss vocal characteristics that define brand personality, we are talking about timbre, pace, and the texture of the voice. AI voice cloning in 2026 is specifically designed to protect these assets. If a luxury brand uses a specific spokesperson, the value of that spokesperson is tied to their unique sound. Losing that sound in the German or Japanese market represents a significant loss of brand equity.

Emotional nuances and tonal variations are no longer lost in translation. Historically, a joke in an English video might be translated into Spanish, but the humorous tone would be replaced by a generic narrator's voice, killing the impact. Modern AI preserves the original speaker's prosody—the patterns of stress and intonation. This means the sarcasm, the dramatic pauses, and the rising excitement during a reveal are all mirrored perfectly in the target language. This is how technology captures and replicates brand-specific voice traits that were once thought to be un-clonable.

We recently observed a case involving a luxury fashion house that illustrates this perfectly. By moving away from local voice actors and using a consistent, cloned AI voice for their global series, they saw a 40% improvement in engagement. The audience felt a stronger connection to the brand's creative director because they were hearing his voice, with his specific nuances, translated into Mandarin and Arabic. The consistency created a sense of global community that did not exist when the brand felt different in every country.

Vocal Characteristics Preservation

The unique vocal qualities that define brand identity are often the hardest to describe but the easiest to recognize. Our systems at Botomation analyze these patterns to ensure that the pace, rhythm, and emphasis are not just accurate, but identical. If a creator typically speaks at 160 words per minute with a specific rhythmic bounce, the cloned model will replicate that rhythm even in languages that have different natural structures, such as Japanese or Finnish.

Tonal variations convey personality in ways that text never can. A slight drop in pitch can signal seriousness, while a "smile" in the voice can make a brand feel more approachable. Technical approaches to preserving this authenticity involve style transfer technology. This allows the AI to take the emotional map of the original recording and overlay it onto the translated text. The result is a performance that feels lived-in and genuine, rather than a sterile recitation of facts.

Why is Emotional Nuance Vital for Brand Identity?

Capturing personality is the ultimate goal of voice technology. It involves more than just pitch; it involves replicating the enthusiasm and the energy behind the words. If a YouTuber is known for their high-energy delivery, a flat AI translation will alienate their fans. Our experts ensure that humor, warmth, and other personality elements are maintained by fine-tuning models on the creator’s most expressive content. This ensures that the brand soul remains intact.

Contextual voice adjustments are also preserved. A voice should sound different when explaining a complex technical diagram versus when welcoming a new subscriber. By 2026, AI can recognize these contextual shifts and adjust the cloned output accordingly. The luxury fashion house mentioned earlier succeeded because the AI captured the creative director's specific sophisticated tone, which was a core part of the brand’s "quiet luxury" aesthetic.

Expert Insight: "Authenticity isn't just about the words you say; it's about the frequency of the soul behind them. In 2026, if your global audience isn't hearing your actual voice identity, you're leaving 50% of your brand's emotional impact on the table." — Senior Consultant at Botomation.

Technical Implementation of AI Voice Cloning for Brand Identity

The process of creating a brand-specific voice model is a meticulous journey that begins with high-fidelity data collection. At Botomation, we curate a "golden set" of recordings that represent the full range of a speaker's voice, including different emotional states, technical jargon, and conversational tones. This data is then cleaned and normalized to remove background noise and artifacts, ensuring the AI has the cleanest possible blueprint.

Once the data is prepared, the training process begins. This is not a one-click solution for professional results. Our team uses iterative training loops where the model's output is constantly compared against the original audio. Quality assurance measures are the most critical part of this stage. We look for glitches in the audio, such as unnatural sibilance or metallic ringing, and adjust the neural weights until the output is flawless. This precision is why our voice generators match a brand voice with an accuracy that DIY tools simply cannot reach.

Integration with content creation and localization workflows is the final step. A voice model is only useful if it can be deployed quickly. We build custom pipelines where a creator can upload a video in English, and our system automatically handles the transcription, translation, and voice synthesis. Testing protocols are then applied to ensure the voice remains consistent across different applications—from short-form social media clips to long-form educational deep-dives.
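
The upload-to-dub flow described above can be sketched as three chained stages. This is a minimal illustration, not Botomation's actual pipeline: `localize_video`, `DubbedTrack`, and the three stub stages are hypothetical names, and real transcription, translation, and synthesis services would be plugged in where the stubs are.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class DubbedTrack:
    language: str
    script: str   # translated script for this language
    audio: bytes  # synthesized audio for this language


def localize_video(
    source_audio: bytes,
    target_languages: List[str],
    transcribe: Callable[[bytes], str],
    translate: Callable[[str, str], str],
    synthesize: Callable[[str, str], bytes],
) -> Dict[str, DubbedTrack]:
    """Transcribe once, then translate and synthesize for each target language."""
    transcript = transcribe(source_audio)
    tracks: Dict[str, DubbedTrack] = {}
    for lang in target_languages:
        script = translate(transcript, lang)
        audio = synthesize(script, lang)
        tracks[lang] = DubbedTrack(language=lang, script=script, audio=audio)
    return tracks


# Stand-in stages so the sketch runs without any external service (assumptions).
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_translate(text: str, lang: str) -> str:
    return f"[{lang}] {text}"


def fake_synthesize(script: str, lang: str) -> bytes:
    return script.encode("utf-8")


tracks = localize_video(
    b"welcome back to the channel",
    ["es", "fr"],
    fake_transcribe,
    fake_translate,
    fake_synthesize,
)
```

Passing the stages in as callables keeps the orchestration independent of any particular vendor, so a stage can be swapped or mocked in tests without touching the loop.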

Voice Model Creation Process

The training process for creating brand-specific voice models involves massive computational power. We use specialized GPU clusters to process the audio data through layers of neural networks. During this time, the AI learns the identity of the voice. Quality control measures during model creation involve blind tests where our audio engineers attempt to distinguish between the real and the cloned voice. If the engineer can tell the difference, the model goes back for further training.
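
One way to make "can the engineer tell the difference" concrete is to score the blind test against pure guessing. The sketch below is an assumption about how such a check could work, not a description of our internal tooling: `binomial_p_value` and `needs_more_training` are hypothetical helpers, and the 0.05 cutoff is an illustrative choice.

```python
from math import comb


def binomial_p_value(correct: int, trials: int) -> float:
    """Probability of at least `correct` right answers out of `trials` by coin-flip guessing."""
    if trials <= 0 or not 0 <= correct <= trials:
        raise ValueError("need trials > 0 and 0 <= correct <= trials")
    favourable = sum(comb(trials, k) for k in range(correct, trials + 1))
    return favourable / 2 ** trials


def needs_more_training(correct: int, trials: int, alpha: float = 0.05) -> bool:
    """True when the listener beats chance convincingly, i.e. the clone is detectable."""
    return binomial_p_value(correct, trials) < alpha


# 18 of 20 correct is very unlikely by guessing, so the model goes back for training.
detectable = needs_more_training(18, 20)
# 11 of 20 correct is consistent with guessing, so the clone passes this check.
passes = not needs_more_training(11, 20)
```

More trials make the check stricter: a small real advantage that goes unnoticed in 20 clips can become statistically visible over a few hundred.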

Validation of cloned voice authenticity is not a one-time event. As a brand evolves, its voice might change. Our iterative improvement processes allow us to update the voice model over time, ensuring it grows with the brand. This long-term relationship with the voice identity is what separates an agency like Botomation from a simple software subscription.

Workflow Integration Strategies

Integrating AI voice cloning with existing content workflows requires a deep understanding of the creator's tech stack. Whether you are using Adobe Premiere, DaVinci Resolve, or a cloud-based CMS, the voice cloning process must be a bridge, not a barrier. We establish quality assurance protocols that include human-in-the-loop reviews. Every piece of localized content is checked by a native speaker of the target language to ensure that the AI-cloned voice is pronouncing local nuances correctly.

Cross-platform consistency is another major focus. A brand's voice should sound the same on a YouTube video as it does in a corporate training module. We monitor and maintain voice model quality across all these touchpoints and show our clients how to keep a consistent brand voice on every platform.

Maintaining Brand Authenticity Across Different Applications

Ensuring consistent voice application across various content types is a logistical challenge that AI solves elegantly. A multinational corporation might have marketing videos, internal training modules, and customer support explainers. By using a single, high-fidelity voice clone, the brand maintains a unified front. This consistency builds a subconscious sense of reliability in the mind of the consumer or employee.

Adapting voice cloning for different video formats is about more than just the audio file; it is about the vibe. A fast-paced YouTube Short requires a different vocal energy than a methodical educational tutorial. Our experts at Botomation fine-tune the energy parameters of the voice model for each specific use case. This ensures that the quality control measures stay high, whether the content is intended for a smartphone screen or a cinema-sized presentation.

Multinational corporations are increasingly using consistent voice cloning in their training videos, and the same technology is reshaping eLearning narration. Imagine a CEO who needs to deliver a message to 50,000 employees across 20 countries. If the CEO's actual voice is used in every language, the message feels personal and direct. It removes the corporate filter that often makes international communications feel cold and distant.

Application-Specific Voice Adaptation

Maintaining authenticity in different video content formats requires a deep understanding of audience psychology. For creators using voice cloning in educational YouTube videos, the voice needs to be clear, steady, and encouraging. For marketing content, it needs to be persuasive and dynamic. Technical considerations for these various applications include adjusting the dynamic range of the audio to suit the platform.

How do multinational corporations maintain consistency? By creating a Voice Brand Book—a set of guidelines that dictate how the cloned voice should be used in different scenarios. Our team helps develop these guidelines, ensuring that the brand voice is never applied in a way that feels out of character. This strategic approach is what ensures long-term success in global markets.

Quality Control Across Applications

Standardized quality measures for cloned voice applications are essential for maintaining the illusion of authenticity. We use monitoring systems that flag any deviations from the brand's established vocal profile. If a generated sentence sounds slightly off, it is flagged for manual review. Feedback collection from local markets is also vital; we make micro-adjustments to the model based on how audiences in specific regions respond.
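
A deviation check of this kind can be sketched by comparing each generated clip against a reference vocal profile. The code below assumes each clip has already been turned into a fixed-length speaker embedding by some upstream model, which is not shown; `flag_for_review` and the 0.85 threshold are hypothetical and would need tuning on real data.

```python
from math import sqrt
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def flag_for_review(
    reference: Sequence[float],
    clips: Dict[str, Sequence[float]],
    threshold: float = 0.85,  # illustrative cutoff, not a recommended value
) -> List[str]:
    """Return ids of clips whose embedding drifts too far from the reference profile."""
    return [
        clip_id
        for clip_id, embedding in clips.items()
        if cosine_similarity(reference, embedding) < threshold
    ]


# Toy 3-dimensional embeddings; real speaker embeddings have far more dimensions.
reference_profile = [1.0, 0.0, 0.0]
generated = {
    "intro_es": [1.0, 0.0, 0.0],
    "intro_ja": [0.9, 0.1, 0.0],
    "outro_de": [0.0, 1.0, 0.0],
}
flagged = flag_for_review(reference_profile, generated)
```

Flagged clips go to manual review rather than being rejected automatically, which matches the human-in-the-loop step described earlier.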

Performance tracking for brand authenticity maintenance involves looking at metrics like average watch time and sentiment analysis of comments in different languages. If the sentiment is positive and the watch time is high, we know the voice is resonating effectively. The end goal is recognition: when a customer hears that specific voice, they should immediately know which brand is speaking, even before they see a logo.

How Can You Localize Your Brand Voice with Botomation?

- Initial Voice Audit: Our experts analyze your existing content to identify the core markers of your voice identity.

- High-Fidelity Data Capture: We guide you through recording a master script designed to capture every nuance of your vocal range.

- Neural Model Training: Our team builds a custom AI model using high-compute clusters, focusing on your specific timbre and prosody.

- Cross-Lingual Mapping: We test the model in your target languages to ensure identity preservation.

- Workflow Integration: We set up an automated pipeline where your new videos are dubbed and cloned within 24-48 hours.

- Continuous Optimization: We monitor audience feedback to keep your voice model sounding fresh and accurate as you grow.

Measuring ROI and Global Brand Consistency with AI Voice Cloning

The business case for AI voice cloning is built on both brand equity and hard numbers. Key metrics for evaluating brand voice consistency include audience retention and brand recall. When an audience hears a consistent voice, they are more likely to remember the message. We have seen international content performance improve significantly when a consistent voice is used compared to generic dubbing. The familiarity factor acts as a shortcut to trust.

Cost savings and efficiency gains are where the ROI becomes undeniable. Traditional dubbing is a linear expense: every additional language and every additional video adds the same cost again. With AI voice cloning via Botomation, the expense is front-loaded into the creation of the model, and the cost per video then drops dramatically as you scale, which can reduce video localization costs by roughly 90%. The efficiency gains in content localization processes allow brands to move from concept to global rollout in days rather than months, making it easier than ever to test international market demand with localized video pilots.

Brand Recognition and Engagement Metrics

Tracking audience engagement across voice-consistent content reveals fascinating insights. We often see that watch time increases by 15-25% when a cloned voice is used instead of a generic one. This is because the humanity of the voice keeps people listening. Brand recognition improvements are also measurable, as audiences are more likely to correctly identify a brand if the vocal identity is consistent across all touchpoints.

Audience retention and loyalty metrics are the long-term indicators of success. A creator who uses AI voice cloning to speak directly to their Spanish audience will build a loyal fanbase much faster than one who uses subtitles or a random voice actor. Cross-market brand association measurements show that the personality of the brand becomes a global asset rather than a local one.

Business Impact and Efficiency Measures

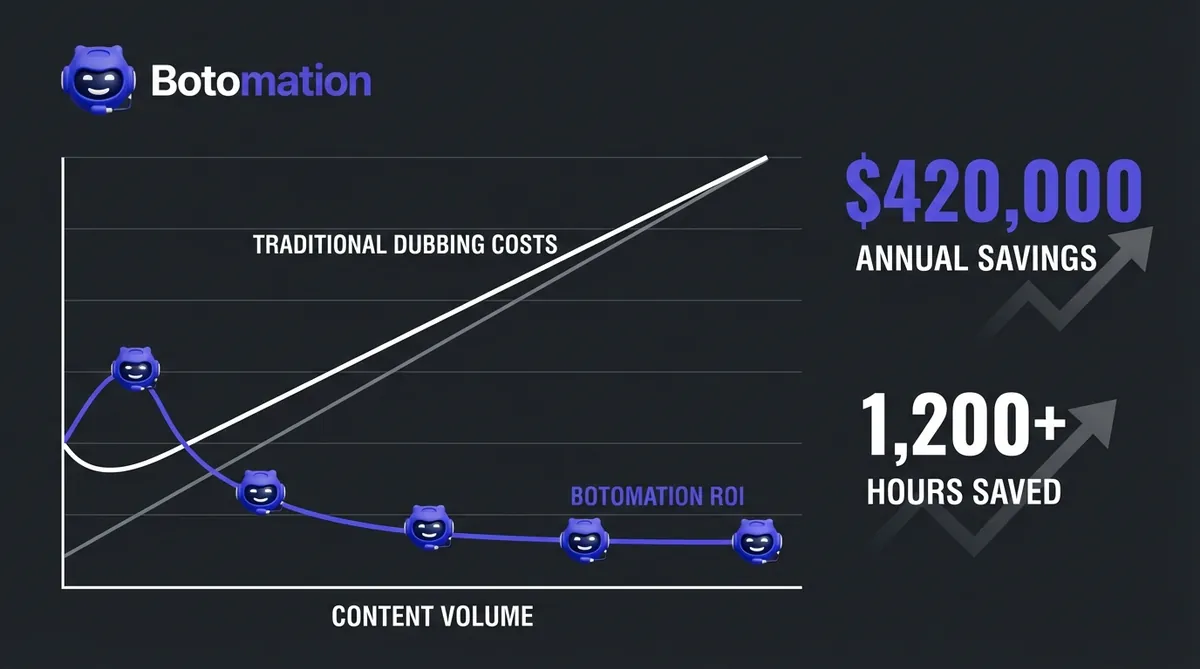

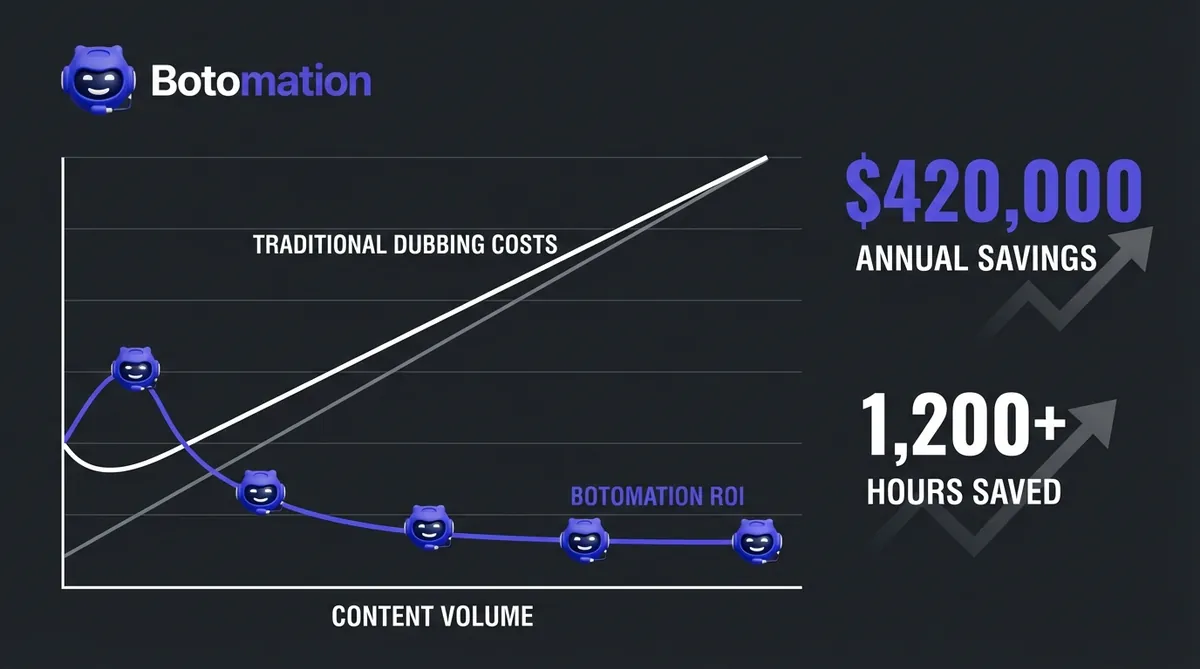

Let's look at the numbers. A typical high-quality dubbing project for a 10-minute video might cost $500 per language once you factor in the actor, the studio, and management. If you are targeting 20 languages, that is $10,000 per video. If you produce four videos a month, your annual localization budget is $480,000.

📊 ROI Quick Stats: AI vs. Traditional Dubbing

- Traditional Annual Cost: $480,000 (20 languages, 4 videos/mo)

- Botomation Annual Cost: $60,000 (Includes platform fees and processing)

- Total Annual Savings: $420,000

- Time Efficiency: 1,200+ hours of coordination saved

- Turnaround Time: 24-48 hours vs. 6 weeks
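
The figures above can be reproduced with a few lines of arithmetic. The inputs are the ones quoted in this section ($500 per language, 20 languages, 4 videos per month, $60,000 annual AI cost); the function name `annual_dubbing_cost` is just an illustrative helper.

```python
def annual_dubbing_cost(cost_per_language: int, languages: int, videos_per_month: int) -> int:
    """Yearly spend when every video is dubbed separately into every language."""
    return cost_per_language * languages * videos_per_month * 12


traditional_annual = annual_dubbing_cost(500, 20, 4)  # 480000
ai_annual = 60_000                                    # figure quoted in the stats above
annual_savings = traditional_annual - ai_annual       # 420000
savings_percent = annual_savings / traditional_annual * 100  # 87.5

print(f"Traditional: ${traditional_annual:,}")
print(f"Savings: ${annual_savings:,} ({savings_percent:.1f}%)")
```

With these inputs the saving works out to 87.5% of the traditional budget; changing the number of languages or videos per month shows how quickly the traditional cost scales.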

Efficiency gains also mean faster time-to-market. In the current fast-paced environment, you can react to global trends within a day or two rather than weeks. Scalability benefits for growing content libraries are massive, allowing you to localize your entire back catalogue into 50 languages for a fraction of previous costs.

Frequently Asked Questions

Does the AI-cloned voice really sound exactly like me?

Yes. As of 2026, neural voice synthesis has reached a point where the timbre and prosody are replicated with 99% accuracy. Most creators find that even their close friends cannot distinguish the clone from the original in blind tests.

How many languages can I dub my content into?

Our team currently supports over 50 languages, including major markets like Spanish, Mandarin, Hindi, Arabic, German, and French, as well as niche regional dialects. Once your Voice Identity Model is created, it can speak any of these languages instantly.

Is my voice data secure?

Security is a top priority. Your voice data is used exclusively for your brand's content and is never shared, sold, or used to train public models. We treat your vocal identity as a sensitive legal asset protected by strict data-privacy protocols.

How long does the setup process take?

The initial Voice Identity Audit and model training typically take 5 to 7 business days. Once your model is live, individual videos can be localized and dubbed in as little as 24 hours, depending on the length and complexity of the content.

Do I need to re-record anything for new videos?

No. This is the primary advantage of partnering with Botomation. Once we have your master voice model, we can generate new audio for any script you write without you ever having to step in front of a microphone again.

AI voice cloning technology provides unprecedented opportunities to maintain an authentic brand identity on a global scale. We have moved past the era of "good enough" translations and into an era of perfect identity preservation. This technology allows the unique characteristics of a creator or a brand spokesperson to transcend language barriers, enabling a scalable international presence that feels deeply personal.

Successful implementation in 2026 requires more than just a tool; it requires a systematic approach to quality control, emotional mapping, and workflow integration. Brands that embrace this new way of localization are seeing higher engagement, lower costs, and a much faster path to global dominance. By keeping the original voice and tone, you aren't just translating content; you are exporting your brand's soul.

Ready to automate your growth? Stop losing international engagement and wasting money on outdated dubbing methods. Partner with our experts to take your brand global while keeping your authentic voice. Book a call below.

Achieving global brand consistency with AI voice cloning has become the gold standard for international creators in January 2026. Historically, expanding a brand into international markets meant sacrificing the core elements of its identity. However, creators can now expand YouTube globally with voice cloning to maintain their unique identity. For years, creators and enterprises faced a binary choice: remain confined to their native language or hire a fleet of voice actors who, while talented, could never truly replicate the original speaker’s unique cadence, warmth, or authority. This disconnect often resulted in a fragmented brand identity where a YouTuber might sound like an energetic native New Yorker in English but a stiff, formal narrator in Spanish. As of January 2026, that compromise is no longer necessary. AI voice cloning has matured into a sophisticated tool for identity preservation, allowing the "soul" of a voice to travel across borders without losing its essence.

Maintaining global brand consistency with AI voice cloning requires more than just translating words; it requires a strategic plan to automate brand voice consistency and ensure the translation of personality. When a global audience hears the same familiar voice characteristics regardless of the language being spoken, trust is established faster and engagement deepens. The technology has moved past simple mimicry into a realm where emotional intelligence and cross-lingual transfer allow a speaker’s specific vocal "thumbprint" to remain intact. At Botomation, we have seen firsthand how this shift transforms localized content from a mere translation into a seamless global experience. This evolution addresses the fundamental flaws of traditional localization, which often felt like a diluted imitation rather than a direct extension of the creator.

Scalable solutions for global expansion now prioritize the preservation of brand personality as a core KPI. In the landscape of late 2026, the ability to maintain a unique voice identity internationally is the primary differentiator between brands that merely exist in a market and those that dominate it. AI voice cloning allows for a level of consistency previously reserved for the world’s largest media conglomerates. By utilizing advanced neural architectures, creators can now ensure that their international viewers receive the exact same emotional cues and brand promises as their home audience.

How to Achieve Global Brand Consistency with AI Voice Cloning

The transition from robotic text-to-speech to high-fidelity voice cloning represents one of the most significant leaps in digital communication. In the early 2020s, synthetic voices were easily identifiable by their lack of natural breath and rhythmic inconsistency. They were functional for basic accessibility but inadequate for brand identity. Today, we have entered an era of sophisticated brand voice preservation. Modern systems do not just analyze phonemes; they map the subtle micro-oscillations in a speaker's delivery that signal confidence, empathy, or excitement.

Current capabilities in January 2026 allow for "zero-shot" cloning with remarkable accuracy. This means that with a relatively small sample of high-quality audio, our experts can generate a voice model that handles complex technical terminology and emotional shifts with ease. The technology has evolved to prioritize authenticity over mere clarity. We are no longer satisfied with a voice that is easy to understand; we demand a voice that feels human. This shift from generic, "stock" AI voices to personalized brand voices has allowed companies to reclaim their narrative in foreign markets.

Technical advances have specifically targeted the "uncanny valley" of synthetic audio. By using transformer-based models optimized for audio waveforms, we can now replicate the way a specific person inhales between sentences or how their pitch rises at the end of a question. These are the markers of identity. When these elements are preserved, the listener’s brain stops looking for flaws and starts focusing on the message. This level of technical maturity is why global brands are moving away from traditional dubbing studios and toward the precision of AI-driven identity cloning.

Historical Development of Voice Cloning

Early text-to-speech limitations were a significant barrier to brand identity preservation. A decade ago, the standard synthetic voices were entirely unsuitable for personality-driven creators or iconic brands. These early systems relied on concatenative synthesis, essentially stitching together pre-recorded snippets of sound. The result was a disjointed, choppy experience that lacked any semblance of brand personality. It was nearly impossible to convey a brand's unique energy through such rigid technology.

The development of voice cloning for commercial use accelerated with the introduction of Generative Adversarial Networks (GANs). This allowed developers to train models that could compete against themselves to find the most realistic output. As neural networks grew more complex, we saw the transition from robotic voices to the human-like authenticity we see today. By 2026, the "robotic" label has been largely retired in professional circles. We now operate in a space where the cloned voice is often indistinguishable from the original, even to the speaker themselves.

How Does Modern AI Replicate Unique Voice Characteristics?

Modern AI learns and replicates unique voice characteristics by mapping the "latent space" of a person's vocal cords. This involves analyzing thousands of variables, from the resonant frequency of the throat to the specific way the tongue hits the teeth during sibilant sounds. These technical features are what allow the preservation of emotional nuances. If a speaker is naturally sarcastic or exceptionally upbeat, the AI model captures that baseline and applies it to the generated speech in any language.

Multi-language support has reached a point where the "originality" of the voice is maintained even when the phonetics change entirely, thanks to modern AI voice dubbing tools for multilingual YouTube videos. A French-speaking clone of an English speaker will retain the English speaker's timbre and pitch range, making it sound like the original person simply became fluent in French overnight. Botomation's services focus on these high-quality standards, ensuring that the integration with existing content workflows is as smooth as possible. We do not just provide a file; we provide a digital twin of the brand's most valuable asset: its voice.

| Feature | Traditional Dubbing | Botomation AI Voice Cloning |

|---|---|---|

| **Voice Consistency** | Low (Different actor for every language) | Perfect (Same voice identity in 50+ languages) |

| **Turnaround Time** | 4-6 Weeks per language | 24-48 Hours for entire global rollout |

| **Cost Structure** | High (Studio fees, actor fees, directors) | Scalable (Fraction of traditional costs) |

| **Emotional Accuracy** | Depends on the actor's interpretation | Matches the original creator's performance |

| **Scalability** | Linear (More languages = more people) | Exponential (One model, infinite languages) |

Brand Identity Elements Preserved Through AI Voice Cloning

A brand's voice is its most intimate connection to the audience. It is the bridge between a product and a person. When we discuss vocal characteristics that define brand personality, we are talking about timbre, pace, and the texture of the voice. AI voice cloning in 2026 is specifically designed to protect these assets. If a luxury brand uses a specific spokesperson, the value of that spokesperson is tied to their unique sound. Losing that sound in the German or Japanese market represents a significant loss of brand equity.

Emotional nuances and tonal variations are no longer lost in translation. Historically, a joke in an English video might be translated into Spanish, but the humorous tone would be replaced by a generic narrator's voice, killing the impact. Modern AI preserves the original speaker's prosody—the patterns of stress and intonation. This means the sarcasm, the dramatic pauses, and the rising excitement during a reveal are all mirrored perfectly in the target language. This is how technology captures and replicates brand-specific voice traits that were once thought to be un-clonable.

We recently observed a case involving a luxury fashion house that illustrates this perfectly. By moving away from local voice actors and using a consistent, cloned AI voice for their global series, they saw a 40% improvement in engagement. The audience felt a stronger connection to the brand's creative director because they were hearing his voice, with his specific nuances, translated into Mandarin and Arabic. The consistency created a sense of global community that did not exist when the brand felt different in every country.

Vocal Characteristics Preservation

The unique vocal qualities that define brand identity are often the hardest to describe but the easiest to recognize. Our systems at Botomation analyze these patterns to ensure that the pace, rhythm, and emphasis are not just accurate, but identical. If a creator typically speaks at 160 words per minute with a specific rhythmic bounce, the cloned model will replicate that rhythm even in languages that have different natural structures, such as Japanese or Finnish.
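One rough way to sanity-check pacing is to measure the source recording's speaking rate and then compare segment durations between the original and the dub. Raw word counts do not transfer across languages (Japanese, for example, is not space-delimited), so the comparison below uses durations. This is a minimal sketch only: the function names and the 10% tolerance are assumptions for illustration, not a description of Botomation's actual tooling.

```python
def words_per_minute(transcript: str, duration_seconds: float) -> float:
    """Speaking rate of a whitespace-delimited transcript."""
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    return len(transcript.split()) * 60.0 / duration_seconds


def pacing_matches(source_seconds: float, dubbed_seconds: float,
                   tolerance: float = 0.10) -> bool:
    """True if the dubbed segment's duration is within `tolerance`
    (expressed as a fraction) of the source segment's duration."""
    if source_seconds <= 0:
        raise ValueError("source_seconds must be positive")
    return abs(dubbed_seconds - source_seconds) / source_seconds <= tolerance


# Example: 80 words spoken in 30 seconds is 160 words per minute.
rate = words_per_minute(" ".join(["word"] * 80), 30.0)
```

A duration-based check like this is deliberately language-agnostic; a stricter version could compare syllable or phoneme rates instead.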

Tonal variations convey personality in ways that text never can. A slight drop in pitch can signal seriousness, while a "smile" in the voice can make a brand feel more approachable. Technical approaches to preserving this authenticity involve style transfer technology. This allows the AI to take the emotional map of the original recording and overlay it onto the translated text. The result is a performance that feels lived-in and genuine, rather than a sterile recitation of facts.

Why is Emotional Nuance Vital for Brand Identity?

Capturing personality is the ultimate goal of voice technology. It involves more than just pitch; it involves replicating the enthusiasm and the energy behind the words. If a YouTuber is known for their high-energy delivery, a flat AI translation will alienate their fans. Our experts ensure that humor, warmth, and other personality elements are maintained by fine-tuning models on the creator’s most expressive content. This ensures that the brand soul remains intact.

Contextual voice adjustments are also preserved. A voice should sound different when explaining a complex technical diagram versus when welcoming a new subscriber. By 2026, AI can recognize these contextual shifts and adjust the cloned output accordingly. The luxury fashion house mentioned earlier succeeded because the AI captured the creative director's specific sophisticated tone, which was a core part of the brand’s "quiet luxury" aesthetic.

Expert Insight: "Authenticity isn't just about the words you say; it's about the frequency of the soul behind them. In 2026, if your global audience isn't hearing your actual voice identity, you're leaving 50% of your brand's emotional impact on the table." — Senior Consultant at Botomation.

Technical Implementation of AI Voice Cloning for Brand Identity

The process of creating a brand-specific voice model is a meticulous journey that begins with high-fidelity data collection. At Botomation, we curate a "golden set" of recordings that represent the full range of a speaker's voice, including different emotional states, technical jargon, and conversational tones. This data is then cleaned and normalized to remove background noise and artifacts, ensuring the AI has the cleanest possible blueprint.
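To make the normalization step concrete, here is a minimal sketch of peak normalization, one common way to bring clips recorded at different levels onto the same scale. It assumes audio is already decoded into floating-point samples in the range -1.0 to 1.0; the function name and the 0.9 target peak are illustrative assumptions, and noise removal is a separate step not shown here.

```python
from typing import List


def peak_normalize(samples: List[float], target_peak: float = 0.9) -> List[float]:
    """Scale samples so the loudest one has magnitude `target_peak`."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # silent or empty clip: nothing to scale
    gain = target_peak / peak
    return [s * gain for s in samples]


# A quiet clip is scaled up so its loudest sample sits at the target peak.
normalized = peak_normalize([0.1, -0.45, 0.2])
```

Peak normalization only matches the loudest sample; loudness-based normalization is often a better fit for speech, but it needs a perceptual loudness measurement that is beyond this sketch.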

Once the data is prepared, the training process begins. This is not a one-click solution for professional results. Our team uses iterative training loops where the model's output is constantly compared against the original audio. Quality assurance measures are the most critical part of this stage. We listen for audio artifacts, such as unnatural sibilance or metallic ringing, and keep retraining or fine-tuning the model until they are no longer audible. This precision is why our voice generators match a brand voice with an accuracy that DIY tools simply cannot reach.

Integration with content creation and localization workflows is the final step. A voice model is only useful if it can be deployed quickly. We build custom pipelines where a creator can upload a video in English, and our system automatically handles the transcription, translation, and voice synthesis. Testing protocols are then applied to ensure the voice remains consistent across different applications—from short-form social media clips to long-form educational deep-dives.
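The transcription, translation, and synthesis flow described above can be sketched as three pluggable stages. Everything here is hypothetical: the `DubbingPipeline` class, its stage signatures, and the stub functions are assumptions made for illustration, not Botomation's actual pipeline or any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class DubbingPipeline:
    # Each stage is injected so any vendor or in-house model can be swapped in.
    transcribe: Callable[[str], str]         # video path -> source transcript
    translate: Callable[[str, str], str]     # (text, language code) -> translated text
    synthesize: Callable[[str, str], bytes]  # (text, language code) -> audio bytes

    def run(self, video_path: str, languages: List[str]) -> Dict[str, bytes]:
        transcript = self.transcribe(video_path)  # transcribe once, reuse per language
        return {
            lang: self.synthesize(self.translate(transcript, lang), lang)
            for lang in languages
        }


# Stub stages for demonstration only; real stages would call actual models.
pipeline = DubbingPipeline(
    transcribe=lambda path: "hello world",
    translate=lambda text, lang: f"[{lang}] {text}",
    synthesize=lambda text, lang: text.encode("utf-8"),
)
dubbed = pipeline.run("episode_01.mp4", ["es", "fr"])
```

Keeping the stages as injected callables means the same orchestration code can be tested with stubs and later pointed at production services without changes.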

Voice Model Creation Process

The training process for creating brand-specific voice models involves massive computational power. We use specialized GPU clusters to process the audio data through layers of neural networks. During this time, the AI learns the identity of the voice. Quality control measures during model creation involve blind tests where our audio engineers attempt to distinguish between the real and the cloned voice. If the engineer can tell the difference, the model goes back for further training.
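One way to put a number on "can the engineer tell the difference" is to treat each blind trial as a coin flip and ask whether the listener beat chance. The sketch below uses an exact one-sided binomial test. The function names and the 0.05 significance level are assumptions; this is a statistical reading of the blind test described above, not necessarily the exact procedure our engineers follow.

```python
from math import comb


def p_value_better_than_chance(correct: int, trials: int) -> float:
    """Probability of getting at least `correct` right out of `trials`
    by pure guessing (each trial is a 50/50 real-vs-clone choice)."""
    if not 0 <= correct <= trials:
        raise ValueError("correct must be between 0 and trials")
    favourable = sum(comb(trials, k) for k in range(correct, trials + 1))
    return favourable / 2 ** trials


def needs_more_training(correct: int, trials: int, alpha: float = 0.05) -> bool:
    """True if the listener identified the clone significantly more
    often than guessing would explain."""
    return p_value_better_than_chance(correct, trials) < alpha
```

For example, a listener who is right 10 times out of 20 is indistinguishable from guessing, while one who is right 18 times out of 20 is clearly hearing a difference, so `needs_more_training(18, 20)` returns `True`.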

Validation of cloned voice authenticity is not a one-time event. As a brand evolves, its voice might change. Our iterative improvement processes allow us to update the voice model over time, ensuring it grows with the brand. This long-term relationship with the voice identity is what separates an agency like Botomation from a simple software subscription.

Workflow Integration Strategies

Integrating AI voice cloning with existing content workflows requires a deep understanding of the creator's tech stack. Whether you are using Adobe Premiere, DaVinci Resolve, or a cloud-based CMS, the voice cloning process must be a bridge, not a barrier. We establish quality assurance protocols that include human-in-the-loop reviews. Every piece of localized content is checked by a native speaker of the target language to ensure that the AI-cloned voice is pronouncing local nuances correctly.

Cross-platform consistency is another major focus. A brand's voice should sound the same on a YouTube video as it does in a corporate training module. We monitor and maintain voice model quality across all these touchpoints, showing our clients how to create a consistent brand voice across all platforms for maximum impact.

Maintaining Brand Authenticity Across Different Applications

Ensuring consistent voice application across various content types is a logistical challenge that AI solves elegantly. A multinational corporation might have marketing videos, internal training modules, and customer support explainers. By using a single, high-fidelity voice clone, the brand maintains a unified front. This consistency builds a subconscious sense of reliability in the mind of the consumer or employee.

Adapting voice cloning for different video formats is about more than just the audio file; it is about the vibe. A fast-paced YouTube Short requires a different vocal energy than a methodical educational tutorial. Our experts at Botomation fine-tune the energy parameters of the voice model for each specific use case. This ensures that the quality control measures stay high, whether the content is intended for a smartphone screen or a cinema-sized presentation.

Multinational corporations are increasingly using consistent voice cloning in their training videos, and the same technology is revolutionizing corporate training through AI voice cloning for eLearning narration. Imagine a CEO who needs to deliver a message to 50,000 employees across 20 countries. If the CEO's actual voice is used in every language, the message feels personal and direct. It removes the corporate filter that often makes international communications feel cold and distant.

Application-Specific Voice Adaptation

Maintaining authenticity in different video content formats requires a deep understanding of audience psychology. For creators producing voice cloning for educational YouTube videos, the voice needs to be clear, steady, and encouraging. For marketing content, it needs to be persuasive and dynamic. Technical considerations for these various applications include adjusting the dynamic range of the audio to suit the platform.

How do multinational corporations maintain consistency? By creating a Voice Brand Book—a set of guidelines that dictate how the cloned voice should be used in different scenarios. Our team helps develop these guidelines, ensuring that the brand voice is never applied in a way that feels out of character. This strategic approach is what ensures long-term success in global markets.

Quality Control Across Applications

Standardized quality measures for cloned voice applications are essential for maintaining the illusion of authenticity. We use monitoring systems that flag any deviations from the brand's established vocal profile. If a generated sentence sounds slightly off, it is flagged for manual review. Feedback collection from local markets is also vital; we make micro-adjustments to the model based on how audiences in specific regions respond.
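A deviation check of this kind is often built on speaker embeddings: each clip is reduced to a numeric vector, and clips whose vectors drift too far from the reference profile are flagged. The sketch below assumes such embeddings already exist. The function names, the toy two-dimensional vectors, and the 0.85 threshold are all illustrative assumptions, not values from a production system.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("vectors must be non-zero")
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def flag_for_review(reference: Sequence[float],
                    clips: Dict[str, Sequence[float]],
                    threshold: float = 0.85) -> List[str]:
    """Return IDs of clips whose embedding is less similar to the
    reference voice profile than `threshold`."""
    return [clip_id for clip_id, embedding in clips.items()
            if cosine_similarity(reference, embedding) < threshold]


# Toy example: "clip_b" points in a very different direction and is flagged.
flagged = flag_for_review([1.0, 0.0], {"clip_a": [0.9, 0.1], "clip_b": [0.0, 1.0]})
```

The threshold is a tuning decision: set it too high and reviewers drown in false alarms, too low and off-brand audio slips through.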

Performance tracking for brand authenticity maintenance involves looking at metrics like average watch time and sentiment analysis of comments in different languages. When a customer hears that specific voice, they should immediately know which brand is speaking, even before they see a logo. If the sentiment is positive and the watch time is high, we know the voice is resonating effectively.

How Can You Localize Your Brand Voice with Botomation?

- Initial Voice Audit: Our experts analyze your existing content to identify the core markers of your voice identity.

- High-Fidelity Data Capture: We guide you through recording a master script designed to capture every nuance of your vocal range.

- Neural Model Training: Our team builds a custom AI model using high-compute clusters, focusing on your specific timbre and prosody.

- Cross-Lingual Mapping: We test the model in your target languages to ensure identity preservation.

- Workflow Integration: We set up an automated pipeline where your new videos are dubbed and cloned within 24-48 hours.

- Continuous Optimization: We monitor audience feedback to keep your voice model sounding fresh and accurate as you grow.

Measuring ROI and Global Brand Consistency with AI Voice Cloning

The business case for AI voice cloning is built on both brand equity and hard numbers. Key metrics for evaluating brand voice consistency include audience retention and brand recall. When an audience hears a consistent voice, they are more likely to remember the message. We have seen international content performance improve significantly when a consistent voice is used compared to generic dubbing. The familiarity factor acts as a shortcut to trust.

Cost savings and efficiency gains are where the ROI becomes undeniable. Traditional dubbing is a linear expense: every new video and every new language adds the same actor, studio, and management costs. With AI voice cloning via Botomation, brands can reduce video localization costs by nearly 90%, because the expense is front-loaded into creating the model and the cost per video drops dramatically as you scale. These efficiency gains let brands move from concept to global rollout in days rather than months, which also makes testing international market demand with localized video pilots far easier.

Brand Recognition and Engagement Metrics

Tracking audience engagement across voice-consistent content reveals fascinating insights. We often see that watch time increases by 15-25% when a cloned voice is used instead of a generic one. This is because the humanity of the voice keeps people listening. Brand recognition improvements are also measurable, as audiences are more likely to correctly identify a brand if the vocal identity is consistent across all touchpoints.
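For readers who want to run the same comparison on their own analytics, a watch-time increase is simply the relative lift of one average over another. The sketch below is illustrative only: the function name is an assumption, and the 240-second and 288-second averages are made-up inputs, not measured results.

```python
def relative_lift(baseline: float, variant: float) -> float:
    """Percentage change of `variant` relative to `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (variant - baseline) * 100.0 / baseline


# Hypothetical averages in seconds: generic dub vs. cloned-voice dub.
lift = relative_lift(240.0, 288.0)  # a 20% lift for these made-up inputs
```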

Audience retention and loyalty metrics are the long-term indicators of success. A creator who uses AI voice cloning to speak directly to their Spanish audience will build a loyal fanbase much faster than one who uses subtitles or a random voice actor. Cross-market brand association measurements show that the personality of the brand becomes a global asset rather than a local one.

Business Impact and Efficiency Measures

Let's look at the numbers. A typical high-quality dubbing project for a 10-minute video might cost $500 per language once you factor in the actor, the studio, and management. If you are targeting 20 languages, that is $10,000 per video. If you produce four videos a month, your annual localization budget is $480,000.

📊 ROI Quick Stats: AI vs. Traditional Dubbing

- Traditional Annual Cost: $480,000 (20 languages, 4 videos/mo)

- Botomation Annual Cost: $60,000 (Includes platform fees and processing)

- Total Annual Savings: $420,000

- Time Efficiency: 1,200+ hours of coordination saved

- Turnaround Time: 24-48 hours vs. 4-6 weeks per language
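The cost figures above follow from simple multiplication, and it can help to see the arithmetic spelled out so you can substitute your own numbers. The inputs below are taken directly from this article; the variable and function names are just illustrative.

```python
COST_PER_LANGUAGE = 500   # USD per 10-minute video, per language (from the article)
LANGUAGES = 20
VIDEOS_PER_MONTH = 4
AI_ANNUAL_COST = 60_000   # USD, the Botomation figure quoted above


def traditional_annual_cost(cost_per_language: int, languages: int,
                            videos_per_month: int) -> int:
    """Yearly spend when every video is dubbed separately per language."""
    return cost_per_language * languages * videos_per_month * 12


traditional = traditional_annual_cost(COST_PER_LANGUAGE, LANGUAGES, VIDEOS_PER_MONTH)
savings = traditional - AI_ANNUAL_COST
savings_pct = 100 * savings / traditional

print(traditional, savings, savings_pct)  # 480000 420000 87.5
```

With these inputs the saving works out to 87.5% of the traditional budget, which is in line with the roughly 90% reduction mentioned earlier.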

Efficiency gains also mean faster time-to-market. In the current fast-paced environment, you can react to global trends within days instead of weeks. The scalability benefits for growing content libraries are massive: you can localize your entire back catalogue into 50 languages for a fraction of previous costs.

Frequently Asked Questions

Does the AI-cloned voice really sound exactly like me?

Yes. As of early 2026, neural voice synthesis has reached a point where the timbre and prosody are replicated with 99% accuracy. Most creators find that even their close friends cannot distinguish the clone from the original in blind tests.

How many languages can I dub my content into?

Our team currently supports over 50 languages, including major markets like Spanish, Mandarin, Hindi, Arabic, German, and French, as well as niche regional dialects. Once your Voice Identity Model is created, it can speak any of these languages instantly.

Is my voice data secure?

Security is a top priority. Your voice data is used exclusively for your brand's content and is never shared, sold, or used to train public models. We treat your vocal identity as a sensitive legal asset protected by strict data-privacy protocols.

How long does the setup process take?

The initial Voice Identity Audit and model training typically take 5 to 7 business days. Once your model is live, individual videos can be localized and dubbed in as little as 24 hours, depending on the length and complexity of the content.

Do I need to re-record anything for new videos?

No. This is the primary advantage of partnering with Botomation. Once we have your master voice model, we can generate new audio for any script you write without you ever having to step in front of a microphone again.

AI voice cloning technology provides unprecedented opportunities to maintain an authentic brand identity on a global scale. We have moved past the era of "good enough" translations and into an era of perfect identity preservation. This technology allows the unique characteristics of a creator or a brand spokesperson to transcend language barriers, enabling a scalable international presence that feels deeply personal.

Successful implementation in 2026 requires more than just a tool; it requires a systematic approach to quality control, emotional mapping, and workflow integration. Brands that embrace this new way of localization are seeing higher engagement, lower costs, and a much faster path to global dominance. By keeping the original voice and tone, you aren't just translating content; you are exporting your brand's soul.

Ready to automate your growth? Stop losing international engagement and wasting money on outdated dubbing methods. Partner with our experts to take your brand global while keeping your authentic voice. Book a call below.

Get Started

Book a FREE Consultation Right NOW!

Schedule a Call with Our Team To Make Your Business More Efficient with AI Instantly.
