Web Scraping for Industry Trend Analysis - 2026 Strategy

Feb 17, 2026

AI Automation

Market Research

Data Scraping

B2B Sales

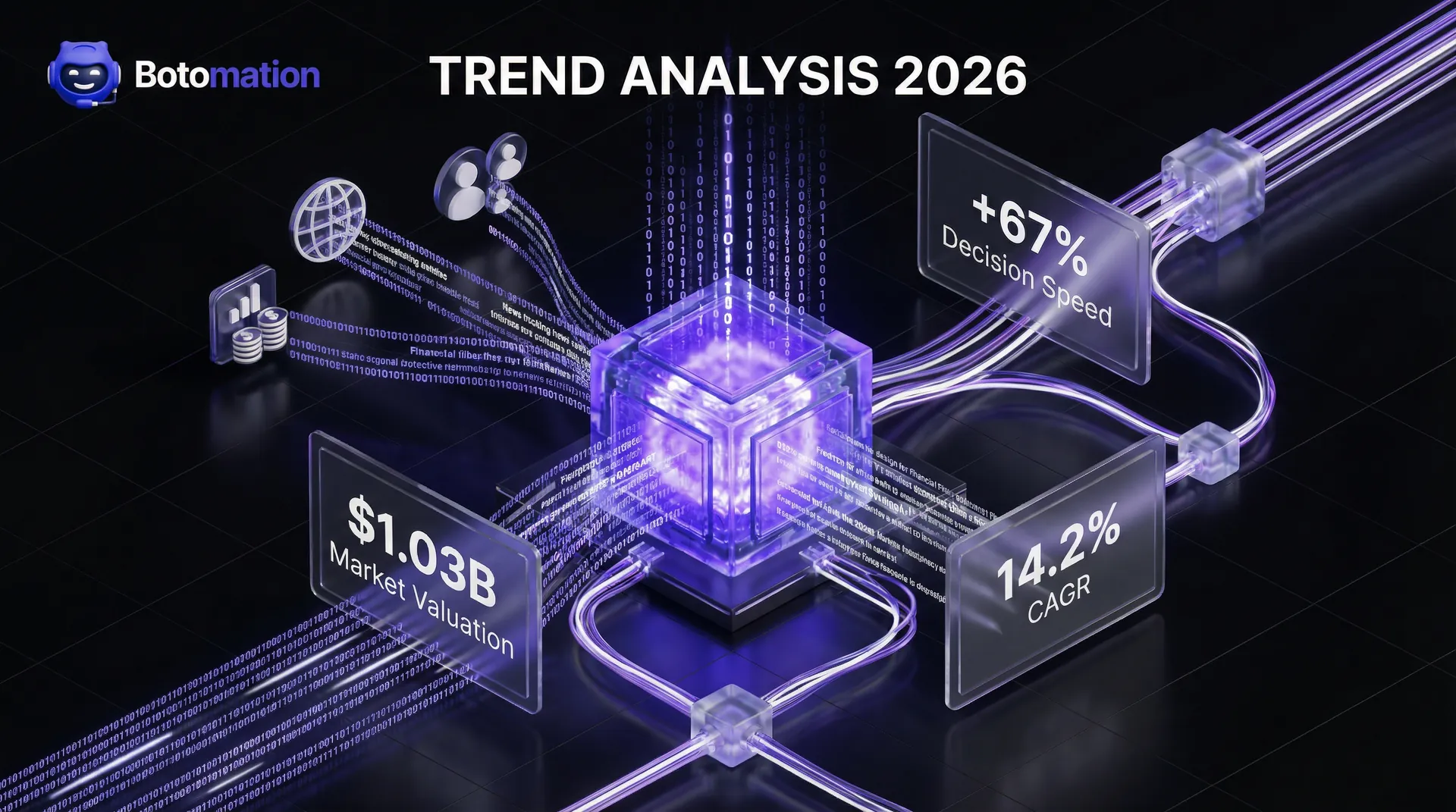

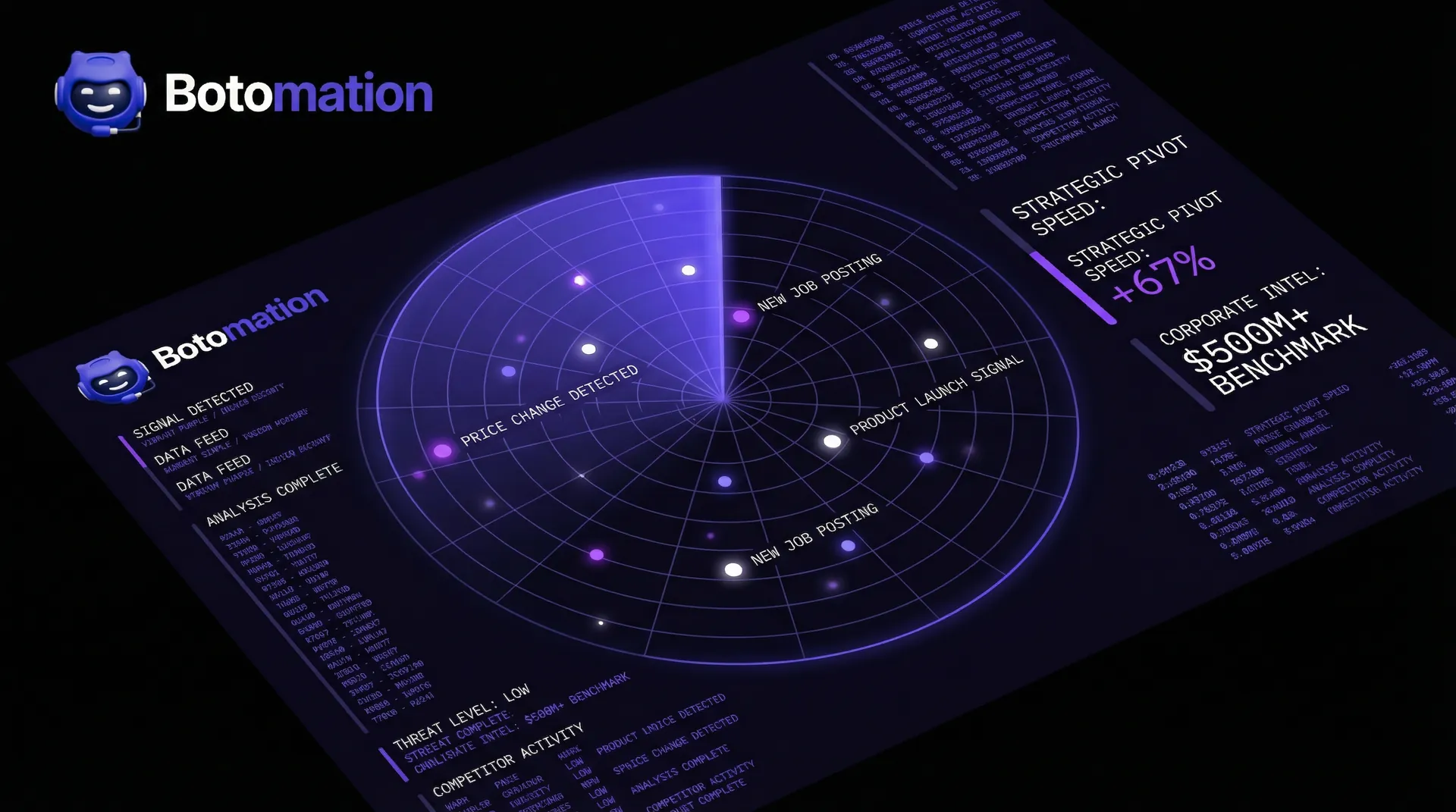

The global web scraping market passed the $1.03 billion mark by early 2026, signaling a massive shift in how organizations manage information. This valuation is not merely a reflection of technical interest; it reflects a necessity for survival in a market where data decays faster than ever before. Companies that have successfully integrated web scraping for industry trend analysis into their core operations are reporting a 67% increase in decision-making speed compared to those relying on manual cycles. This speed advantage allows firms to pivot strategies before a trend becomes common knowledge, capturing market share while competitors are still reviewing last month's reports.

Major industry leaders like Microsoft and Google are no longer treating market intelligence as a side project; each is reportedly investing over $500 million annually in automated platforms. These systems do more than just "look" at the web; they interpret the digital landscape in real time to predict shifts in consumer behavior and technological adoption. For B2B sales teams and growth marketers, reliance on stale lead lists and manual searches has become a significant liability, which is why many are learning how to prevent lead list decay to maintain a competitive edge. The modern standard demands a continuous stream of fresh, qualified data that reflects current events rather than what occurred a fiscal quarter ago. In the high-stakes environment of early 2026, the cost of ignorance is far higher than the cost of automation.

The evolution of these tools has moved beyond simple data extraction into the realm of predictive intelligence. By 2030, the market is expected to reach $2.00 billion, driven by a compound annual growth rate (CAGR) of 14.2%. This growth is fueled by the realization that manual research is no longer scalable or accurate enough to support high-stakes business decisions. As 2026 unfolds, the ability to automate the collection and analysis of industry trends has become the primary differentiator between market leaders and those struggling to keep pace with change.
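As a quick arithmetic check on these market figures, the standard compound-growth formula relates a starting value V0, a growth rate r, and a number of years n. Compounding $1.03 billion at 14.2% reaches about $2.00 billion after five years, but only about $1.75 billion after four. That suggests (an inference from the arithmetic, not a sourced fact) that the $1.03 billion figure corresponds to a 2025 base year.

```latex
\begin{aligned}
V_n &= V_0\,(1 + r)^n \\
1.03 \times 1.142^{5} &\approx 1.03 \times 1.942 \approx 2.00 \quad \text{(five years, billion USD)} \\
1.03 \times 1.142^{4} &\approx 1.03 \times 1.701 \approx 1.75 \quad \text{(four years, billion USD)}
\end{aligned}
```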

How Web Scraping for Industry Trend Analysis Works in 2026

Automated trend analysis is the systematic process of using software agents to scan, extract, and interpret data from across the internet to identify patterns. Unlike traditional market research, which often relies on static surveys and historical reports, automated systems provide a living view of the market. Our team at Botomation specializes in building these custom environments, ensuring that the data flowing into your sales pipeline is both relevant and immediate through daily market intelligence automation for B2B sales. This approach removes the human error and fatigue associated with manual browsing, allowing your experts to focus on strategy rather than data entry.

The traditional method of conducting research involves hiring analysts to spend dozens of hours every week scouring news sites, social media, and competitor blogs. This manual approach is not only expensive but inherently flawed; by the time a report is compiled, the data is often two weeks old. In 2026, two weeks is enough time for a competitor to launch a product, adjust their pricing, or capture a trending keyword. Automated systems, by contrast, work 24/7 without interruption, ensuring that every significant move in your industry is captured and categorized the moment it occurs.

The benefits of moving to an automated model extend far beyond simple time savings. When you partner with an agency like Botomation, you gain access to high-fidelity data that has been cleaned, deduplicated, and formatted for immediate use. This means your sales team starts every morning with a list of prospects who have just shown interest in a specific trend or competitors who have just adjusted their service offerings. The growth projections for this sector highlight a clear trend: businesses are moving away from "gut feelings" and toward data-backed certainty.

Market Intelligence Stat Box: The 2026 Data Landscape

* Decision Speed: Automated scraping increases strategic pivot speed by 67%.

* Market Value: The web scraping industry is currently valued at $1.03 billion.

* Projected Growth: Expected to hit $2.00 billion by 2030 (14.2% CAGR).

* Corporate Investment: Top-tier tech firms are reportedly spending $500M+ annually on internal intelligence tools.

* Efficiency Gain: Teams using automated prospecting save an average of 25 hours per week (for example, a five-person team saving about five hours per employee).

Defining Automated Trend Analysis in the Current Year

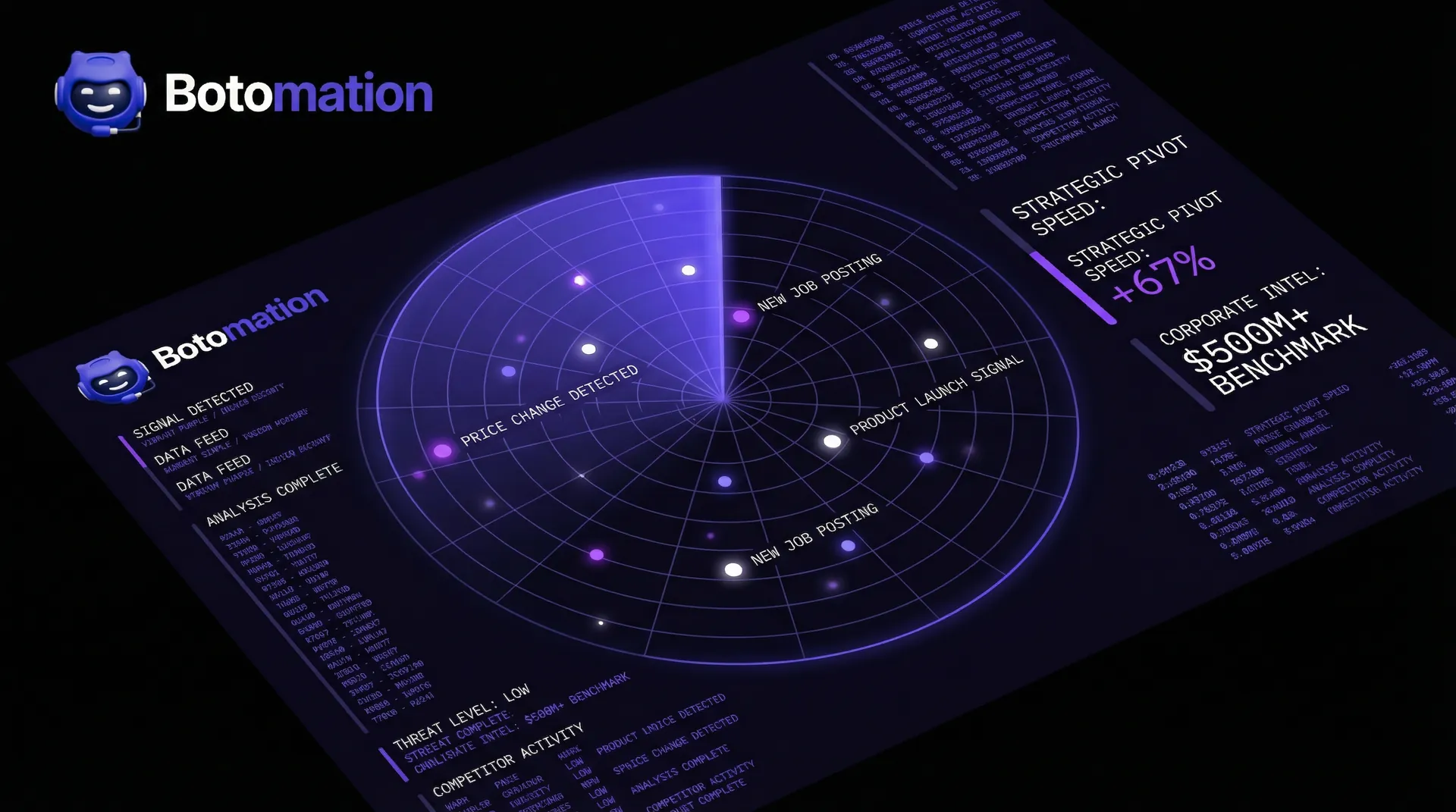

In the context of 2026, automated industry trend analysis is defined as the use of sophisticated web scraping and machine learning to monitor specific market sectors. It involves more than just pulling text from a page; it requires identifying the underlying signals that indicate a shift in the market. For example, a sudden spike in job postings for a specific technical skill across five major competitors is a trend signal that an automated system would catch instantly. A human researcher might miss this because they are looking at individual company pages rather than the aggregate data.
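To make the job-posting example concrete, here is a minimal Python sketch. It assumes weekly posting counts per competitor have already been scraped into a dictionary; the competitor names and numbers are hypothetical. It sums counts across competitors and flags the latest week when it sits well above the historical mean, measured in standard deviations (a simple z-score test chosen for illustration, not a prescribed method).

```python
from statistics import mean, stdev

def aggregate_weekly(counts_by_company: dict[str, list[int]]) -> list[int]:
    """Sum weekly posting counts across companies (all lists must have equal length)."""
    return [sum(week) for week in zip(*counts_by_company.values())]

def is_spike(series: list[int], threshold: float = 3.0) -> bool:
    """Flag the last value if it exceeds the mean of earlier values by `threshold` std devs."""
    history, latest = series[:-1], series[-1]
    if len(history) < 2:
        return False  # not enough history to estimate normal variation
    sd = stdev(history)
    if sd == 0:
        return latest > history[0]  # flat history: any increase counts
    return (latest - mean(history)) / sd > threshold

# Hypothetical weekly counts of postings mentioning one skill, five competitors
postings = {
    "competitor_a": [1, 0, 1, 1, 4],
    "competitor_b": [0, 1, 0, 1, 3],
    "competitor_c": [1, 1, 0, 0, 3],
    "competitor_d": [0, 0, 1, 0, 2],
    "competitor_e": [1, 0, 0, 1, 3],
}
totals = aggregate_weekly(postings)
print(totals, is_spike(totals))  # [3, 2, 2, 3, 15] True
```

No single competitor's count looks dramatic on its own; the signal only becomes obvious in the aggregate, which is the point the paragraph above makes.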

Web scraping acts as the engine for this identification process by providing the raw materials for analysis. Without automated extraction, trend identification is limited to what a person can physically see and remember. With it, you can track thousands of data points across diverse platforms simultaneously. This creates a fundamental difference between "looking for trends" and "monitoring trend data," where the latter is a continuous, systematic operation that feeds directly into business intelligence dashboards.

The Evolution of Market Research Methods

The shift from manual to automated research is perhaps the most significant change in business strategy over the last decade. In the past, market research was a luxury reserved for companies with massive budgets who could afford to wait months for a comprehensive study. Today, the democratization of scraping technology means that even mid-sized agencies can have the same level of insight as a Fortune 500 company. This evolution is driven by the integration of AI models like GPT-5, which can now summarize scraped content with human-level nuance at a fraction of the cost.

Data sources have also evolved from simple news sites to complex, interactive platforms. Modern extraction methods must handle JavaScript-heavy sites, mobile apps, and even encrypted data streams to get a full picture of the market. This complexity is why many firms choose to work with a dedicated agency like Botomation rather than trying to build tools in-house. Our experts manage the technical hurdles of anti-bot measures and proxy rotations, delivering a finished product that allows your team to skip the technical headaches and go straight to the insights.

Strategic Web Scraping for Industry Trend Analysis

Utilizing web scraping for industry trend analysis requires a strategic approach to selecting data sources. Not all data is created equal, and a system that scrapes everything will quickly become overwhelmed with noise. The goal is to identify high-value targets that serve as "early warning systems" for your specific niche. This might include niche trade journals, regulatory filings, or even the "Careers" pages of industry leaders. By focusing on these high-signal areas, the automated system can provide more accurate predictions about where the market is headed.

To maximize the effectiveness of this strategy, businesses must define specific "trigger events." These are predetermined data patterns that, when detected, initiate a specific business response. For instance, if three major competitors update their pricing models for a specific SaaS tier within the same short window, a system that tracks competitor pricing automatically can flag this as a pricing trend. This level of granular monitoring ensures that your team is never caught off guard by sudden market shifts, allowing for proactive rather than reactive management.
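A trigger event like the pricing example can be expressed as a simple rule over a stream of detected changes. The sketch below assumes each scraped change has already been reduced to a record of competitor, change type, and timestamp; the record shape, the 7-day window, and the threshold of three competitors are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class ChangeEvent:
    competitor: str
    change_type: str  # hypothetical labels such as "pricing" or "features"
    seen_at: datetime

def pricing_trend_triggered(events, now, window=timedelta(days=7), min_competitors=3):
    """True when at least `min_competitors` distinct competitors changed pricing
    within the trailing `window`."""
    recent = {
        e.competitor
        for e in events
        if e.change_type == "pricing" and now - window <= e.seen_at <= now
    }
    return len(recent) >= min_competitors

now = datetime(2026, 2, 17)
events = [
    ChangeEvent("acme", "pricing", now - timedelta(days=1)),
    ChangeEvent("globex", "pricing", now - timedelta(days=2)),
    ChangeEvent("initech", "pricing", now - timedelta(days=5)),
    ChangeEvent("acme", "pricing", now - timedelta(days=3)),  # same competitor counts once
]
print(pricing_trend_triggered(events, now))  # True
```

Counting distinct competitors rather than raw events keeps one very active rival from triggering a "market trend" on its own.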

Once the data is extracted, the processing phase begins, where raw text is transformed into actionable intelligence. This involves cleaning the data to remove duplicates and irrelevant information, followed by categorization based on your specific business goals. For a B2B sales team, this might mean flagging companies that have just received a new round of funding or those that are expanding into a new territory. The value lies in the transformation of millions of rows of data into a handful of high-priority alerts that your team can act on immediately.
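The deduplication step can be as simple as hashing a normalized version of each item's text, so the same press release syndicated across several sites is kept once. This minimal sketch assumes that exact duplicates after case and whitespace normalization are what matter; catching near-duplicates (for example with shingling or MinHash) would need more work.

```python
import hashlib
import re

def fingerprint(text: str) -> str:
    """SHA-256 of the text after lowercasing and collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def deduplicate(items: list[dict]) -> list[dict]:
    """Keep the first item seen for each distinct normalized body."""
    seen: set[str] = set()
    unique = []
    for item in items:
        fp = fingerprint(item["body"])
        if fp not in seen:
            seen.add(fp)
            unique.append(item)
    return unique

items = [
    {"source": "wire", "body": "Acme raises  $20M Series B"},
    {"source": "blog", "body": "acme raises $20M series b "},
    {"source": "news", "body": "Globex opens Berlin office"},
]
print([i["source"] for i in deduplicate(items)])  # ['wire', 'news']
```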

Identifying the Most Valuable Data Sources

The first step in any successful trend monitoring project is mapping the digital ecosystem of your industry. Industry publications and trade journals remain a gold mine for technical shifts and long-term strategic changes. However, social media platforms have become the primary source for emerging, fast-moving trends. Platforms like Reddit and LinkedIn provide a real-time pulse of what professionals are discussing, which often precedes official news reports by weeks.

News websites and press release wires provide the official narrative, but government and regulatory websites often hold the "hidden" data. Patent filings, SEC documents, and municipal contract awards can reveal a competitor's long-term roadmap long before they make a public announcement. Our team ensures that these diverse sources are integrated into a single, cohesive monitoring system. This prevents data silos and gives your growth marketers a holistic view of the competitive landscape.

Advanced Scraping Techniques for Trend Analysis

To get the most out of scraped data, we employ several advanced techniques that go beyond simple text extraction. Sentiment analysis is a key tool here, as it allows us to measure the market's "mood" regarding a specific topic. If mentions of a new technology are increasing but the sentiment is becoming increasingly negative, that is a strong signal that the trend may be a bubble or a failure. This nuance prevents businesses from chasing every "bright shiny object" and helps them focus on trends with genuine staying power.
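The "rising mentions, falling sentiment" warning sign can be sketched with a tiny word-list scorer. This is a deliberately crude stand-in for a real sentiment model; the word lists and the two-period comparison are illustrative assumptions, not how any particular product scores sentiment.

```python
POSITIVE = {"great", "love", "fast", "reliable", "useful"}   # toy lexicon
NEGATIVE = {"broken", "slow", "hype", "expensive", "useless"}

def sentiment(text: str) -> int:
    """+1 per positive word, -1 per negative word."""
    words = text.lower().split()
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

def average_sentiment(posts: list[str]) -> float:
    return sum(sentiment(p) for p in posts) / len(posts) if posts else 0.0

def bubble_warning(previous: list[str], current: list[str]) -> bool:
    """Warn when mention volume grows while average sentiment drops."""
    more_mentions = len(current) > len(previous)
    worse_mood = average_sentiment(current) < average_sentiment(previous)
    return more_mentions and worse_mood

last_month = ["great tool", "really useful for reporting"]
this_month = ["so much hype", "slow and expensive", "useful sometimes", "broken again"]
print(bubble_warning(last_month, this_month))  # True
```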

Frequency analysis and temporal tracking are also vital for understanding the lifecycle of a trend. By measuring how often a topic is mentioned over time, we can determine if it is in an early adoption phase, reaching a peak, or starting to decline. Cross-referencing these findings across multiple platforms—such as seeing a topic move from Reddit to a trade journal—validates the trend's legitimacy. This multi-layered approach ensures that the insights we provide are not just interesting, but statistically significant.
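Lifecycle tracking can be approximated by comparing mention growth rates across consecutive periods. The sketch below labels a trend as early adoption, peaking, or declining from a series of mention counts; the phase names follow the paragraph above, while the 20% growth threshold is an arbitrary assumption. A second helper captures the cross-platform idea by requiring a topic to appear on a minimum number of distinct platforms.

```python
def growth_rates(counts: list[int]) -> list[float]:
    """Period-over-period growth; a zero base is treated as no growth."""
    return [(b - a) / a if a else 0.0 for a, b in zip(counts, counts[1:])]

def lifecycle_phase(counts: list[int], rising: float = 0.2) -> str:
    rates = growth_rates(counts)
    if len(rates) < 2:
        return "insufficient data"
    prev, last = rates[-2], rates[-1]
    if last >= rising and last >= prev:
        return "early adoption"  # strong and still-accelerating growth
    if last < 0:
        return "declining"
    return "peaking"  # still growing or flat, but decelerating

def validated(platforms_seen: set[str], min_platforms: int = 2) -> bool:
    """Treat a topic as validated once it shows up on several distinct platforms."""
    return len(platforms_seen) >= min_platforms

print(lifecycle_phase([10, 14, 22]))  # early adoption
print(lifecycle_phase([10, 20, 24]))  # peaking
print(lifecycle_phase([30, 28, 20]))  # declining
print(validated({"reddit", "trade_journal"}))  # True
```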

| Feature | Traditional Manual Research | Botomation's Automated Approach |
|---|---|---|
| **Update Frequency** | Monthly or Quarterly | Real-time / Daily |
| **Data Volume** | Limited by human capacity | Millions of data points |
| **Accuracy** | Subject to human bias/error | High-precision programmatic extraction |
| **Cost** | High (expensive labor) | Low (customized automation) |
| **Lead Quality** | Often stale or outdated | Fresh and highly qualified |
| **Competitive Edge** | Reactive | Proactive and Predictive |

Building a Sturdy Automated Trend Monitoring System

Designing the technical architecture for a trend monitoring system is a task that requires a deep understanding of both software engineering and data science. At Botomation, we favor a microservices architecture for these projects. This approach allows us to separate the scraping logic, the data processing, and the user interface into independent components. If a specific website changes its layout, we only need to update one small part of the system rather than rebuilding the entire platform. This modularity ensures that your market intelligence remains uninterrupted.
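The modularity argument can be illustrated in-process with a parser registry: each target site gets its own small parsing function, so a layout change on one site means replacing one function. In a microservices deployment each parser would typically run as its own service; this single-file sketch, with a hypothetical domain and page layout, only demonstrates the separation of concerns.

```python
from typing import Callable
from urllib.parse import urlparse

Parser = Callable[[str], dict]
PARSERS: dict[str, Parser] = {}

def register(domain: str):
    """Decorator that maps a domain to its dedicated parser."""
    def wrap(func: Parser) -> Parser:
        PARSERS[domain] = func
        return func
    return wrap

@register("example-competitor.com")  # hypothetical target site
def parse_competitor(html: str) -> dict:
    # Hypothetical layout: the price sits inside <span class="price">...</span>
    marker = '<span class="price">'
    start = html.index(marker) + len(marker)
    return {"price": html[start:html.index("</span>", start)]}

def parse(url: str, html: str) -> dict:
    """Dispatch to the parser registered for the URL's host."""
    domain = urlparse(url).hostname or ""
    if domain not in PARSERS:
        raise KeyError(f"no parser registered for {domain}")
    return PARSERS[domain](html)

page = '<div><span class="price">$49</span></div>'
print(parse("https://example-competitor.com/pricing", page))  # {'price': '$49'}
```

If that site redesigns its pricing page, only `parse_competitor` changes; every other parser and the dispatch logic stay untouched.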

Scheduling and frequency are also critical considerations. Some data sources, like stock prices or breaking news, require per-minute updates, while others, like quarterly reports, only need to be checked once every few months. A well-designed system balances these needs to optimize resource usage and avoid being blocked by target websites. We also implement sophisticated data storage solutions, often utilizing time-series databases like TimescaleDB, which are specifically designed to handle the chronological nature of trend data and allow for rapid historical comparisons.
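Per-source scheduling can be modeled by giving each source its own check interval and fetching only the sources that are due. The source names and intervals below are hypothetical, and a production system would more likely delegate this to a job scheduler or message queue.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class Source:
    name: str
    interval: timedelta
    last_checked: Optional[datetime] = None

    def is_due(self, now: datetime) -> bool:
        return self.last_checked is None or now - self.last_checked >= self.interval

def due_sources(sources: list[Source], now: datetime) -> list[str]:
    """Names of sources whose interval has elapsed; marks them as checked."""
    due = [s for s in sources if s.is_due(now)]
    for s in due:
        s.last_checked = now
    return [s.name for s in due]

now = datetime(2026, 2, 17, 9, 0)
sources = [
    Source("breaking_news_feed", timedelta(minutes=1), now - timedelta(minutes=2)),
    Source("competitor_pricing_page", timedelta(days=1), now - timedelta(hours=3)),
    Source("quarterly_reports", timedelta(days=90)),  # never checked yet
]
print(due_sources(sources, now))  # ['breaking_news_feed', 'quarterly_reports']
```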

Designing the System Architecture

When deciding between cloud and on-premises deployment, the choice for most modern businesses is clearly the cloud. Cloud-based systems offer the scalability needed to handle sudden spikes in data volume, such as during a major industry event or a global crisis. Our experts typically utilize AWS or Google Cloud to host these systems, ensuring high availability and global reach. This also allows for easier integration with other business tools like your CRM or Slack, where alerts can be sent the moment a trend is detected.
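Slack alerts are commonly sent through an incoming webhook, which accepts a JSON body with a `text` field. The sketch below uses only the standard library; the webhook URL is a placeholder you would generate in your own Slack workspace, and the alert wording is hypothetical.

```python
import json
import urllib.request

def build_alert(trend: str, detail: str) -> bytes:
    """JSON payload in the simple text form that Slack incoming webhooks accept."""
    return json.dumps({"text": f"Trend detected: {trend}\n{detail}"}).encode("utf-8")

def send_alert(webhook_url: str, payload: bytes) -> int:
    """POST the payload to the webhook and return the HTTP status code."""
    request = urllib.request.Request(
        webhook_url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status

payload = build_alert("pricing", "3 competitors changed pricing this week")
# send_alert("https://hooks.slack.com/services/XXX/YYY/ZZZ", payload)  # placeholder URL
```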

The API design is the final piece of the architecture puzzle. It does not matter how good the data is if your team cannot access it easily. We build custom APIs that allow your existing software to "talk" to the trend monitoring system. This means your sales dashboard can automatically update with new leads, or your marketing software can adjust ad spend based on trending keywords. This level of integration transforms the monitoring system from a standalone tool into a central nervous system for your entire business.
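As an illustration of exposing trend data to other tools, here is a minimal read-only JSON endpoint built on Python's standard-library `http.server`. The `/trends` path and the record fields are hypothetical; a real deployment would add authentication and would more likely use a production web framework.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory data; a real system would query its trend database.
TRENDS = [{"topic": "usage-based pricing", "mentions_7d": 42, "phase": "early adoption"}]

class TrendHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/trends":
            self.send_error(404)
            return
        body = json.dumps(TRENDS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the example

def make_server(port: int = 8000) -> HTTPServer:
    """Bind to localhost; port 0 lets the OS pick a free port."""
    return HTTPServer(("127.0.0.1", port), TrendHandler)

# Usage: make_server().serve_forever(), then GET http://127.0.0.1:8000/trends
```

A CRM or dashboard would then poll this endpoint (or a richer version of it) instead of reading the scraper's storage directly.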

Managing Data Processing Pipelines

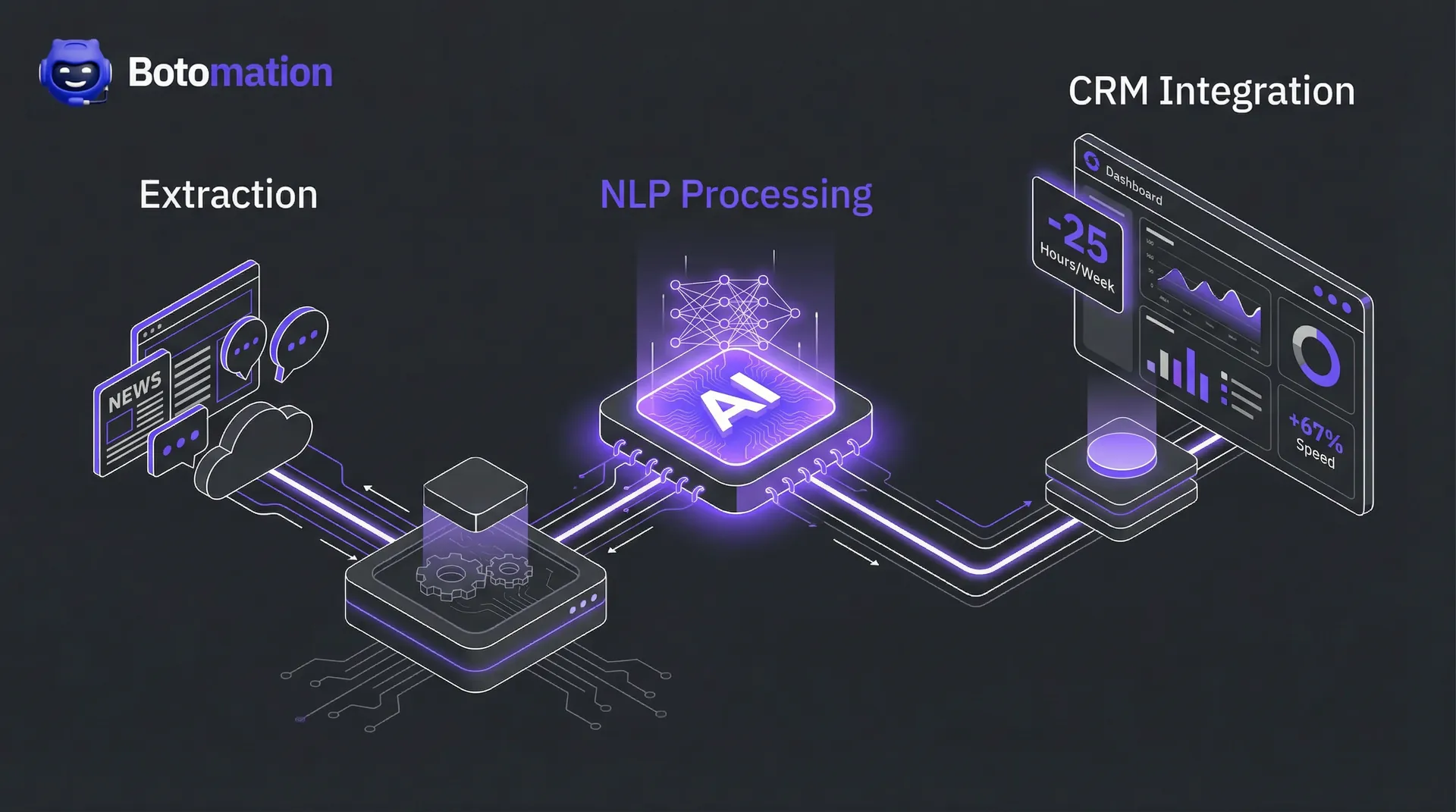

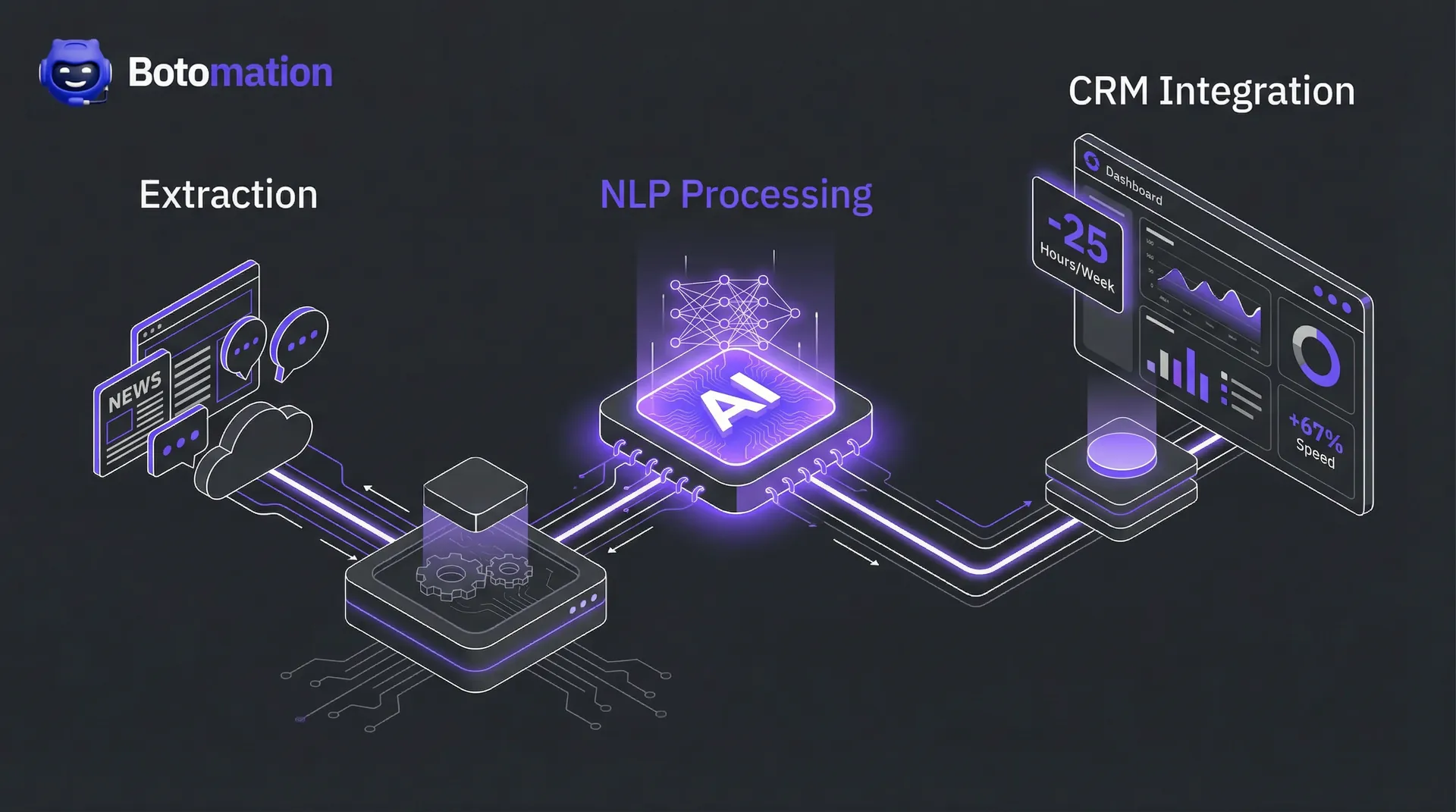

The journey from raw HTML to a polished report happens within the ETL (Extract, Transform, Load) pipeline. This is where the heavy lifting of data cleaning occurs. We use Natural Language Processing (NLP) to classify scraped content into specific categories, such as "Product Launch," "Price Change," or "Executive Move." This classification is what makes the data actionable; it allows a sales manager to filter for only the events that require an immediate follow-up.
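A production pipeline would use a trained NLP model for this classification step. The sketch below substitutes simple keyword rules so the idea runs without dependencies; the category names come from the paragraph above, while the patterns are illustrative assumptions that would misfire on plenty of real headlines.

```python
import re

CATEGORY_PATTERNS = {
    "Product Launch": r"\b(launch(es|ed)?|introduc(es|ed)|unveil(s|ed)?)\b",
    "Price Change": r"\b(price|pricing|discount)\b",
    "Executive Move": r"\b(appoint(s|ed)?|hires?|names?)\b.*\b(ceo|cto|cfo|vp|chief)\b",
}

def classify(text: str) -> list[str]:
    """Return every category whose pattern matches (case-insensitive)."""
    return [
        category
        for category, pattern in CATEGORY_PATTERNS.items()
        if re.search(pattern, text, flags=re.IGNORECASE)
    ]

print(classify("Acme unveils analytics suite with new pricing"))  # ['Product Launch', 'Price Change']
print(classify("Globex appoints former Initech CTO"))  # ['Executive Move']
```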

Data normalization is another critical step in this process. Because data comes from thousands of different sources, it often arrives in inconsistent formats. Our pipelines automatically standardize dates, currencies, and company names, ensuring that when you compare a news report from the UK with a social media post from the US, the data is perfectly aligned.

Quality assurance is built into every step of our pipeline. We use automated validation checks to ensure that the data being extracted is accurate and complete. If a scraper returns an empty field or a nonsensical value, the system flags it for review before it ever reaches your dashboard.
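Normalization and validation might look like the sketch below. It parses dates using the source region's convention (UK day/month versus US month/day), converts prices to USD, canonicalizes company names, and flags empty or implausible values for review. The exchange rates are made-up fixed placeholders and the alias table is hypothetical; a real pipeline would pull rates from a live source.

```python
from datetime import datetime
from typing import Optional

DATE_FORMAT_BY_REGION = {"UK": "%d/%m/%Y", "US": "%m/%d/%Y"}
USD_PER_UNIT = {"USD": 1.0, "GBP": 1.25, "EUR": 1.08}  # placeholder rates, not live data
COMPANY_ALIASES = {"acme corp": "Acme", "acme inc.": "Acme", "acme": "Acme"}  # hypothetical

def normalize_date(raw: str, region: str) -> Optional[str]:
    """ISO 8601 date, or None if the region is unknown or the date is invalid."""
    try:
        return datetime.strptime(raw.strip(), DATE_FORMAT_BY_REGION[region]).date().isoformat()
    except (KeyError, ValueError):
        return None

def to_usd(amount: float, currency: str) -> Optional[float]:
    rate = USD_PER_UNIT.get(currency.upper())
    return round(amount * rate, 2) if rate is not None else None

def canonical_company(name: str) -> str:
    return COMPANY_ALIASES.get(name.strip().lower(), name.strip())

def normalize(record: dict) -> dict:
    return {
        "company": canonical_company(record.get("company", "")),
        "date": normalize_date(record.get("date", ""), record.get("region", "")),
        "price_usd": to_usd(record.get("price", 0.0), record.get("currency", "")),
    }

def validation_issues(record: dict) -> list[str]:
    """Fields that are empty or implausible; a non-empty result means 'flag for review'."""
    issues = [field for field, value in record.items() if value in (None, "")]
    if record.get("price_usd") is not None and record["price_usd"] <= 0:
        issues.append("price_usd")
    return issues

uk = normalize({"company": "ACME Corp", "date": "03/04/2026", "region": "UK", "price": 40, "currency": "GBP"})
us = normalize({"company": "acme", "date": "04/03/2026", "region": "US", "price": 50, "currency": "USD"})
print(uk == us, uk)  # True {'company': 'Acme', 'date': '2026-04-03', 'price_usd': 50.0}
```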

Step-by-Step Tutorial: Setting Up a Basic Trend Monitor

While our team handles the complex heavy lifting, understanding the basic workflow can help you better define your business requirements. Here is how a standard automated monitoring project unfolds:

- Define Your Keywords and Targets: Identify the specific terms, competitors, and websites that are most relevant to your growth.

- Configure the Scraper Logic: Our experts write custom scripts to navigate target sites, handle anti-bot measures, and extract specific data points.

- Set Up the Cleaning Pipeline: Data is passed through filters to remove HTML tags, ads, and irrelevant content.

- Apply AI Categorization: We use models to determine the intent and category of each piece of data.

- Establish Alert Triggers: Define the "thresholds" that trigger a notification, such as a competitor dropping their price by more than 10%.

- Connect to Your CRM: The final, cleaned data is pushed directly into your sales or marketing tools for immediate action.
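Two of the steps above, cleaning scraped HTML and checking an alert threshold, are easy to sketch with the standard library. The parser keeps visible text and skips `script` and `style` content; the 10% price-drop rule mirrors the example threshold in the list, and the sample page is hypothetical.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collects visible text, ignoring <script> and <style> contents."""
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())

def clean_text(html: str) -> str:
    extractor = TextExtractor()
    extractor.feed(html)
    extractor.close()
    return " ".join(extractor.parts)

def price_drop_alert(old_price: float, new_price: float, threshold: float = 0.10) -> bool:
    """True when the price fell by more than `threshold` (10% by default)."""
    return old_price > 0 and (old_price - new_price) / old_price > threshold

page = "<html><style>p{color:red}</style><body><p>Pro plan</p><script>track()</script><p>$79/mo</p></body></html>"
print(clean_text(page))  # Pro plan $79/mo
print(price_drop_alert(99.0, 79.0))  # True
```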

Extracting Insights from Social Media and News

Social media has evolved from a place for personal updates into a massive, decentralized focus group. For B2B companies, platforms like LinkedIn and X (formerly Twitter) provide a direct line into the conversations of decision-makers. By scraping these platforms, we can identify "pain points" that are currently affecting your target audience. If a large number of professionals are complaining about a specific feature in a competitor's software, your sales team can use that information to position your product as the superior alternative.

News aggregation is equally important but requires a different technical approach. Unlike social media, news sites often have high-quality, long-form content that needs to be summarized. We use automated summarization tools to condense a 2,000-word industry report into a three-sentence brief for your executives. This allows your leadership team to stay informed on dozens of different topics without spending hours reading every morning. Cross-platform validation is the final step, where we ensure that a trend appearing on social media is being supported by official news sources.
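The article describes model-based summarization tools; as a dependency-free stand-in, the sketch below shows the simpler extractive approach. It scores each sentence by the average document-wide frequency of its words and keeps the top three in their original order. The naive sentence splitter and the short stopword list are simplifying assumptions.

```python
import re
from collections import Counter

STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "for", "on", "that", "with", "its"}

def content_words(sentence: str) -> list[str]:
    return [w for w in re.findall(r"[a-z']+", sentence.lower()) if w not in STOPWORDS]

def summarize(text: str, max_sentences: int = 3) -> str:
    """Keep the highest-scoring sentences, preserving their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence: str) -> float:
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return " ".join(sentences[i] for i in sorted(ranked[:max_sentences]))

report = ("Pricing is shifting to usage. Usage pricing grows fast. The weather was nice. "
          "Vendors adopt usage pricing. Lunch was served.")
print(summarize(report))
```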

Mastering Social Media Trend Analysis

Scraping LinkedIn and Reddit requires a delicate touch due to their strict anti-scraping measures. Our team uses advanced proxy management and browser fingerprinting to ensure that our agents appear as regular users, preventing IP bans. Once we have access, we focus on engagement metrics like shares, comments, and "upvotes" as indicators of a trend's strength. In 2026, the depth of engagement is often a more reliable predictor of market longevity than simple reach.
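One way to express "depth over reach" is an engagement rate that weights comments and shares above passive reactions and divides by views. The weights below are arbitrary assumptions for illustration, not a metric defined by any platform.

```python
def engagement_depth(views: int, reactions: int, comments: int, shares: int) -> float:
    """Weighted interactions per view; comments and shares count more than reactions."""
    if views <= 0:
        return 0.0
    weighted = reactions * 1 + comments * 3 + shares * 2  # hypothetical weights
    return weighted / views

viral = engagement_depth(views=100_000, reactions=900, comments=20, shares=30)
niche = engagement_depth(views=2_000, reactions=40, comments=60, shares=25)
print(f"{viral:.4f} {niche:.4f}")  # 0.0102 0.1350
```

Here the small, heavily discussed post scores far higher than the widely seen one, matching the paragraph's claim that depth can matter more than reach.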

Sentiment analysis within social threads also provides a nuanced view of the B2B decision cycle. By tracking how sentiment shifts during the launch phase of a competitor’s product, we can predict the likely churn rate or market reception. Influencer monitoring is another high-value strategy. In most industries, a handful of thought leaders tend to set the agenda. By tracking what these individuals are discussing, we can often predict what the rest of the industry will be discussing in three to six months. This "influencer-led" trend identification is a cornerstone of our automated prospecting service, as it helps you find leads who are following the latest industry advice.

Automated News Extraction and Categorization

The news cycle in 2026 is relentless. To keep up, we build scrapers that monitor thousands of RSS feeds, blogs, and news portals simultaneously. The challenge here is not getting the data; it is categorizing it. We use machine learning models to tag news stories by industry, company, and relevance score. This ensures that a growth marketer in the fintech space is not distracted by news about the healthcare sector unless there is a direct crossover, such as a new payment regulation.
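Reading an RSS feed and tagging items by industry can be done with the standard library's XML parser. The sketch assumes RSS 2.0 (`channel/item` elements with `title` and `link`) and uses hypothetical keyword lists; "relevance" here is simply the number of matched keywords, a placeholder for a learned score.

```python
import xml.etree.ElementTree as ET

INDUSTRY_KEYWORDS = {  # hypothetical keyword lists
    "fintech": {"payment", "payments", "banking", "lending"},
    "healthcare": {"hospital", "clinical", "patient"},
}

def tag_feed(rss_xml: str) -> list[dict]:
    root = ET.fromstring(rss_xml)
    tagged = []
    for item in root.iter("item"):
        title = item.findtext("title", default="")
        words = set(title.lower().split())
        scores = {industry: len(words & kws) for industry, kws in INDUSTRY_KEYWORDS.items()}
        tagged.append({
            "title": title,
            "link": item.findtext("link", default=""),
            "tags": [industry for industry, score in scores.items() if score > 0],
            "relevance": sum(scores.values()),
        })
    return tagged

sample = """<rss version="2.0"><channel>
<item><title>New payment regulation affects hospital billing</title><link>https://example.com/1</link></item>
<item><title>Chip supply update</title><link>https://example.com/2</link></item>
</channel></rss>"""
for entry in tag_feed(sample):
    print(entry["tags"], entry["relevance"])
```

The first item is tagged with both industries, which is exactly the kind of crossover (a payment regulation touching healthcare billing) the paragraph says should not be filtered out.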

Tracking the evolution of a story is where the real insight lies. We can see how a "breaking news" item on a small tech blog eventually makes its way to the Wall Street Journal. By the time it hits the mainstream media, the opportunity to act on it has often passed. Our systems capture these stories at the "blog" stage, giving you a head start of several days. This temporal advantage is a key component of the "New Way" of doing business that we champion at Botomation.

Monitoring Competitors for Strategic Advantages

Competitor monitoring is perhaps the most direct application of web scraping for industry trend analysis. By keeping a constant eye on your rivals (for example, by following the 7 steps to automate competitor intelligence), you can see exactly how they are responding to market shifts. Are they hiring more developers? Are they cutting prices on their flagship product? Are they forming new partnerships? These are all signals of their internal strategy. Our custom tools can track these changes across their websites, social profiles, and public filings, providing you with a "shadow" view of their strategic decisions.

Netflix is often cited as a case study in this regard. It is reported to use large-scale data collection to monitor not just what people are watching on its own platform, but also what is trending on social media and competitor services. This helps it greenlight shows that align with current cultural trends before those trends peak. While your business might not be a global streaming giant, the same principles apply. Knowing what your competitors are doing allows you to either follow suit or, more importantly, find the gaps they are leaving behind.

Analyzing Competitor Content and Product Moves

A competitor's blog is often a roadmap of their future intentions. When a company starts publishing heavily about a specific topic, it's a clear sign they are preparing to launch a related product or service. Our systems monitor these content shifts and alert you to the change in focus. Similarly, we track product pages for any changes in features, pricing, or documentation. Even a small change in the "Terms of Service" can indicate a shift in how they handle their customers or data.
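Tracking changes on a product or terms page usually comes down to storing the previous snapshot and diffing it against the new one. The sketch below uses Python's `difflib` on already-cleaned page text; the snapshot contents are hypothetical.

```python
import difflib

def changed_lines(old_text: str, new_text: str) -> list[str]:
    """Lines removed (-) or added (+) between two snapshots of a page."""
    diff = difflib.unified_diff(old_text.splitlines(), new_text.splitlines(), lineterm="", n=0)
    return [
        line for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]

yesterday = "Starter: $29/mo\nPro: $99/mo\nData retained for 90 days"
today = "Starter: $29/mo\nPro: $79/mo\nData retained for 30 days"
for line in changed_lines(yesterday, today):
    print(line)
```

Running this on a schedule and alerting only when the result is non-empty keeps unchanged pages from generating noise.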

Resource allocation is another "tell" that we can track. By scraping job boards like Indeed or LinkedIn, we can see which departments a competitor is investing in. If they are suddenly hiring ten new AI researchers, that is a strong sign an AI-related update is coming. This type of intelligence is invaluable for sales teams, as it allows them to proactively address the "What about [Competitor's] new feature?" question before the customer even asks it.

Tracking the Trend Adoption Timeline

Not all companies adopt trends at the same speed. Some are "early adopters," while others are "laggards." By measuring the response time of your competitors to new industry shifts, we can create a profile of their strategic agility. If a new regulation is announced and Competitor A updates their website within 24 hours while Competitor B takes three weeks, you know exactly who your most dangerous rival is. This data helps you prioritize your own efforts and resources.
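Strategic agility can be quantified as the lag between an industry event and each competitor's first visible response. The timestamps below are hypothetical; in practice the "first response" time would come from a change-detection step.

```python
from datetime import datetime

def response_lags_hours(event_time: datetime, first_responses: dict[str, datetime]) -> list[tuple[str, float]]:
    """Competitors ranked from fastest to slowest response, with lag in hours."""
    lags = {name: (t - event_time).total_seconds() / 3600 for name, t in first_responses.items()}
    return sorted(lags.items(), key=lambda pair: pair[1])

regulation_announced = datetime(2026, 1, 5, 9, 0)
responses = {
    "competitor_b": datetime(2026, 1, 26, 9, 0),  # three weeks later
    "competitor_a": datetime(2026, 1, 6, 8, 0),   # website updated within 24 hours
}
print(response_lags_hours(regulation_announced, responses))
# [('competitor_a', 23.0), ('competitor_b', 504.0)]
```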

Predicting a competitor's next move becomes much easier when you have years of historical trend data to look at. We can identify patterns in their behavior, such as a tendency to launch new products every September or to drop prices whenever a certain market indicator hits a specific level. This predictive capability transforms your sales team from being reactive to being proactive. You aren't just responding to the market; you are anticipating it, thanks to the custom tools built by the Botomation team.

Visualizing Trends for Better Decision Making

The final stage of any automated system is the presentation of data. Raw spreadsheets are rarely useful for high-level decision-makers. Instead, we create interactive dashboards that turn complex data points into visual stories. A line graph showing the rise of a specific keyword over six months is much easier to understand than a list of 5,000 dates and counts. These visualizations allow stakeholders to see the "big picture" at a glance and drill down into the details only when necessary.

Predictive trend analysis is the "holy grail" of this process. By using historical data and current trajectories, we can forecast where a trend will be in six or twelve months. This is not crystal ball gazing; it is statistical modeling based on hard data. Whether you are deciding on next year's budget or planning a new marketing campaign, having a data-backed forecast provides a level of confidence that manual research simply cannot match.

Creating Actionable Trend Reports

A good trend report should do more than just list facts; it should provide a "So What?" for every data point. At Botomation, we design our reporting systems to highlight actionable opportunities. If the data shows a trend is rising, the report should suggest specific actions, such as "Increase ad spend on [Keyword]" or "Target [Competitor's] disgruntled customers." This bridge between data and action is what makes our service a premium agency offering rather than just a technical tool.

Risk assessment is also a key part of our reporting. Not every trend is an opportunity; some are threats. If a new technology is making your current service offering obsolete, you need to know that as early as possible. Our reports include an "Impact Analysis" that ranks trends based on their potential to disrupt your business. This allows you to allocate your defensive resources just as effectively as your offensive ones.

Integrating Intelligence with Business Planning

The ultimate goal of automated market research is to have it integrated so deeply into your business that it becomes invisible. Your planning meetings should start with the latest trend data already on the screen. Your sales team should receive their daily leads without having to log into a separate system. This level of integration is what we strive for with every client. By removing the friction between "knowing" and "doing," we help you build a more agile, responsive, and profitable organization.

In the fast-moving landscape of early 2026, the "Old Way" of manual research is a recipe for stagnation. The "New Way" (partnering with experts to build custom, automated intelligence systems) is the only way to ensure consistent growth. As the volume of data on the web continues to explode, the value of having a system that can filter, analyze, and present that data in real time will only increase.

Frequently Asked Questions

How does web scraping for industry trend analysis differ from using a standard SEO tool?

Standard SEO tools focus primarily on keywords and search rankings, which are reactive metrics. Web scraping for industry trend analysis is much broader: drawing on the same techniques used in web scraping for competitor intelligence, it monitors news, social media, competitor job boards, and regulatory filings to uncover the forces behind keyword shifts before those shifts show up in search data. It provides a holistic view of market strategy, not just search engine performance.

Is automated web scraping legal for monitoring competitors?

Generally, yes, as long as it is conducted ethically and in compliance with data privacy laws like GDPR and CCPA. Our team at Botomation follows industry best practices, focusing on publicly available data and respecting robots.txt files where applicable. We ensure that the data collection process is robust, transparent, and legally sound, so your business remains protected.
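Checking robots.txt before fetching can be automated with Python's built-in `urllib.robotparser`. The sketch parses an inline, hypothetical robots.txt so it runs offline; against a live site you would call `set_url(...)` and `read()` instead of `parse(...)`. The user-agent name is also hypothetical.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Crawl-delay: 5
"""

def make_policy(robots_txt: str) -> RobotFileParser:
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

policy = make_policy(ROBOTS_TXT)
agent = "TrendMonitorBot"  # hypothetical user-agent name
print(policy.can_fetch(agent, "https://example.com/blog/post"))    # True
print(policy.can_fetch(agent, "https://example.com/admin/users"))  # False
print(policy.crawl_delay(agent))                                   # 5
```

A scraper would skip disallowed URLs and wait at least the crawl delay between requests to the same host.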

How much time can my team really save by automating this process?

On average, a B2B sales team of five people can save upwards of 100 hours per month. Instead of each person spending five hours a week on manual research, they receive a curated list of fresh leads and market insights every morning. This frees them to spend that time on high-value activities like closing deals and building relationships.
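The arithmetic behind the 100-hour figure, using the five-person team and five hours per person per week from the answer above, with about 52/12 ≈ 4.33 weeks per month:

```latex
5\ \text{people} \times 5\ \tfrac{\text{h}}{\text{person}\cdot\text{week}} \times \tfrac{52}{12}\ \tfrac{\text{weeks}}{\text{month}} \approx 108\ \tfrac{\text{h}}{\text{month}}
```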

Can these tools handle websites with complex security or "anti-bot" measures?

Absolutely. This is one of the primary reasons companies partner with Botomation rather than trying to use off-the-shelf software. Our experts use advanced techniques like residential proxy rotation, headless browser management, and behavioral simulation to ensure our scrapers are indistinguishable from human visitors, allowing us to gather data from even the most protected sites.

How often is the data updated in a custom monitoring system?

The frequency is entirely up to you. We can build systems that update in real-time for fast-moving news and social trends, or systems that run daily or weekly for broader market shifts. Most of our clients find that a daily "morning brief" provides the perfect balance of freshness and actionability for their sales and marketing teams.

What kind of ROI can I expect from automated trend analysis?

Most clients see a return on investment within the first quarter. By identifying market shifts 67% faster, businesses can capture early-adopter market share and avoid investing in declining trends. Additionally, the reduction in manual labor costs typically covers the cost of the automation system within months.

Automated industry trend analysis provides businesses with significant competitive advantages in fast-moving markets. Success requires careful planning of technical architecture, data sources, and analysis methodologies. By moving away from the slow, manual processes of the past and embracing the custom, automated solutions of the future, you position your company at the forefront of your industry.

Ready to automate your growth? Stop losing money to manual research and outdated lead lists today. Book a call below and let our experts build the market intelligence system your business deserves.

The global web scraping market has officially surpassed the $1.03 billion mark as of early 2026, signaling a massive shift in how organizations manage information. This valuation is not merely a reflection of technical interest; it is a necessity for survival in a market where data decays faster than ever before. Companies that have successfully integrated web scraping for industry trend analysis into their core operations are reporting a 67% increase in decision-making speed compared to those relying on manual cycles. This speed advantage allows firms to pivot strategies before a trend becomes common knowledge, capturing market share while competitors are still reviewing last month's reports.

Major industry leaders like Microsoft and Google are no longer treating market intelligence as a side project, with each investing over $500 million annually into automated platforms. These systems do more than just "look" at the web; they interpret the digital landscape in real-time to predict shifts in consumer behavior and technological adoption. For B2B sales teams and growth marketers, the reliance on stale lead lists and manual searches has become a significant liability, which is why many are learning how to prevent lead list decay to maintain a competitive edge. The modern standard demands a continuous stream of fresh, qualified data that reflects current events, rather than what occurred a fiscal quarter ago. In the high-stakes environment of January 2026, the cost of ignorance is far higher than the cost of automation.

The evolution of these tools has moved beyond simple data extraction into the realm of predictive intelligence. By 2030, the market is expected to reach $2.00 billion, driven by a compound annual growth rate of 14.2%. This growth is fueled by the realization that manual research is no longer scalable or accurate enough to support high-stakes business decisions. As we navigate through the final quarter of 2026, the ability to automate the collection and analysis of industry trends has become the primary differentiator between market leaders and those struggling to keep pace with change.

How Web Scraping for Industry Trend Analysis Works in 2026

Automated trend analysis is the systematic process of using software agents to scan, extract, and interpret data from across the internet to identify patterns. Unlike traditional market research, which often relies on static surveys and historical reports, automated systems provide a living view of the market. Our team at Botomation specializes in building these custom environments, ensuring that the data flowing into your sales pipeline is both relevant and immediate through daily market intelligence automation for B2B sales. This approach removes the human error and fatigue associated with manual browsing, allowing your experts to focus on strategy rather than data entry.

The traditional method of conducting research involves hiring analysts to spend dozens of hours every week scouring news sites, social media, and competitor blogs. This manual approach is not only expensive but inherently flawed; by the time a report is compiled, the data is often two weeks old. In 2026, two weeks is enough time for a competitor to launch a product, adjust their pricing, or capture a trending keyword. Automated systems, by contrast, work 24/7 without interruption, ensuring that every significant move in your industry is captured and categorized the moment it occurs.

The benefits of moving to an automated model extend far beyond simple time savings. When you partner with an agency like Botomation, you gain access to high-fidelity data that has been cleaned, deduplicated, and formatted for immediate use. This means your sales team starts every morning with a list of prospects who have just shown interest in a specific trend or competitors who have just adjusted their service offerings. The growth projections for this sector highlight a clear trend: businesses are moving away from "gut feelings" and toward data-backed certainty.

Market Intelligence Stat Box: The 2026 Data Landscape

* Decision Speed: Automated scraping increases strategic pivot speed by 67%.

* Market Value: The web scraping industry is currently valued at $1.03 billion.

* Projected Growth: Expected to hit $2.00 billion by 2030 (14.2% CAGR).

* Corporate Investment: Top-tier tech firms such as Microsoft and Google are each spending $500M+ annually on automated intelligence platforms.

* Efficiency Gain: A five-person team using automated prospecting saves an average of 25 hours per week (about five hours per employee).

Defining Automated Trend Analysis in the Current Year

In the context of 2026, automated industry trend analysis is defined as the use of sophisticated web scraping and machine learning to monitor specific market sectors. It involves more than just pulling text from a page; it requires identifying the underlying signals that indicate a shift in the market. For example, a sudden spike in job postings for a specific technical skill across five major competitors is a trend signal that an automated system would catch instantly. A human researcher might miss this because they are looking at individual company pages rather than the aggregate data.
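To make the job-posting example concrete, here is a minimal sketch that aggregates scraped postings per skill across several competitors and flags a skill when its current-week count is at least double its prior weekly average and it appears at three or more companies. The record format, the 2× ratio, the three-company minimum, and the competitor names are all hypothetical assumptions, not a description of any production system.

```python
from collections import Counter, defaultdict

# Hypothetical scraped records: (week_number, competitor, skill). Weeks start at 1.
postings = [
    (1, "CompA", "rust"), (1, "CompB", "python"),
    (2, "CompC", "rust"), (2, "CompA", "python"),
    (3, "CompA", "rust"), (3, "CompB", "rust"), (3, "CompC", "rust"),
    (3, "CompD", "rust"), (3, "CompE", "rust"), (3, "CompB", "python"),
]


def weekly_counts(records):
    """Count postings per skill per week, aggregated across all competitors."""
    counts = defaultdict(Counter)
    for week, _competitor, skill in records:
        counts[skill][week] += 1
    return counts


def flag_spikes(records, current_week, ratio=2.0, min_companies=3):
    """Return (skill, current_count, baseline) for skills whose current-week count
    is >= ratio x the average of earlier weeks and that appear at >= min_companies."""
    flagged = []
    for skill, by_week in weekly_counts(records).items():
        prior = [by_week[w] for w in range(1, current_week)]  # Counter yields 0 for gaps
        baseline = sum(prior) / len(prior) if prior else 0
        current = by_week[current_week]
        companies = {c for w, c, s in records if s == skill and w == current_week}
        if current >= ratio * max(baseline, 1) and len(companies) >= min_companies:
            flagged.append((skill, current, baseline))
    return flagged


print(flag_spikes(postings, current_week=3))  # [('rust', 5, 1.0)]
```

Looking at individual company pages, each competitor shows only one new Rust posting; the signal only emerges once the counts are summed across all five.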

Web scraping acts as the engine for this identification process by providing the raw materials for analysis. Without automated extraction, trend identification is limited to what a person can physically see and remember. With it, you can track thousands of data points across diverse platforms simultaneously. This creates a fundamental difference between "looking for trends" and "monitoring trend data," where the latter is a continuous, systematic operation that feeds directly into business intelligence dashboards.

The Evolution of Market Research Methods

The shift from manual to automated research is perhaps the most significant change in business strategy over the last decade. In the past, market research was a luxury reserved for companies with massive budgets who could afford to wait months for a comprehensive study. Today, the democratization of scraping technology means that even mid-sized agencies can have the same level of insight as a Fortune 500 company. This evolution is driven by the integration of AI models like GPT-5, which can now summarize scraped content with human-level nuance at a fraction of the cost.

Data sources have also evolved from simple news sites to complex, interactive platforms. Modern extraction methods must handle JavaScript-heavy sites, mobile apps, and even encrypted data streams to get a full picture of the market. This complexity is why many firms choose to work with a dedicated agency like Botomation rather than trying to build tools in-house. Our experts manage the technical hurdles of anti-bot measures and proxy rotations, delivering a finished product that allows your team to skip the technical headaches and go straight to the insights.

Strategic Web Scraping for Industry Trend Analysis

Utilizing web scraping for industry trend analysis requires a strategic approach to selecting data sources. Not all data is created equal, and a system that scrapes everything will quickly become overwhelmed with noise. The goal is to identify high-value targets that serve as "early warning systems" for your specific niche. This might include niche trade journals, regulatory filings, or even the "Careers" pages of industry leaders. By focusing on these high-signal areas, the automated system can provide more accurate predictions about where the market is headed.

To maximize the effectiveness of this strategy, businesses must define specific "trigger events." These are pre-determined data patterns that, when detected, initiate a specific business response. For instance, if three major competitors update their pricing models for the same SaaS tier within a short window, the system can automatically flag this as a pricing trend using automated competitor price tracking. This level of granular monitoring ensures that your team is never caught off guard by sudden market shifts, allowing for proactive rather than reactive management.
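A trigger event like the pricing example can be expressed as a sliding-window rule. The sketch below assumes the scraper already emits (competitor, tier, timestamp) price-change events; the event format, the seven-day window, and the three-competitor threshold are illustrative assumptions.

```python
from datetime import datetime, timedelta

# Hypothetical price-change events detected by the scraper: (competitor, tier, timestamp)
price_changes = [
    ("CompA", "pro", datetime(2026, 2, 2, 9)),
    ("CompB", "pro", datetime(2026, 2, 3, 14)),
    ("CompC", "pro", datetime(2026, 2, 5, 8)),
    ("CompB", "starter", datetime(2026, 1, 10, 12)),
]


def pricing_trend_triggers(events, window=timedelta(days=7), min_competitors=3):
    """Return tiers where at least `min_competitors` distinct competitors
    changed pricing within a single window of length `window`."""
    by_tier = {}
    for competitor, tier, ts in events:
        by_tier.setdefault(tier, []).append((ts, competitor))
    triggered = []
    for tier, items in by_tier.items():
        items.sort()  # chronological order
        for i, (start, _) in enumerate(items):
            # Distinct competitors whose change falls within `window` of this one
            in_window = {c for ts, c in items[i:] if ts - start <= window}
            if len(in_window) >= min_competitors:
                triggered.append(tier)
                break
    return triggered


print(pricing_trend_triggers(price_changes))  # ['pro']
```

Counting distinct competitors, rather than raw events, keeps one vendor that edits its pricing page several times from tripping the trigger on its own.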

Once the data is extracted, the processing phase begins, where raw text is transformed into actionable intelligence. This involves cleaning the data to remove duplicates and irrelevant information, followed by categorization based on your specific business goals. For a B2B sales team, this might mean flagging companies that have just received a new round of funding or those that are expanding into a new territory. The value lies in the transformation of millions of rows of data into a handful of high-priority alerts that your team can act on immediately.
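A stripped-down version of this processing phase might deduplicate items and then flag the ones that match business-relevant patterns. In the sketch below, deduplication is exact matching after normalizing case and whitespace; production systems often add fuzzy matching. The keyword patterns for "funding" and "expansion" are hypothetical stand-ins for whatever categorization your goals require.

```python
import hashlib
import re

# Hypothetical patterns for the events a B2B sales team might care about
FLAG_PATTERNS = {
    "funding": re.compile(r"\b(raises?|raised|series [a-e]|funding round)\b", re.I),
    "expansion": re.compile(r"\b(expands?|expansion|opens? (an )?office)\b", re.I),
}


def fingerprint(text):
    """Lower-case and collapse whitespace, then hash, so trivial copies collide."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def process(items):
    """Drop duplicates, then keep only items that match at least one flag pattern."""
    seen, alerts = set(), []
    for item in items:
        fp = fingerprint(item["text"])
        if fp in seen:
            continue  # duplicate of an earlier item (e.g. a syndicated copy)
        seen.add(fp)
        labels = [name for name, pat in FLAG_PATTERNS.items() if pat.search(item["text"])]
        if labels:
            alerts.append({"company": item["company"], "labels": labels})
    return alerts


raw = [
    {"company": "Acme", "text": "Acme raises $20M Series B"},
    {"company": "Acme", "text": "acme  raises $20M series b"},  # duplicate
    {"company": "Globex", "text": "Globex opens an office in Berlin"},
    {"company": "Initech", "text": "Initech updates its logo"},
]
print(process(raw))
```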

Identifying the Most Valuable Data Sources

The first step in any successful trend monitoring project is mapping the digital ecosystem of your industry. Industry publications and trade journals remain a gold mine for technical shifts and long-term strategic changes. However, social media platforms have become the primary source for emerging, fast-moving trends. Platforms like Reddit and LinkedIn provide a real-time pulse of what professionals are discussing, which often precedes official news reports by weeks.

News websites and press release wires provide the official narrative, but government and regulatory websites often hold the "hidden" data. Patent filings, SEC documents, and municipal contract awards can reveal a competitor's long-term roadmap long before they make a public announcement. Our team ensures that these diverse sources are integrated into a single, cohesive monitoring system. This prevents data silos and gives your growth marketers a holistic view of the competitive landscape.

Advanced Scraping Techniques for Trend Analysis

To get the most out of scraped data, we employ several advanced techniques that go beyond simple text extraction. Sentiment analysis is a key tool here, as it allows us to measure the market's "mood" regarding a specific topic. If mentions of a new technology are increasing but the sentiment is becoming increasingly negative, that is a strong signal that the trend may be a bubble or a failure. This nuance prevents businesses from chasing every "bright shiny object" and helps them focus on trends with genuine staying power.
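The "rising volume, falling sentiment" signal can be checked with a few lines once each mention has a sentiment score. This sketch assumes scores in the range -1 to 1 have already been produced by some sentiment model (not shown) and are grouped by week; requiring a strictly monotonic change every week is a deliberately simple, hypothetical rule.

```python
from statistics import mean

# Hypothetical weekly sentiment scores (-1..1), one list of mention scores per week
weekly_scores = [
    [0.6, 0.4, 0.5],
    [0.3, 0.2, 0.4, 0.1],
    [0.0, -0.2, 0.1, -0.3, -0.1],
    [-0.4, -0.3, -0.5, -0.2, -0.6, -0.1],
]


def divergence_signal(weeks):
    """Flag a possible bubble: mention volume rises every week while the
    average sentiment falls every week. Returns (flag, volumes, sentiments)."""
    volumes = [len(w) for w in weeks]
    sentiments = [mean(w) for w in weeks]
    volume_rising = all(b > a for a, b in zip(volumes, volumes[1:]))
    sentiment_falling = all(b < a for a, b in zip(sentiments, sentiments[1:]))
    return volume_rising and sentiment_falling, volumes, sentiments


flag, volumes, sentiments = divergence_signal(weekly_scores)
print(flag, volumes)  # True [3, 4, 5, 6]
```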

Frequency analysis and temporal tracking are also vital for understanding the lifecycle of a trend. By measuring how often a topic is mentioned over time, we can determine if it is in an early adoption phase, reaching a peak, or starting to decline. Cross-referencing these findings across multiple platforms—such as seeing a topic move from Reddit to a trade journal—validates the trend's legitimacy. This multi-layered approach ensures that the insights we provide are not just interesting, but statistically significant.
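A crude version of lifecycle classification compares the average mention count of the most recent periods with the periods just before them, then requires the "early adoption" reading on at least two platforms before treating a trend as confirmed. The window size, the ±20% thresholds, the phase labels, and the platform series are all hypothetical; real systems typically use more robust statistics.

```python
def lifecycle_phase(counts, window=3, growth=0.2):
    """Classify a mention-count series (oldest first) by comparing the mean of
    the last `window` periods with the mean of the `window` periods before it."""
    if len(counts) < 2 * window:
        raise ValueError("need at least 2 * window data points")
    recent = sum(counts[-window:]) / window
    previous = sum(counts[-2 * window:-window]) / window
    if previous == 0:
        return "early adoption" if recent > 0 else "dormant"
    change = (recent - previous) / previous
    if change > growth:
        return "early adoption"
    if change < -growth:
        return "decline"
    return "peak"  # roughly flat


def confirmed_across_platforms(series_by_platform, min_platforms=2):
    """A trend counts as validated only if it is rising on several platforms."""
    rising = [p for p, s in series_by_platform.items()
              if lifecycle_phase(s) == "early adoption"]
    return len(rising) >= min_platforms, rising


series = {  # hypothetical monthly mention counts per platform
    "reddit": [5, 6, 8, 12, 18, 25],
    "trade_journals": [0, 0, 1, 1, 2, 3],
    "linkedin": [10, 10, 11, 10, 10, 11],
}
print(confirmed_across_platforms(series))  # (True, ['reddit', 'trade_journals'])
```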

| Feature | Traditional Manual Research | Botomation's Automated Approach |
|---|---|---|
| **Update Frequency** | Monthly or Quarterly | Real-time / Daily |
| **Data Volume** | Limited by human capacity | Millions of data points |
| **Accuracy** | Subject to human bias/error | High-precision programmatic extraction |
| **Cost** | High (expensive labor) | Low (customized automation) |
| **Lead Quality** | Often stale or outdated | Fresh and highly qualified |
| **Competitive Edge** | Reactive | Proactive and Predictive |

Building a Sturdy Automated Trend Monitoring System

Designing the technical architecture for a trend monitoring system is a task that requires a deep understanding of both software engineering and data science. At Botomation, we favor a microservices architecture for these projects. This approach allows us to separate the scraping logic, the data processing, and the user interface into independent components. If a specific website changes its layout, we only need to update one small part of the system rather than rebuilding the entire platform. This modularity ensures that your market intelligence remains uninterrupted.
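The microservices split is an infrastructure decision, but the same isolation principle can apply inside the scraping service itself. In this sketch, each site gets its own configuration entry (here, just the CSS class that wraps the price), so a redesign on one site means editing one entry. The domains, class names, and `SITE_SELECTORS` registry are hypothetical; the parser uses Python's standard `html.parser` module.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

VOID_TAGS = {"br", "img", "hr", "input", "meta", "link", "wbr", "source"}


class ClassTextExtractor(HTMLParser):
    """Collect text inside elements whose class attribute contains `target_class`."""

    def __init__(self, target_class):
        super().__init__()
        self.target_class = target_class
        self.depth = 0  # > 0 while inside a matching element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements never get an end tag, so don't track depth
        classes = (dict(attrs).get("class") or "").split()
        if self.depth:
            self.depth += 1
        elif self.target_class in classes:
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag not in VOID_TAGS:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth and data.strip():
            self.chunks.append(data.strip())


# Hypothetical per-site configuration: a layout change touches only one entry.
SITE_SELECTORS = {
    "competitor-a.example": "price",
    "competitor-b.example": "plan-cost",
}


def extract(url, html):
    host = urlparse(url).hostname
    target = SITE_SELECTORS.get(host)
    if target is None:
        raise KeyError(f"no extractor configured for {host}")
    parser = ClassTextExtractor(target)
    parser.feed(html)
    parser.close()
    return parser.chunks


page = '<div><span class="price big">$49</span><span class="note">per month</span></div>'
print(extract("https://competitor-a.example/pricing", page))  # ['$49']
```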

Scheduling and frequency are also critical considerations. Some data sources, like stock prices or breaking news, require per-minute updates, while others, like quarterly reports, only need to be checked once every few months. A well-designed system balances these needs to optimize resource usage and avoid being blocked by target websites. We also implement sophisticated data storage solutions, often utilizing time-series databases like TimescaleDB, which are specifically designed to handle the chronological nature of trend data and allow for rapid historical comparisons.
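Per-source frequencies can be modeled by giving each source its own minimum interval and running only the sources that are due. This sketch covers scheduling only; storage (for example in TimescaleDB) and the actual fetch are left out, and the source names, intervals, and `fetch` callback are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List, Optional


@dataclass
class Source:
    name: str
    interval: timedelta               # minimum time between checks for this source
    last_run: Optional[datetime] = None


def due_sources(sources: List[Source], now: datetime) -> List[Source]:
    """Sources never run before, or whose interval has elapsed since the last run."""
    return [s for s in sources if s.last_run is None or now - s.last_run >= s.interval]


def run_due(sources: List[Source], now: datetime, fetch: Callable[[Source], None]) -> None:
    """Fetch every due source and record when it ran."""
    for s in due_sources(sources, now):
        fetch(s)
        s.last_run = now


NOW = datetime(2026, 2, 17, 9, 0)
SOURCES = [
    Source("breaking-news", timedelta(minutes=1), NOW - timedelta(minutes=2)),
    Source("quarterly-reports", timedelta(days=90), NOW - timedelta(days=10)),
    Source("job-boards", timedelta(days=1)),
]
print([s.name for s in due_sources(SOURCES, NOW)])  # ['breaking-news', 'job-boards']
```

Enforcing a minimum interval per source also acts as a politeness limit, since no target is hit more often than its configured cadence.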

Designing the System Architecture

When deciding between cloud and on-premises deployment, the choice for most modern businesses is clearly the cloud. Cloud-based systems offer the scalability needed to handle sudden spikes in data volume, such as during a major industry event or a global crisis. Our experts typically utilize AWS or Google Cloud to host these systems, ensuring high availability and global reach. This also allows for easier integration with other business tools like your CRM or Slack, where alerts can be sent the moment a trend is detected.
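Slack's incoming webhooks accept an HTTP POST with a JSON body containing a `text` field, so a trend alert can be sent with the standard library alone. The sketch below builds the request separately from sending it; the webhook URL is a placeholder you would replace with one generated in your Slack workspace, and the message format is our own assumption.

```python
import json
import urllib.request


def build_slack_alert(webhook_url: str, trend: str, detail: str) -> urllib.request.Request:
    """Build a POST request carrying a JSON payload for a Slack incoming webhook."""
    payload = {"text": f":chart_with_upwards_trend: Trend detected: *{trend}*\n{detail}"}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 10) -> int:
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.status


# Placeholder URL: replace with a real webhook URL before calling send().
req = build_slack_alert(
    "https://hooks.slack.com/services/T000/B000/XXXX",
    "Pro-tier price cuts",
    "3 competitors changed Pro pricing this week",
)
```

Separating `build_slack_alert` from `send` keeps the message formatting testable without network access.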

The API design is the final piece of the architecture puzzle. It does not matter how good the data is if your team cannot access it easily. We build custom APIs that allow your existing software to "talk" to the trend monitoring system. This means your sales dashboard can automatically update with new leads, or your marketing software can adjust ad spend based on trending keywords. This level of integration transforms the monitoring system from a standalone tool into a central nervous system for your entire business.
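As a rough illustration of such an API, the sketch below exposes detected events as JSON over HTTP with the standard `http.server` module, filtered by an optional `category` query parameter. The `/events` route, the in-memory `EVENTS` list, and the field names are hypothetical; a production service would query the trend database and add authentication.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse

# Hypothetical in-memory store standing in for the trend database
EVENTS = [
    {"company": "Acme", "category": "Price Change", "detail": "Pro tier -15%"},
    {"company": "Globex", "category": "Product Launch", "detail": "New AI add-on"},
]


def query_events(events, category=None):
    """Return all events, or only those in `category` (case-insensitive)."""
    if category is None:
        return list(events)
    return [e for e in events if e["category"].lower() == category.lower()]


class TrendAPI(BaseHTTPRequestHandler):
    def do_GET(self):
        url = urlparse(self.path)
        if url.path != "/events":
            self.send_error(404)
            return
        category = parse_qs(url.query).get("category", [None])[0]
        body = json.dumps(query_events(EVENTS, category)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Blocking: serve until interrupted."""
    HTTPServer(("localhost", port), TrendAPI).serve_forever()
```

Calling `serve()` and requesting `http://localhost:8000/events?category=Price%20Change` would return only the Acme event; a CRM or dashboard can poll the same endpoint.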

Managing Data Processing Pipelines

The journey from raw HTML to a polished report happens within the ETL (Extract, Transform, Load) pipeline. This is where the heavy lifting of data cleaning occurs. We use Natural Language Processing (NLP) to classify scraped content into specific categories, such as "Product Launch," "Price Change," or "Executive Move." This classification is what makes the data actionable; it allows a sales manager to filter for only the events that require an immediate follow-up.
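The classification step can be prototyped with simple keyword rules before a trained NLP model is in place. This sketch is a rule-based stand-in, not an NLP classifier: each category scores one point per matching pattern, ties go to the first category listed, and the patterns themselves are hypothetical.

```python
import re

# Hypothetical keyword rules standing in for a trained NLP classifier
RULES = {
    "Product Launch": [r"\blaunch(es|ed)?\b", r"\bintroduc(es|ed|ing)\b", r"\bnow available\b"],
    "Price Change": [r"\bpric(e|es|ing)\b", r"\bdiscount\b", r"\b\d+% off\b"],
    "Executive Move": [r"\bappoint(s|ed)\b", r"\bnames? .* (CEO|CTO|CFO|VP)\b", r"\bsteps down\b"],
}
COMPILED = {label: [re.compile(p, re.I) for p in pats] for label, pats in RULES.items()}


def classify(text):
    """Return the category with the most matching patterns, or 'Other'."""
    scores = {label: sum(bool(p.search(text)) for p in pats)
              for label, pats in COMPILED.items()}
    best = max(scores, key=scores.get)  # first category wins ties
    return best if scores[best] > 0 else "Other"


for headline in [
    "Globex launches AI assistant for finance teams",
    "Acme cuts Pro plan pricing by 20%",
    "Initech appoints Jane Doe as CTO",
    "Quarterly weather update",
]:
    print(classify(headline), "<-", headline)
```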

Data normalization is another critical step in this process. Because data comes from thousands of different sources, it often arrives in inconsistent formats. Our pipelines automatically standardize dates, currencies, and company names, so that when you compare a news report from the UK with a social media post from the US, the data lines up consistently.

Quality assurance is built into every step of our pipeline. We use automated validation checks to ensure that the data being extracted is accurate and complete. If a scraper returns an empty field or a nonsensical value, the system flags it for review before it ever reaches your dashboard.
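A minimal sketch of normalization plus validation follows. The key detail for the UK/US comparison is that "03/02/2026" means 3 February in the UK but 2 March in the US, so date parsing must depend on the source's region. The region formats, currency table, company-suffix list, and required fields are illustrative assumptions.

```python
import re
from datetime import datetime
from decimal import Decimal

# Hypothetical per-region date formats: day-first in the UK, month-first in the US
DATE_FORMATS = {
    "UK": ["%d/%m/%Y", "%d %B %Y", "%Y-%m-%d"],
    "US": ["%m/%d/%Y", "%B %d, %Y", "%Y-%m-%d"],
}
CURRENCY_SYMBOLS = {"$": "USD", "£": "GBP", "€": "EUR"}
COMPANY_SUFFIX = re.compile(r"[,\s]+(inc|ltd|llc|plc|corp|gmbh)\.?$", re.I)


def normalize_date(raw, region):
    for fmt in DATE_FORMATS[region]:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None  # unparseable: validation will flag it


def normalize_price(raw):
    m = re.fullmatch(r"([$£€])\s*([\d,]+(?:\.\d+)?)", raw.strip())
    if not m:
        return None
    return CURRENCY_SYMBOLS[m.group(1)], Decimal(m.group(2).replace(",", ""))


def normalize_company(raw):
    return COMPANY_SUFFIX.sub("", raw.strip()).lower()


def validate(record):
    """Return a list of problems; an empty list means the record passes."""
    return [f"missing {k}" for k in ("company", "date", "price") if not record.get(k)]


def normalize(record, region):
    out = {
        "company": normalize_company(record.get("company", "")),
        "date": normalize_date(record.get("date", ""), region),
        "price": normalize_price(record.get("price", "")),
    }
    return out, validate(out)


uk, uk_problems = normalize({"company": "Acme Ltd.", "date": "03/02/2026", "price": "£1,299.00"}, "UK")
us, us_problems = normalize({"company": "Acme, Inc.", "date": "02/03/2026", "price": "$1299"}, "US")
print(uk["date"] == us["date"], uk["company"] == us["company"])  # True True
```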

Step-by-Step Tutorial: Setting Up a Basic Trend Monitor

While our team handles the complex heavy lifting, understanding the basic workflow can help you better define your business requirements. Here is how a standard automated monitoring project unfolds:

- Define Your Keywords and Targets: Identify the specific terms, competitors, and websites that are most relevant to your growth.

- Configure the Scraper Logic: Our experts write custom scripts to navigate target sites, bypass security, and extract specific data points.

- Set Up the Cleaning Pipeline: Data is passed through filters to remove HTML tags, ads, and irrelevant content.

- Apply AI Categorization: We use models to determine the intent and category of each piece of data.

- Establish Alert Triggers: Define the "thresholds" that trigger a notification, such as a competitor dropping their price by more than 10%.

- Connect to Your CRM: The final, cleaned data is pushed directly into your sales or marketing tools for immediate action.
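The "Establish Alert Triggers" step can be illustrated with the list's own example, a competitor dropping a price by more than 10%. This sketch compares two scraped price snapshots keyed by (competitor, plan); the snapshot format and values are hypothetical.

```python
def price_drop_alerts(previous, current, threshold=0.10):
    """Return (key, old, new, drop_percent) for prices that fell by more than
    `threshold` (a fraction) between two snapshots. Missing keys are skipped."""
    alerts = []
    for key, old in previous.items():
        new = current.get(key)
        if new is None or old <= 0:
            continue
        drop = (old - new) / old
        if drop > threshold:  # strictly more than 10% by default
            alerts.append((key, old, new, round(drop * 100, 1)))
    return alerts


yesterday = {("CompA", "pro"): 99.0, ("CompB", "pro"): 79.0, ("CompC", "starter"): 20.0}
today = {("CompA", "pro"): 79.0, ("CompB", "pro"): 75.0}
print(price_drop_alerts(yesterday, today))  # [(('CompA', 'pro'), 99.0, 79.0, 20.2)]
```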

Extracting Insights from Social Media and News

Social media has evolved from a place for personal updates into a massive, decentralized focus group. For B2B companies, platforms like LinkedIn and X (formerly Twitter) provide a direct line into the conversations of decision-makers. By scraping these platforms, we can identify "pain points" that are currently affecting your target audience. If a large number of professionals are complaining about a specific feature in a competitor's software, your sales team can use that information to position your product as the superior alternative.

News aggregation is equally important but requires a different technical approach. Unlike social media, news sites often have high-quality, long-form content that needs to be summarized. We use automated summarization tools to condense a 2,000-word industry report into a three-sentence brief for your executives. This allows your leadership team to stay informed on dozens of different topics without spending hours reading every morning. Cross-platform validation is the final step, where we ensure that a trend appearing on social media is being supported by official news sources.
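The AI summarization tools mentioned here are typically abstractive models; as a much simpler, swapped-in technique, the sketch below does extractive summarization: it scores each sentence by the average corpus frequency of its non-stopword terms and keeps the top-scoring sentences in their original order. The stopword list and sentence splitter are deliberately minimal assumptions.

```python
import re
from collections import Counter

STOPWORDS = {
    "the", "a", "an", "and", "or", "of", "to", "in", "on", "for", "is", "are",
    "was", "were", "it", "its", "that", "this", "with", "as", "by", "at", "be",
    "from", "has", "have",
}
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def _terms(text):
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text, max_sentences=3):
    """Keep the `max_sentences` highest-scoring sentences, in original order."""
    sentences = SENTENCE_SPLIT.split(text.strip())
    freq = Counter(_terms(text))

    def score(sentence):
        tokens = _terms(sentence)
        return sum(freq[t] for t in tokens) / (len(tokens) or 1)

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore reading order
    return " ".join(sentences[i] for i in keep)


report = (
    "Pricing is shifting across the sector. Vendors cut pricing on AI tiers. "
    "The conference venue was crowded. Pricing pressure on AI tiers keeps rising. "
    "Lunch was served at noon."
)
print(summarize(report, max_sentences=2))
```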

Mastering Social Media Trend Analysis

Scraping LinkedIn and Reddit requires a delicate touch due to their strict anti-scraping measures. Our team uses advanced proxy management and browser fingerprinting to ensure that our agents appear as regular users, preventing IP bans. Once we have access, we focus on engagement metrics like shares, comments, and "upvotes" as indicators of a trend's strength. In 2026, the depth of engagement is often a more reliable predictor of market longevity than simple reach.

Sentiment analysis within social threads also provides a nuanced view of the B2B decision cycle. By tracking how sentiment shifts during the launch phase of a competitor’s product, we can predict the likely churn rate or market reception. Influencer monitoring is another high-value strategy. In most industries, a handful of thought leaders tend to set the agenda. By tracking what these individuals are discussing, we can often predict what the rest of the industry will be discussing in three to six months. This "influencer-led" trend identification is a cornerstone of our automated prospecting service, as it helps you find leads who are following the latest industry advice.

Automated News Extraction and Categorization

The news cycle in 2026 is relentless. To keep up, we build scrapers that monitor thousands of RSS feeds, blogs, and news portals simultaneously. The challenge here is not getting the data; it is categorizing it. We use machine learning models to tag news stories by industry, company, and relevance score. This ensures that a growth marketer in the fintech space is not distracted by news about the healthcare sector unless there is a direct crossover, such as a new payment regulation.
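For the RSS portion of this pipeline, each feed can be parsed with the standard library and tagged by industry. The sketch below parses an inline RSS 2.0 sample (fetching thousands of feeds is omitted) and uses keyword-overlap counts as a simple, hypothetical stand-in for the machine-learning tagging described above; the keyword sets and feed content are invented for illustration.

```python
import re
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Hypothetical industry keyword sets (a stand-in for a trained tagging model)
INDUSTRY_KEYWORDS = {
    "fintech": {"payment", "payments", "banking", "lending"},
    "healthcare": {"hospital", "clinical", "patient"},
}

SAMPLE_FEED = """<rss version="2.0"><channel>
<item><title>New payment regulation hits banking apps</title>
<link>https://news.example/1</link><pubDate>Mon, 16 Feb 2026 09:00:00 GMT</pubDate></item>
<item><title>Hospital chain adopts clinical AI</title>
<link>https://news.example/2</link><pubDate>Mon, 16 Feb 2026 10:00:00 GMT</pubDate></item>
</channel></rss>"""


def parse_rss(xml_text):
    """Yield title, link, and parsed publication date for each RSS <item>."""
    root = ET.fromstring(xml_text)
    for item in root.iter("item"):
        pub = item.findtext("pubDate")
        yield {
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "published": parsedate_to_datetime(pub) if pub else None,
        }


def tag(story):
    """Relevance score per industry = number of keyword hits in the title."""
    words = set(re.findall(r"[a-z]+", story["title"].lower()))
    scores = {ind: len(words & kws) for ind, kws in INDUSTRY_KEYWORDS.items()}
    return {ind: s for ind, s in scores.items() if s > 0}


stories = list(parse_rss(SAMPLE_FEED))
fintech = [s["title"] for s in stories if "fintech" in tag(s)]
print(fintech)  # ['New payment regulation hits banking apps']
```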

Tracking the evolution of a story is where the real insight lies. We can see how a "breaking news" item on a small tech blog eventually makes its way to the Wall Street Journal. By the time it hits the mainstream media, the opportunity to act on it has often passed. Our systems capture these stories at the "blog" stage, giving you a head start of several days. This temporal advantage is a key component of the "New Way" of doing business that we champion at Botomation.

Monitoring Competitors for Strategic Advantages

Competitor monitoring is perhaps the most direct application of web scraping for industry trend analysis. By following 7 steps to automate competitor intelligence and keeping a constant eye on your rivals, you can see exactly how they are responding to market shifts. Are they hiring more developers? Are they cutting prices on their flagship product? Are they forming new partnerships? These are all signals of their internal strategy. Our custom tools can track these changes across their websites, social profiles, and public filings, providing you with a "shadow" view of their strategic decisions.
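Tracking changes on a competitor's pages usually comes down to comparing the latest snapshot with the previous one. This sketch uses the standard library's `difflib.ndiff` to report added and removed lines; the page text is hypothetical, and a real monitor would first extract the relevant text from the HTML.

```python
import difflib


def detect_changes(old_text, new_text):
    """Return None if the snapshots are identical, else the added/removed lines."""
    if old_text == new_text:
        return None
    added, removed = [], []
    for line in difflib.ndiff(old_text.splitlines(), new_text.splitlines()):
        if line.startswith("+ "):
            added.append(line[2:])
        elif line.startswith("- "):
            removed.append(line[2:])
        # lines starting with "  " are unchanged; "? " lines are intraline hints
    return {"added": added, "removed": removed}


before = "Pro plan: $99/month\nSupport: email"
after = "Pro plan: $79/month\nSupport: email\nNew: AI assistant"
print(detect_changes(before, after))
```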

Netflix is often cited as a case study in this regard. It is widely reported to analyze not just what people watch on its own platform but also what is trending on social media and competitor services, which helps it greenlight shows that align with current cultural trends before those trends peak. While your business might not be a global streaming giant, the same principles apply. Knowing what your competitors are doing allows you to either follow suit or, more importantly, find the gaps they are leaving behind.

Analyzing Competitor Content and Product Moves

A competitor's blog is often a roadmap of their future intentions. When a company starts publishing heavily about a specific topic, it's a clear sign they are preparing to launch a related product or service. Our systems monitor these content shifts and alert you to the change in focus. Similarly, we track product pages for any changes in features, pricing, or documentation. Even a small change in the "Terms of Service" can indicate a shift in how they handle their customers or data.

Resource allocation is another "tell" that we can track. By scraping job boards like Indeed or LinkedIn, we can see which departments a competitor is investing in. If they are suddenly hiring ten new AI researchers, you can be certain an AI-related update is coming. This type of intelligence is invaluable for sales teams, as it allows them to proactively address the "What about [Competitor's] new feature?" question before the customer even asks it.

Tracking the Trend Adoption Timeline

Not all companies adopt trends at the same speed. Some are "early adopters," while others are "laggards." By measuring the response time of your competitors to new industry shifts, we can create a profile of their strategic agility. If a new regulation is announced and Competitor A updates their website within 24 hours while Competitor B takes three weeks, you know exactly who your most dangerous rival is. This data helps you prioritize your own efforts and resources.
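Measuring strategic agility reduces to the time between an industry event and each competitor's first detected response. The sketch mirrors the example above with hypothetical timestamps, assuming the change-detection step already records when each competitor's site first changed.

```python
from datetime import datetime

announcement = datetime(2026, 2, 2, 9, 0)  # hypothetical regulation announcement
first_update = {  # first detected website change per competitor (hypothetical)
    "Competitor A": datetime(2026, 2, 3, 8, 0),
    "Competitor B": datetime(2026, 2, 23, 17, 0),
    "Competitor C": None,  # no change detected yet
}


def response_lags(event_time, updates):
    """Hours from the event to each competitor's first update, fastest first.
    Competitors with no detected update are listed last with None."""
    lags = {
        name: (ts - event_time).total_seconds() / 3600 if ts else None
        for name, ts in updates.items()
    }
    return sorted(lags.items(), key=lambda kv: (kv[1] is None, kv[1] or 0))


print(response_lags(announcement, first_update))
# [('Competitor A', 23.0), ('Competitor B', 512.0), ('Competitor C', None)]
```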

Predicting a competitor's next move becomes much easier when you have years of historical trend data to look at. We can identify patterns in their behavior, such as a tendency to launch new products every September or to drop prices whenever a certain market indicator hits a specific level. This predictive capability transforms your sales team from being reactive to being proactive. You aren't just responding to the market; you are anticipating it, thanks to the custom tools built by the Botomation team.

Visualizing Trends for Better Decision Making

The final stage of any automated system is the presentation of data. Raw spreadsheets are rarely useful for high-level decision-makers. Instead, we create interactive dashboards that turn complex data points into visual stories. A line graph showing the rise of a specific keyword over six months is much easier to understand than a list of 5,000 dates and counts. These visualizations allow stakeholders to see the "big picture" at a glance and drill down into the details only when necessary.

Predictive trend analysis is the "holy grail" of this process. By using historical data and current trajectories, we can forecast where a trend will be in six or twelve months. This is not crystal ball gazing; it is statistical modeling based on hard data. Whether you are deciding on next year's budget or planning a new marketing campaign, having a data-backed forecast provides a level of confidence that manual research simply cannot match.
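The simplest form of such statistical modeling is an ordinary least-squares line fitted to historical counts and extended forward. The sketch below is only that baseline; real trend forecasts usually need models that handle seasonality and saturation, and the monthly series is hypothetical.

```python
def fit_line(ys):
    """Ordinary least-squares fit of y = slope * x + intercept, with x = 0..n-1."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(range(n), ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def forecast(ys, periods_ahead):
    """Extrapolate the fitted line `periods_ahead` periods past the last point."""
    slope, intercept = fit_line(ys)
    return slope * (len(ys) - 1 + periods_ahead) + intercept


monthly_mentions = [100, 120, 140, 160, 180, 200]  # hypothetical six-month history
print(forecast(monthly_mentions, 6))  # 320.0
```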

Creating Actionable Trend Reports

A good trend report should do more than just list facts; it should provide a "So What?" for every data point. At Botomation, we design our reporting systems to highlight actionable opportunities. If the data shows a trend is rising, the report should suggest specific actions, such as "Increase ad spend on [Keyword]" or "Target [Competitor's] disgruntled customers." This bridge between data and action is what makes our service a premium agency offering rather than just a technical tool.

Risk assessment is also a key part of our reporting. Not every trend is an opportunity; some are threats. If a new technology is making your current service offering obsolete, you need to know that as early as possible. Our reports include an "Impact Analysis" that ranks trends based on their potential to disrupt your business. This allows you to allocate your defensive resources just as effectively as your offensive ones.

Integrating Intelligence with Business Planning

The ultimate goal of automated market research is to have it integrated so deeply into your business that it becomes invisible. Your planning meetings should start with the latest trend data already on the screen. Your sales team should receive their daily leads without having to log into a separate system. This level of integration is what we strive for with every client. By removing the friction between "knowing" and "doing," we help you build a more agile, responsive, and profitable organization.

In the fast-moving landscape of early 2026, the "Old Way" of manual research is a recipe for stagnation. The "New Way", partnering with experts to build custom, automated intelligence systems, is the most dependable path to consistent growth. As the volume of data on the web continues to explode, the value of having a system that can filter, analyze, and present that data in real time will only increase.

Frequently Asked Questions

How does web scraping for industry trend analysis differ from using a standard SEO tool?

Standard SEO tools focus primarily on keywords and search rankings, which are reactive metrics. Web scraping for industry trend analysis is much broader: it monitors news, social media, competitor job boards, and regulatory filings (the same sources used for web scraping for competitor intelligence) to surface the causes behind keyword shifts, often before those shifts appear in search data. It provides a holistic view of market strategy, not just search engine performance.

Is automated web scraping legal for monitoring competitors?

Generally yes, provided it is conducted ethically and complies with data privacy laws like GDPR and CCPA, although the rules vary by jurisdiction and by each site's terms of service. Our team at Botomation follows industry best practices, focusing on publicly available data and respecting robots.txt files where applicable. We design the data collection process to be transparent and legally sound, so your business remains protected.

How much time can my team really save by automating this process?

On average, a B2B sales team of five people can save upwards of 100 hours per month. Instead of each person spending five hours a week on manual research, they receive a curated list of fresh leads and market insights every morning. That reclaimed time can go to high-value activities like closing deals and building relationships.

Can these tools handle websites with complex security or "anti-bot" measures?

Absolutely. This is one of the primary reasons companies partner with Botomation rather than trying to use off-the-shelf software. Our experts use advanced techniques like residential proxy rotation, headless browser management, and behavioral simulation to ensure our scrapers are indistinguishable from human visitors, allowing us to gather data from even the most protected sites.

How often is the data updated in a custom monitoring system?

The frequency is entirely up to you. We can build systems that update in real-time for fast-moving news and social trends, or systems that run daily or weekly for broader market shifts. Most of our clients find that a daily "morning brief" provides the perfect balance of freshness and actionability for their sales and marketing teams.

What kind of ROI can I expect from automated trend analysis?

Most clients see a return on investment within the first quarter. With decision-making up to 67% faster, businesses can capture early-adopter market share and avoid investing in declining trends. Additionally, the reduction in manual labor costs typically covers the cost of the automation system within months.

Automated industry trend analysis provides businesses with significant competitive advantages in fast-moving markets. Success requires careful planning of technical architecture, data sources, and analysis methodologies. By moving away from the slow, manual processes of the past and embracing the custom, automated solutions of the future, you position your company at the forefront of your industry.

Ready to automate your growth? Stop losing money to manual research and outdated lead lists today. Book a call and let our experts build the market intelligence system your business deserves.

